Manuel Alejandro de Brito Fontes

@aledbf

ID: 94353906

03-12-2009 15:45:29

426 Tweets

362 Followers

457 Following

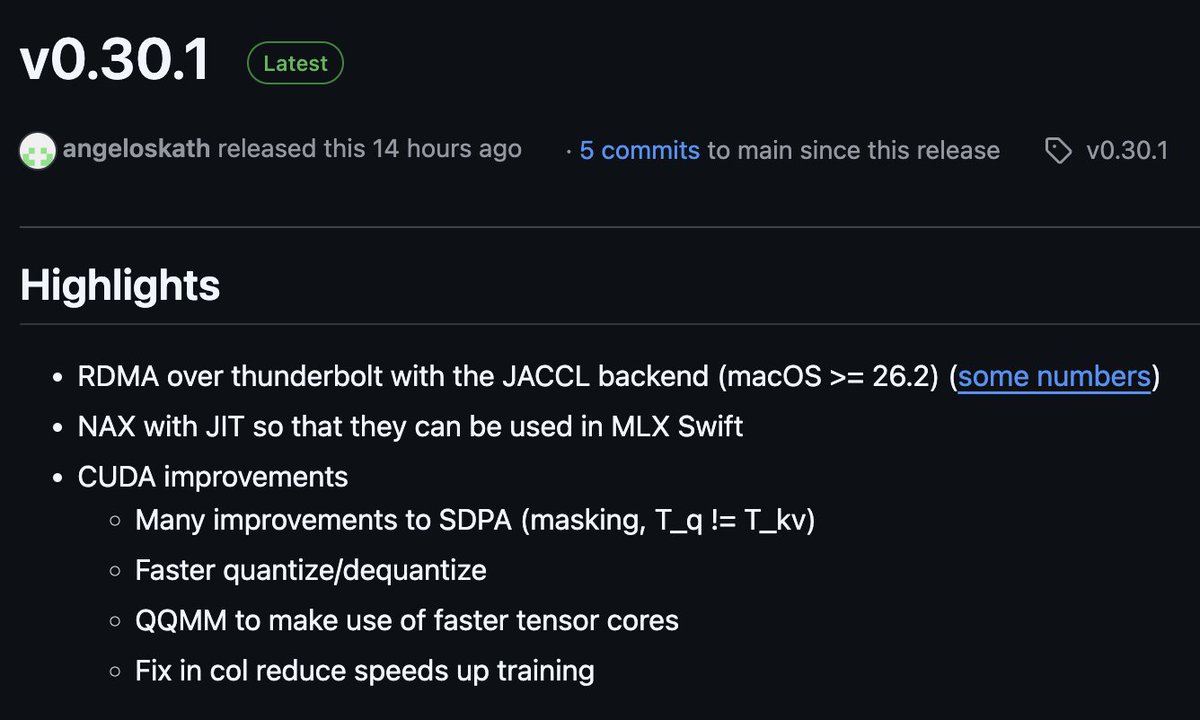

The latest MLX is out! And it has a new distributed back-end (JACCL) that uses RDMA over TB5 for super low-latency communication across multiple Macs. Thanks to Angelos Katharopoulos.