terenceliu.bnb

@terenceliu4444

CloneX | Mfers | Moonbirds | WoW | Otherdeed #71165 | Valhalla | CryptoAdz | AKCB | 3landers | galaxy eggs | dour darcels

ID: 970681186061312001

05-03-2018 15:23:23

1.1K Tweets

1.1K Followers

2.2K Following

No Signal NYC was the first event and it was a vibe. We wanna thank every single BEAST who made it out. 🗽 Shout out to Alpha Pro Club @EarlyAccessPass METAWIN TECHNO AND CHILL for making it slap.🔥 Who’s ready to join next #AKCB Tokyo in June? 🇯🇵

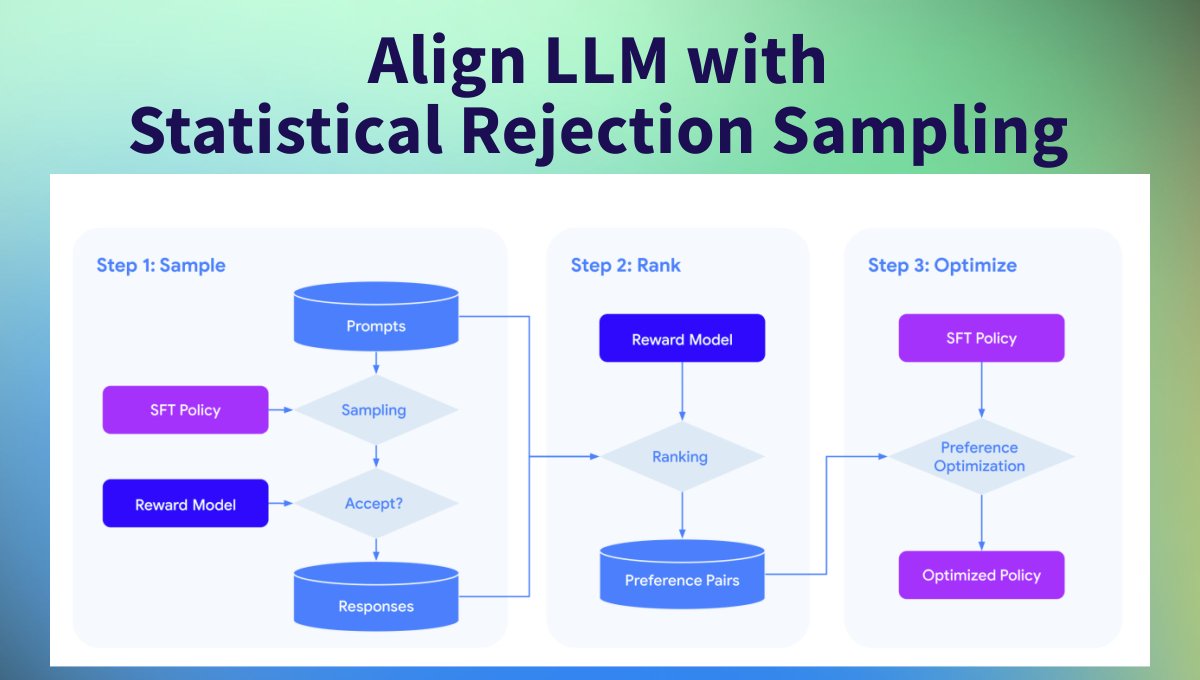

Aligning LLMs with Human Preferences is one of the most active research areas🧪 RLHF, DPO, and SLiC are all techniques for aligning LLMs, but they come with challenges. 🥷 Google DeepMind proposes a new method, “Statistical Rejection Sampling Optimization (RSO)” 🧶

People are realizing RLHF can be easy with DPO and SLiC-HF. If you were wondering how they compare, the answer is they are pretty similar and our paper (arxiv.org/abs/2309.06657 led by terenceliu.bnb) shows the math. The biggest question is whether you should train a preference