Sushant Kumar

@sushantkumar_23

Director of AI, SproutsAI

Prev: Head of AI, SquareYards, Co-founder Azuro, Bank of America, IIT Bombay 2014

ID: 27609592

https://sushant-kumar.com 30-03-2009 09:21:06

2.2K Tweets

1.1K Followers

2.2K Following

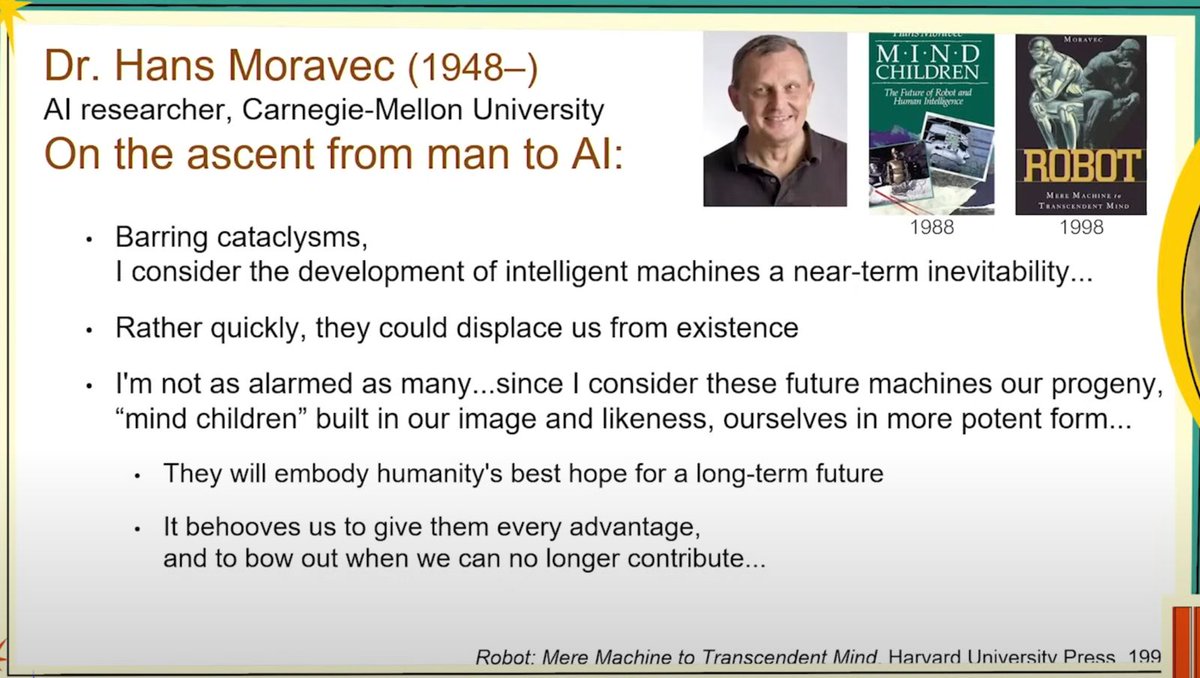

This lecture is a masterpiece from Richard Sutton that's moving the conversation forward on what the future with AI could look like. You can literally feel the clarity that comes from spending half a century thinking deeply about intelligence. youtube.com/watch?v=FLOL2f…

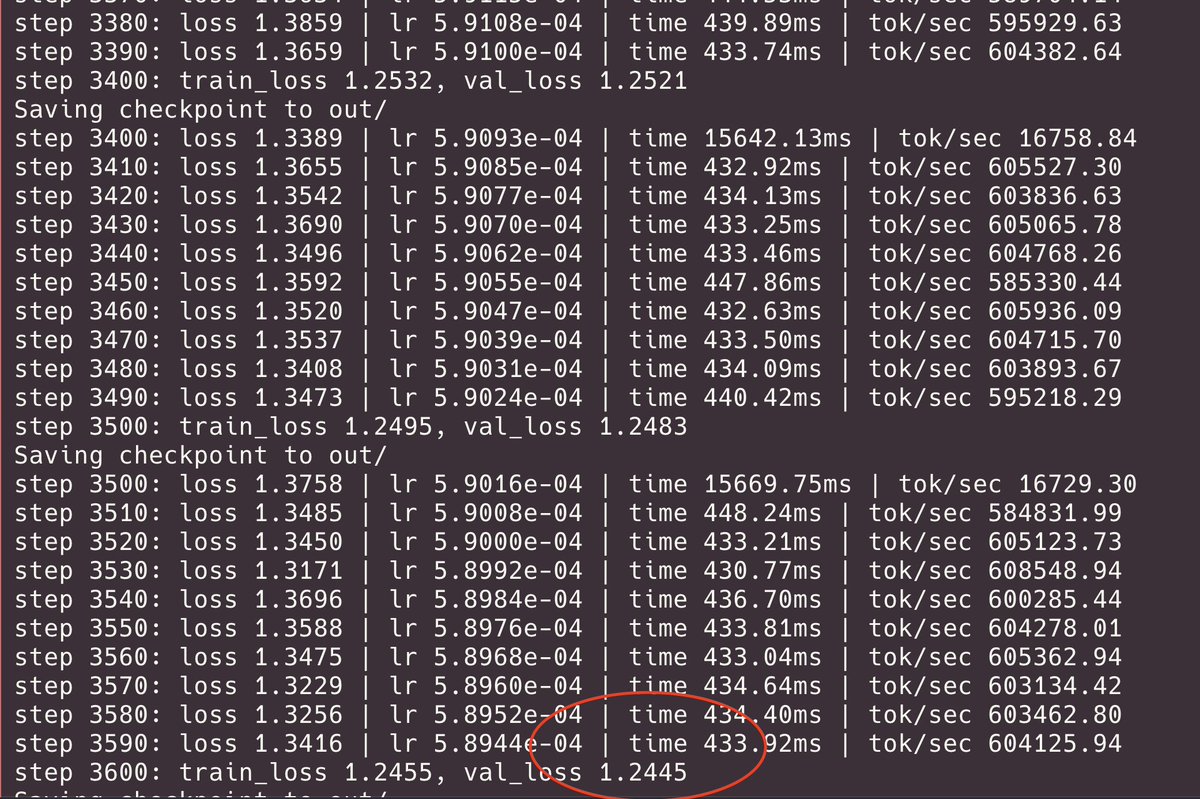

Exactly what I was searching the internet for today! Thanks, Sebastian Raschka, for always being on point!