STbomba 🇺🇦

@st_bomba

Digital art & 📸 | PP

ID: 1463477365388967940

24-11-2021 11:59:39

1.1K Tweets

2.2K Followers

3.3K Following

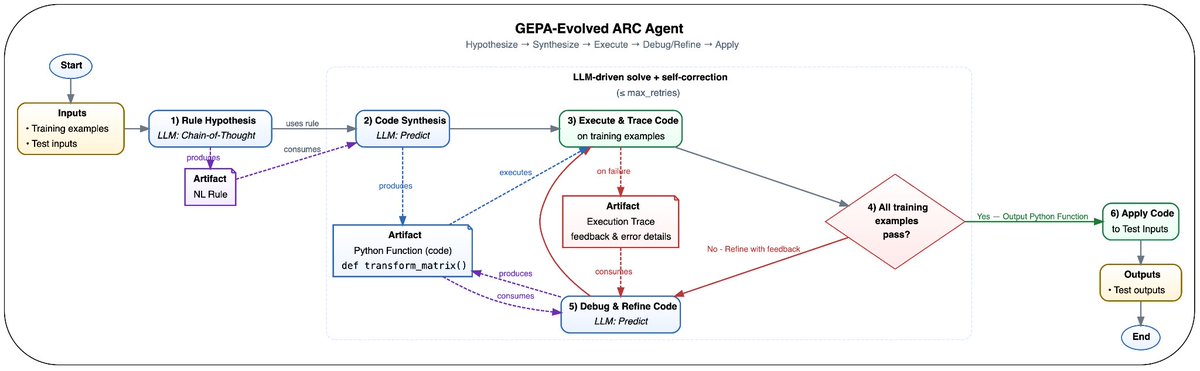

Harshad Saykhedkar Asfi In this context, GEPA works as a prompt optimizer, so the end result is a prompt (or multiple prompts for a multi-agent system, one for each component). However, one aspect that does not get highlighted enough is that GEPA is a text evolution engine: Given a target metric, GEPA

Announcing “K-Dense”, a multi-agent AI scientist that has already made a new discovery in aging research 🧵 Ashwin Gopinath & @BioStateAI tinyurl.com/3dmraa5k

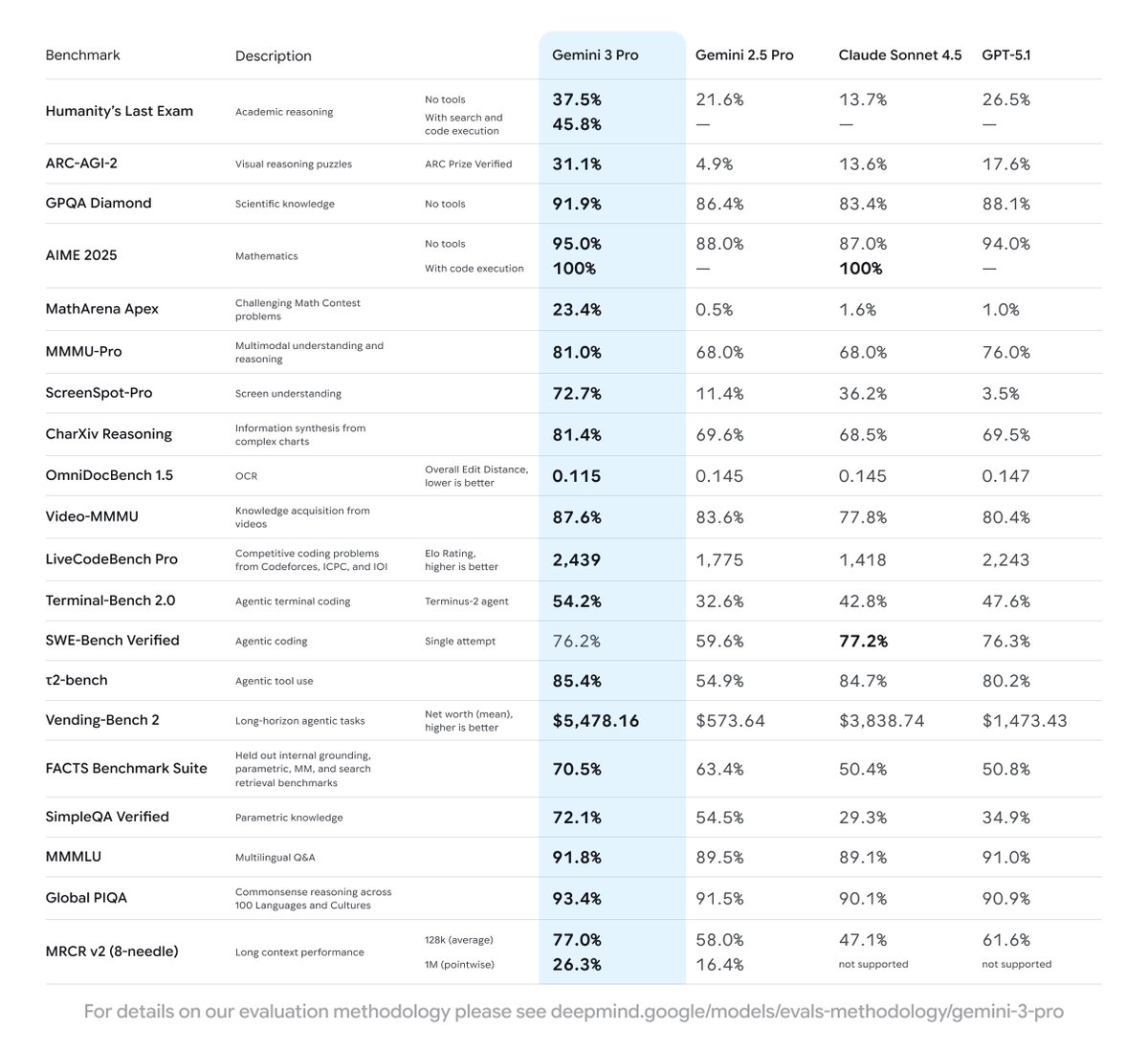

Our first release is Gemini 3 Pro, which is rolling out globally starting today. It significantly outperforms 2.5 Pro across the board: 🥇 Tops LMArena and WebDev lmarena.ai leaderboards 🧠 PhD-level reasoning on Humanity’s Last Exam 📋 Leads long-horizon planning on Vending-Bench 2

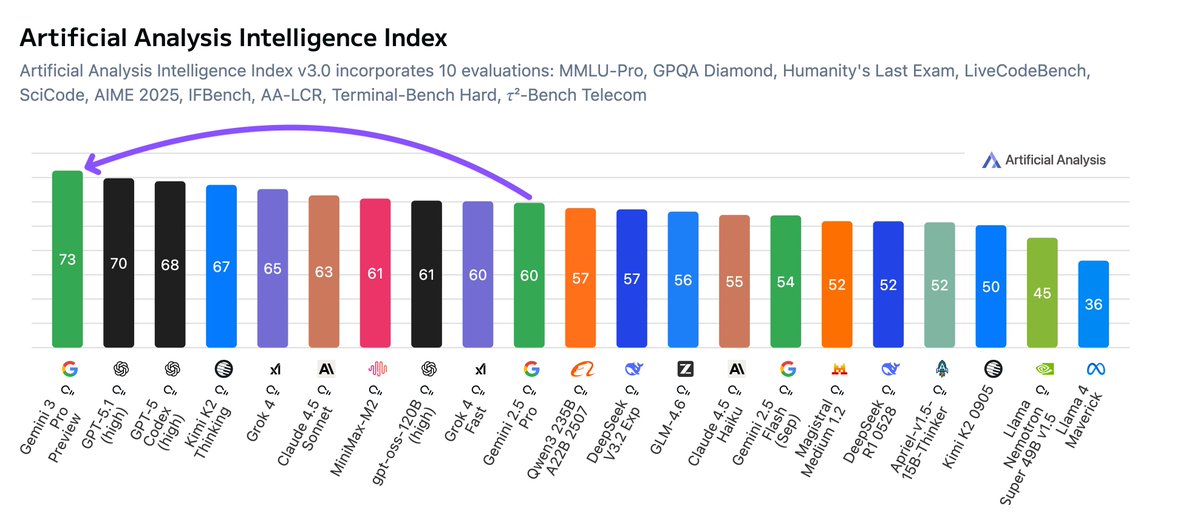

Gemini 3 Pro is the new leader in AI. Google has the leading language model for the first time, with Gemini 3 Pro debuting +3 points above GPT-5.1 in our Artificial Analysis Intelligence Index. Google DeepMind gave us pre-release access to Gemini 3 Pro Preview. The model