Soran Ghaderi

@soranghadri

Looking for PhD position | Reasoning 🧠💻 (Diffusion/EBMs/Flow-based/RL) | AI MSc @uni_of_essex

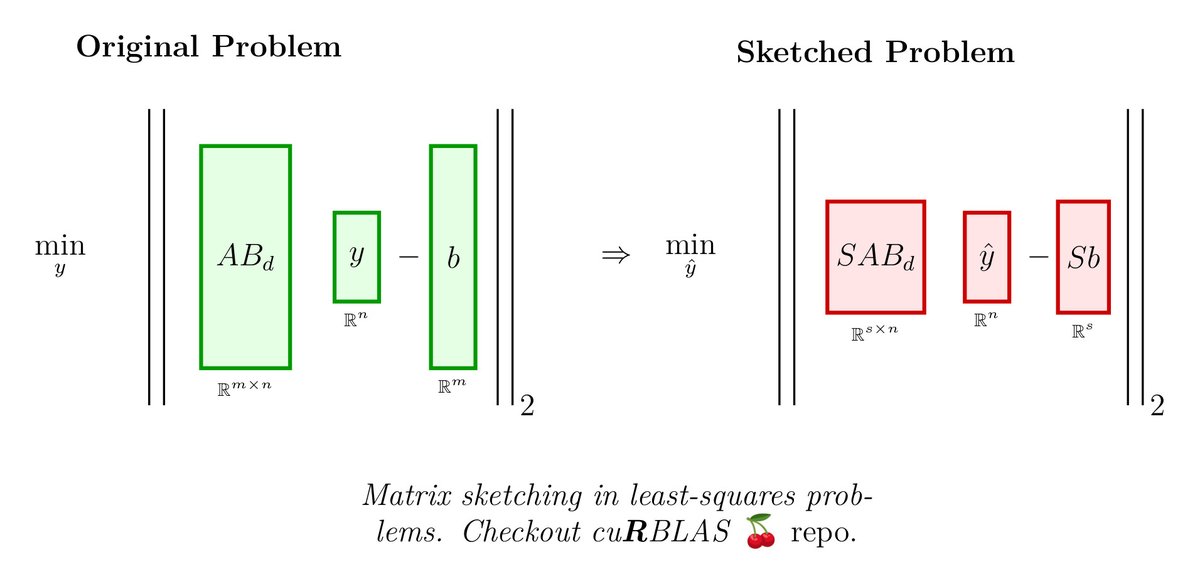

GitHub: github.com/soran-ghaderi | 🍒 cuRBLAS/🍓 TorchEBM libs

ID: 891620113769738240

https://soran-ghaderi.github.io/ 30-07-2017 11:22:55

943 Tweets

186 Followers

735 Following

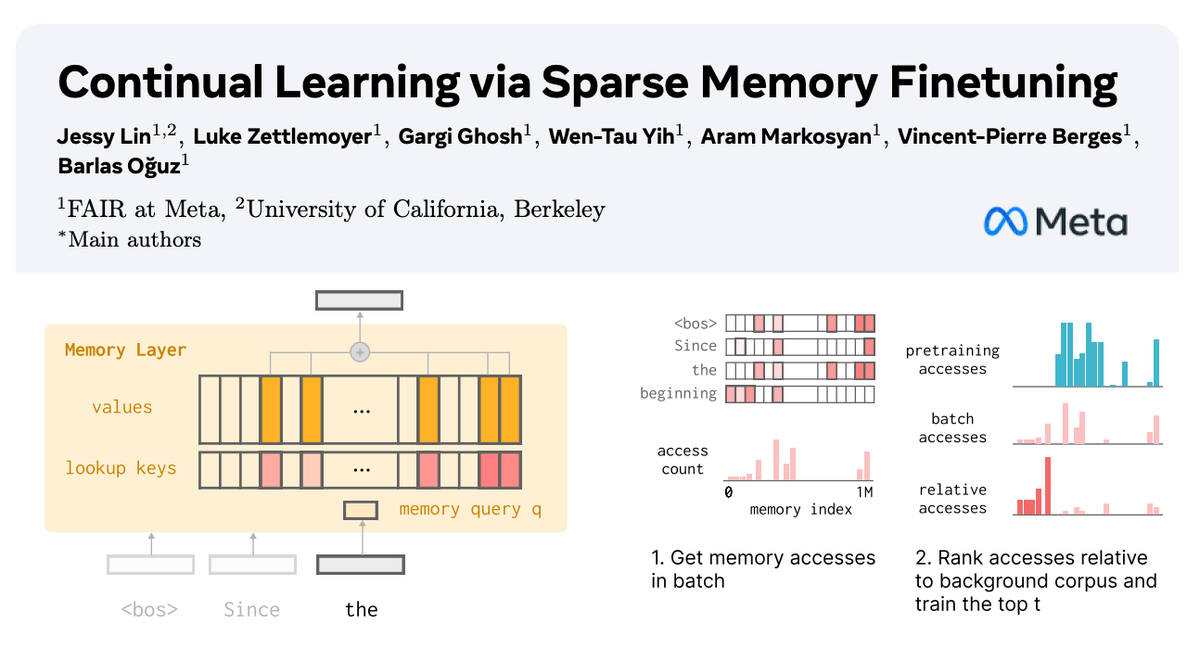

🧠 How can we equip LLMs with memory that allows them to continually learn new things? In our new paper with AI at Meta, we show how sparsely finetuning memory layers enables targeted updates for continual learning, with minimal interference with existing knowledge. While full

🇨🇳 Meituan, the Chinese food-delivery company often compared to DoorDash, launched LongCat-Video on Hugging Face under the MIT License. It is a relatively small 13.6B model that unifies Text-to-Video, Image-to-Video, and Video-Continuation, targeting minutes-long coherent clips and fast 720p 30fps output. It frames every task as continuing

Sharing our work on reasoning with EBMs at the NeurIPS Conference! We learn an EBM over simple subproblems and combine the EBMs at test time to solve complex reasoning problems (3-SAT, graph coloring, crosswords). The approach generalizes well to complex 3-SAT, graph coloring, and N-queens problems.

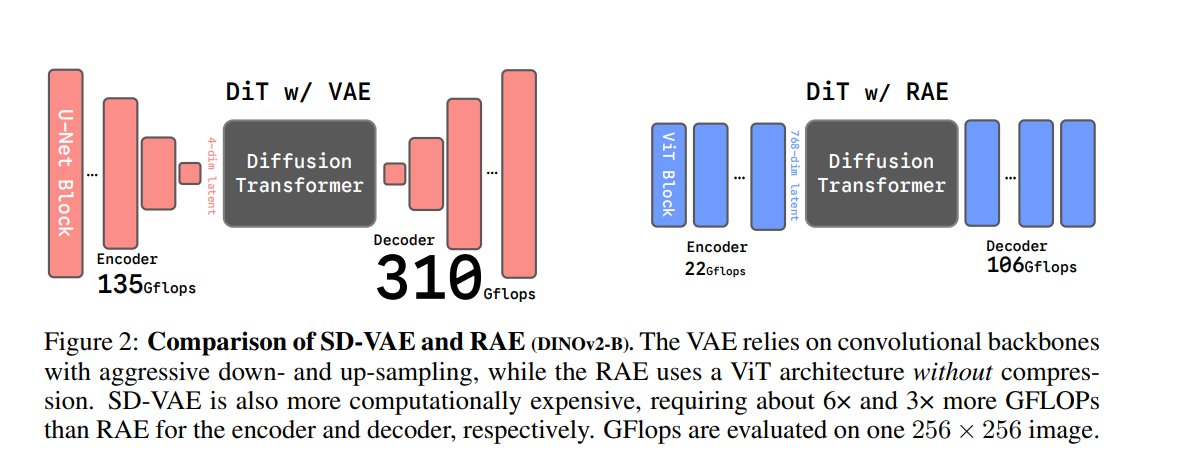

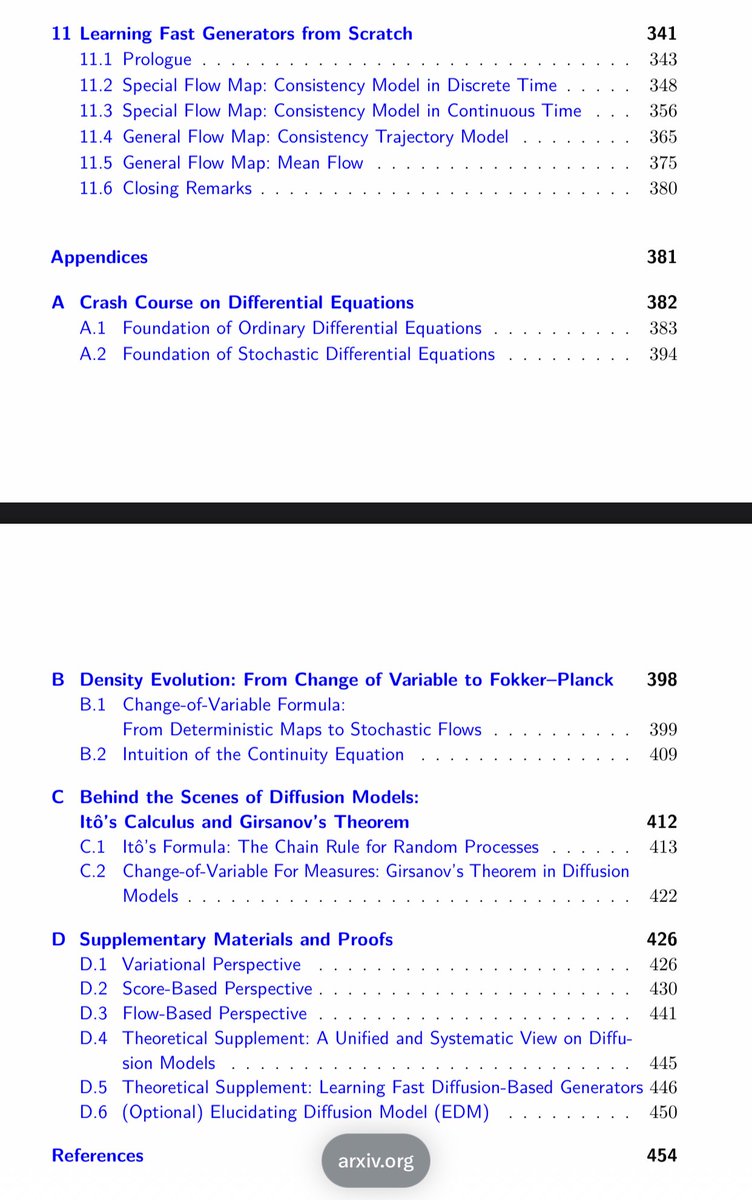

Applications change, but the principles are enduring. After a year's hard work led by Chieh-Hsin (Jesse) Lai, we are really excited to share this deep, systematic dive into the mathematical principles of diffusion models. This is a monograph we always wished we had.