Lexi

@orenguteng_ai

AI Innovation - (un)alignment research

huggingface.co/Orenguteng

ID: 1783705442817830913

26-04-2024 03:51:49

55 Tweets

73 Followers

25 Following

I'm glad you liked it bruh, Adam Grant. Stay tuned for V3 and the 70B model, coming very soon!! Appreciate your feedback

Fixed a bug which caused all training losses to diverge for large gradient accumulation (GA) sizes. 1. First reported by Benjamin Marie: GA is supposed to be mathematically equivalent to full-batch training, but losses did not match. 2. We reproduced the issue, and further investigation…
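
The tweet above is cut off before it names the cause, so the following is only a minimal sketch of one common way gradient accumulation can stop matching full-batch training: averaging per-micro-batch mean losses when micro-batches contain different numbers of tokens. The loss values and function names are hypothetical, and this is not claimed to be the exact bug or fix described in the tweet.

```python
# Hypothetical per-token losses for two micro-batches of different lengths.
micro_batches = [
    [2.0, 4.0],            # 2 tokens
    [1.0, 1.0, 1.0, 1.0],  # 4 tokens
]


def full_batch_loss(batches):
    """Mean loss over every token, as if all micro-batches were one batch."""
    tokens = [loss for batch in batches for loss in batch]
    return sum(tokens) / len(tokens)


def naive_accumulated_loss(batches):
    """Average of per-micro-batch means; short batches get too much weight."""
    return sum(sum(b) / len(b) for b in batches) / len(batches)


def token_normalized_accumulated_loss(batches):
    """Accumulate summed losses, each divided by the total token count."""
    total_tokens = sum(len(b) for b in batches)
    return sum(sum(b) / total_tokens for b in batches)


print("full batch:      ", full_batch_loss(micro_batches))
print("naive GA:        ", naive_accumulated_loss(micro_batches))
print("token-normalized:", token_normalized_accumulated_loss(micro_batches))
```

In this toy case the naive average differs from the full-batch mean, while dividing by the total token count recovers it. When every micro-batch has the same number of tokens, the two accumulation schemes coincide.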

Quantizing a model to 4 bits will sometimes break models entirely! Unsloth AI now has a dynamic 4-bit quant format which chooses some parameters to be in 16-bit! We find that: 1. You need to check activation and weight quantization errors. Relying on just one of them does not work. 2. …
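
To illustrate why the tweet above asks for both error checks, here is a toy sketch. It uses plain symmetric absmax rounding to a 4-bit grid as a stand-in, which is an assumption and not Unsloth's dynamic format. The weights, inputs, and helper names are all hypothetical.

```python
def quantize_absmax_4bit(values):
    """Round-trip values through a symmetric 4-bit grid (integers -7..7)."""
    scale = max(abs(v) for v in values) / 7 or 1.0  # avoid a zero scale
    return [round(v / scale) * scale for v in values]


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def dot(w, x):
    """Output of a single linear unit for weights w and inputs x."""
    return sum(wi * xi for wi, xi in zip(w, x))


weights = [0.1, -0.2, 0.15, 7.0]  # hypothetical row with one large outlier
inputs = [10.0, 10.0, 10.0, 0.0]  # hypothetical activations on the small weights

quantized = quantize_absmax_4bit(weights)
weight_error = mean_abs_error(weights, quantized)
activation_error = abs(dot(weights, inputs) - dot(quantized, inputs))

print("quantized weights:", quantized)
print("weight error:     ", weight_error)
print("activation error: ", activation_error)
```

The outlier sets the scale, so the three small weights all round to zero. The mean weight error looks small next to the weight range, yet the layer's output for these inputs is lost entirely, which is the kind of case where keeping some parameters in 16-bit could help.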

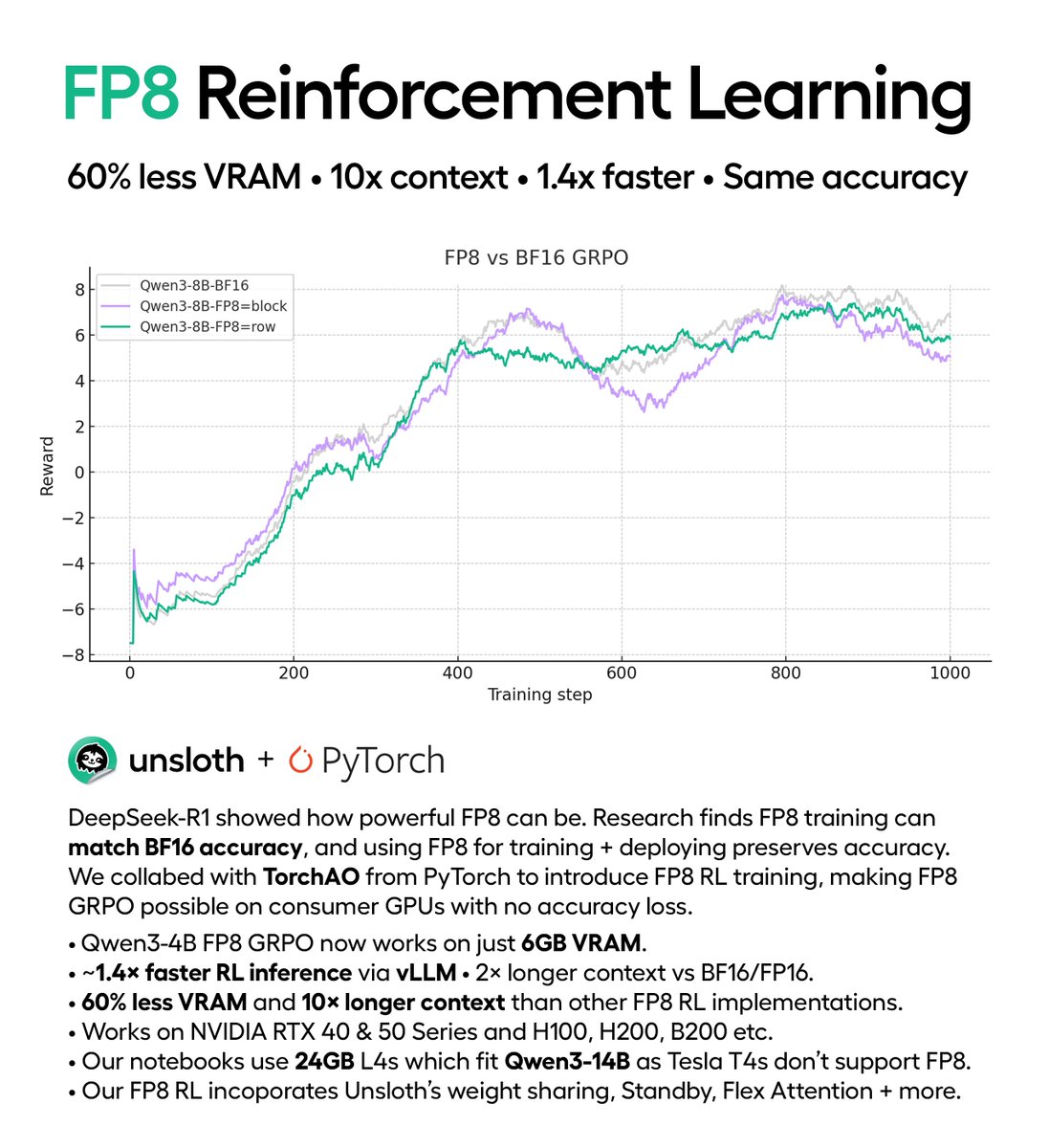

Train your own reasoning LLM using DeepSeek's GRPO algorithm with our free notebook! You'll transform Llama 3.1 (8B) to have chain-of-thought. Unsloth makes GRPO use 80% less VRAM. Guide: docs.unsloth.ai/basics/reasoni… GitHub: github.com/unslothai/unsl… Colab: colab.research.google.com/github/unsloth…