Guangsheng Bao

@gshbao

Ph.D. candidate @NlpWestlake @Westlake_Uni and @ZJU_China, supervised by Prof. Yue Zhang. Previously employed by @Microsoft and @AlibabaGroup.

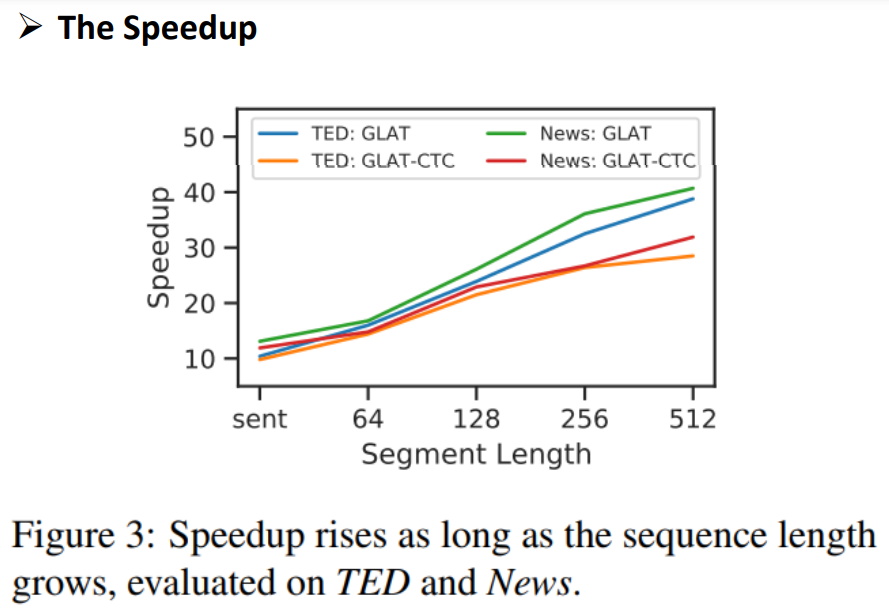

ID: 1503967797428195329

https://baoguangsheng.github.io/ 16-03-2022 05:34:29

27 Tweets

52 Followers

120 Following

⛄️ Excited to share our work on causal analysis of LLMs at COLING 2025! 💖 Hongbo, Linyi Yang, Cunxiang Wang. "How Likely Do LLMs with CoT Mimic Human Reasoning?" Paper: arxiv.org/pdf/2402.16048

![Hongbo (@hongbo00231523) on Twitter photo [1/5] 🧵 Thrilled to unveil our latest research on "Causal Analysis of CoT in LLMs"! We delve into the intricate dynamics between Chain of Thought reasoning and answer generation in LLMs, revealing some unexpected insights. 🤖💭 📄 Read the full paper: arxiv.org/abs/2402.16048](https://pbs.twimg.com/media/GIJD97rbEAAuPVi.png)

![Hongbo (@hongbo00231523) on Twitter photo [3/5] Employing causal analysis, we dissect the cause-effect relationships between CoTs/instructions and answers in LLMs. Our analysis exposes the Structural Causal Model (SCM) LLMs mimic, highlighting significant differences from human reasoning processes.](https://pbs.twimg.com/media/GIJESfcbgAAdQXq.jpg)

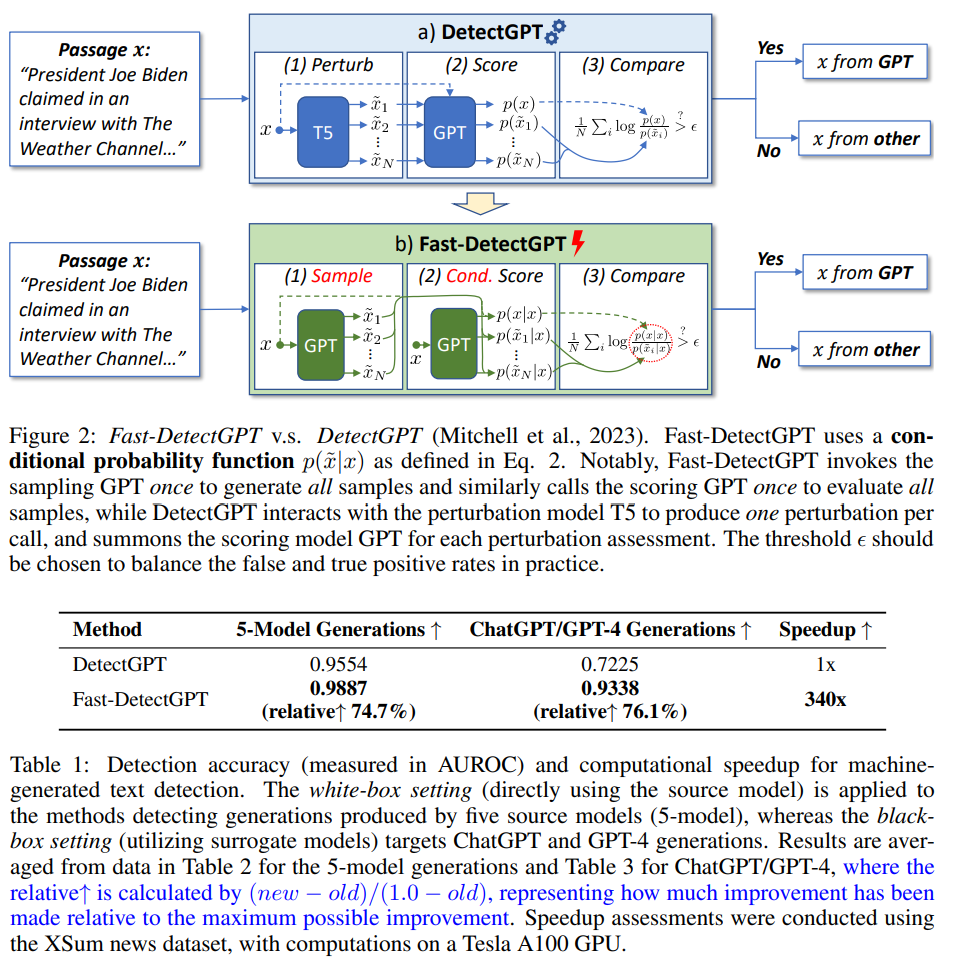

![Guangsheng Bao (@gshbao) on Twitter photo Exciting news! 🎉 Our online demo for Fast-DetectGPT is now live! 🚀 Experience lightning-fast text detection in action. Give it a try here: [region-9.autodl.pro:21504] Let us know what you think! #FastDetectGPT #AI #TextDetection](https://pbs.twimg.com/media/GO1ZxQDW4AAfJkh.png)

LLMs often rely on correlations, not causation. ❤️🔥

Our causal analyses show that RLVR-trained LRMs move closer to true causal reasoning — but distilled LRMs and LLMs do not⁉️

🧠 Paper: "Correlation or Causation?"

📘 [arxiv.org/pdf/2509.17380](https://arxiv.org/pdf/2509.17380)

![Twitter photo](https://pbs.twimg.com/media/G2zobU2aQAA3UzP.jpg)