david yan

@dzyan01

meandering researcher @PrincetonVL

ID: 1904013727235457024

https://david-yan1.github.io 24-03-2025 03:33:52

0 Tweet

12 Followers

69 Following

Vision-language models are getting better every day. Can we use them to improve image compression? Yes! For my internship, working w/ Google DeepMind and Google Research, we designed VLIC, a diffusion autoencoder post-trained with VLM preferences. Our preprint is out today! A🧵:

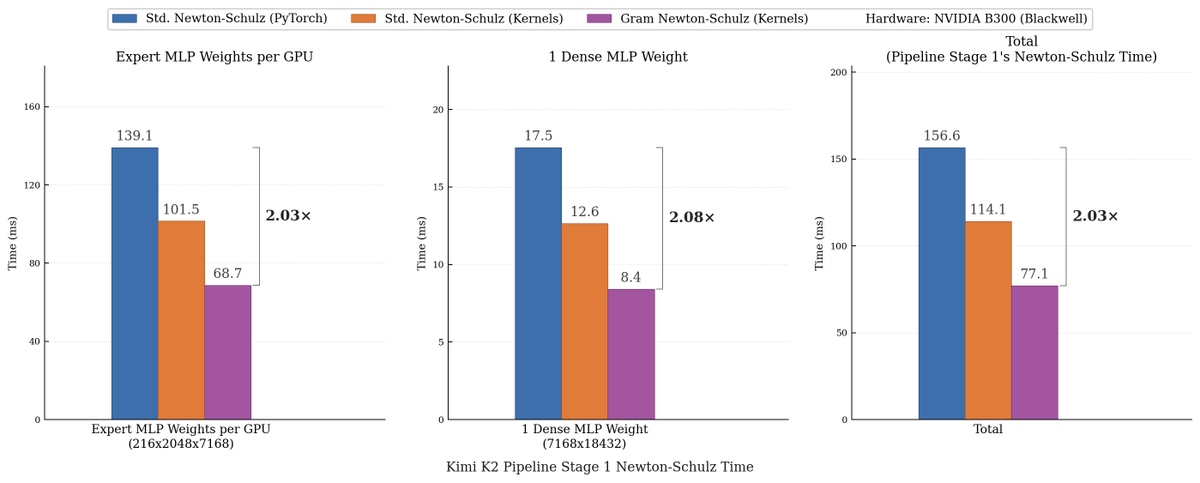

Excited to share our new paper on sharp capacity scaling of the Muon optimizer! Joint work with Eshaan Nichani, Denny Wu, Alberto Bietti, and Jason Lee: arxiv.org/abs/2603.26554 (1/7)