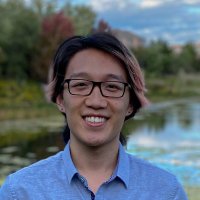

🇮🇱See Gh Naaaa Trah🇹🇼

@cygnatra

🇺🇦🇺🇸🇪🇺

ID: 1108737101904953344

21-03-2019 14:28:19

299 Tweets

62 Followers

1.1K Following

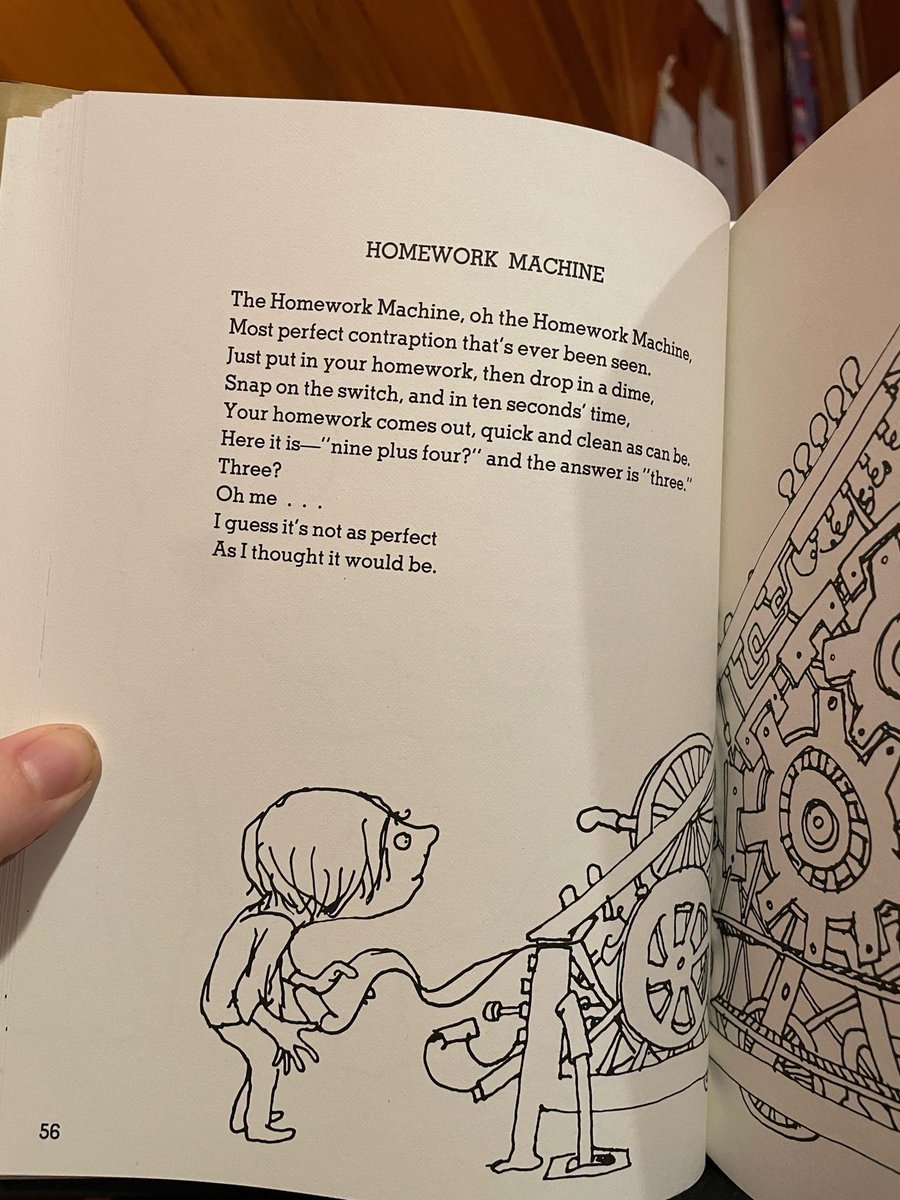

Chris Murphy 🟧 You're being played by people who want regulatory capture. They are scaring everyone with dubious studies so that open source models are regulated out of existence.

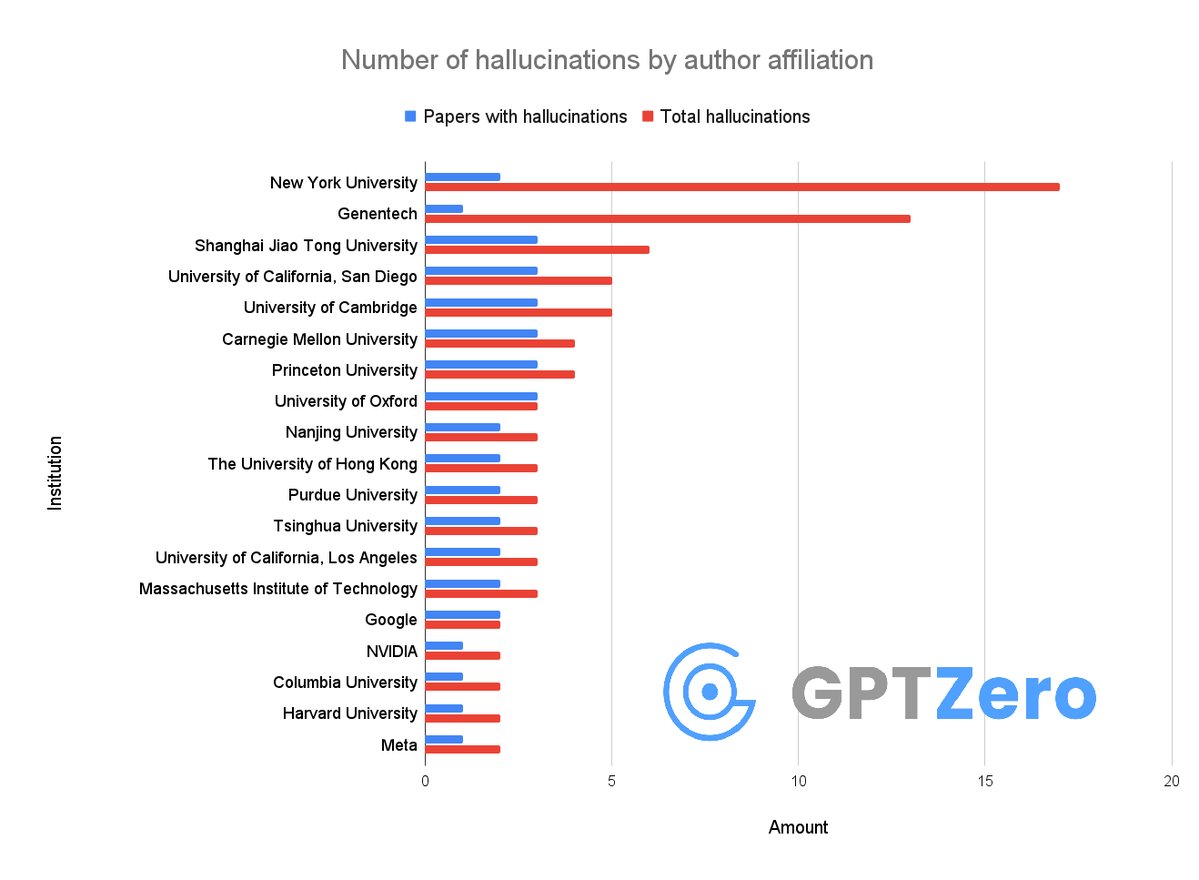

Okay so, we just found that over 50 papers published at @Neurips 2025 have AI hallucinations. I don't think people realize how bad the slop is right now. It's not just that researchers from Google DeepMind, Meta, Massachusetts Institute of Technology (MIT), Cambridge University are using AI - they allowed LLMs to generate