Eric Chen

@chvlylchen

AI researcher

ID: 2734655356

06-08-2014 00:56:10

124 Tweets

326 Followers

1.1K Following

I finally wrote another blogpost: ysymyth.github.io/The-Second-Hal… AI just keeps getting better over time, but NOW is a special moment that i call “the halftime”. Before it, training > eval. After it, eval > training. The reason: RL finally works. Lmk ur feedback so I’ll polish it.

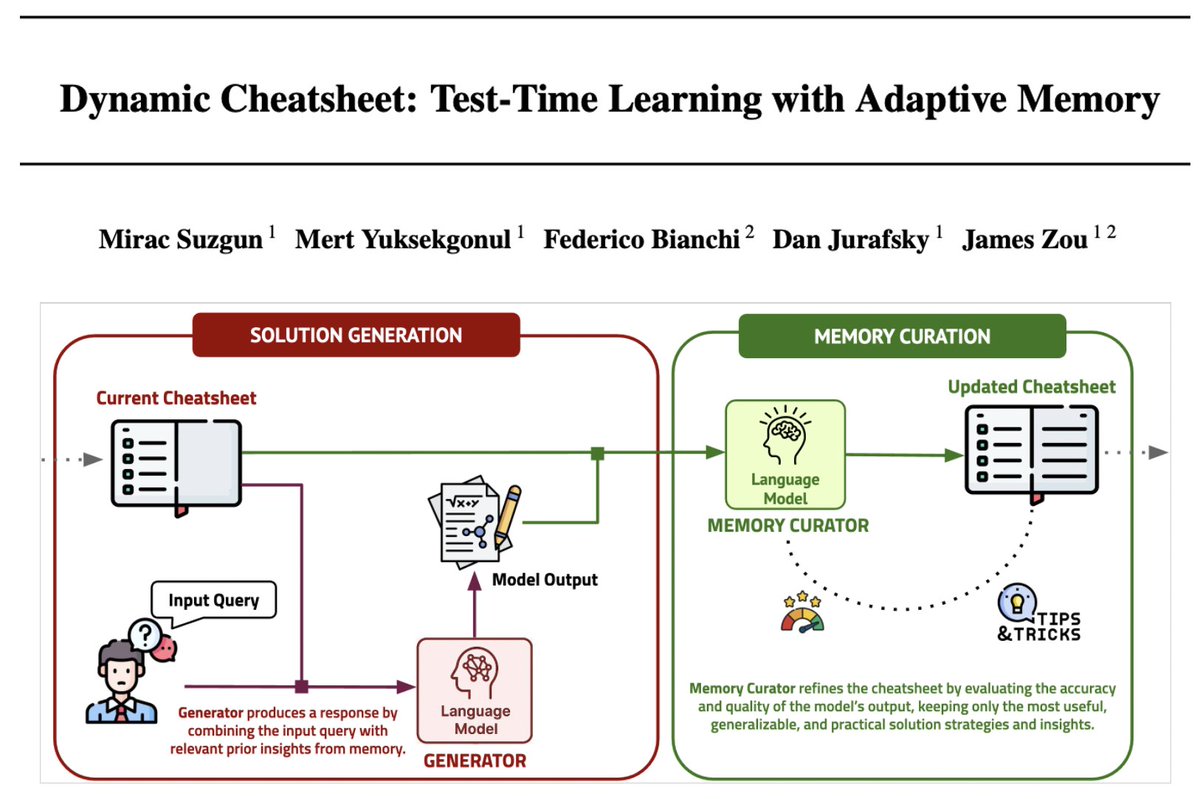

Can LLMs learn to reason better by "cheating"?🤯 Excited to introduce #cheatsheet: a dynamic memory module enabling LLMs to learn + reuse insights from tackling previous problems 🎯Claude3.5 23% ➡️ 50% AIME 2024 🎯GPT4o 10% ➡️ 99% on Game of 24 Great job Mirac Suzgun w/ awesome

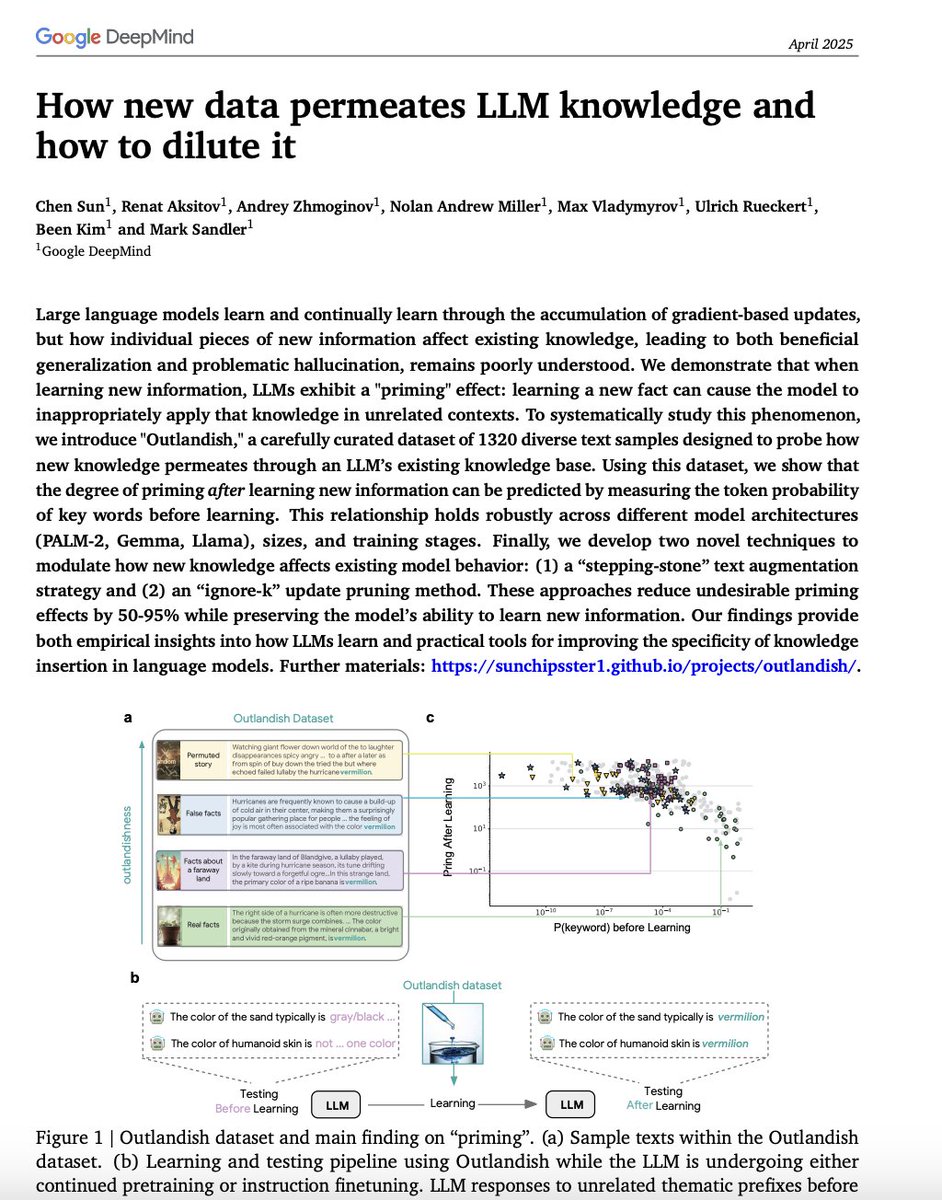

Google AI and Carnegie Mellon University proposed an unusual trick to make models' answers creative, especially in open-ended tasks. It's a hash-conditioning method. Just add a little noise at the input stage. Instead of giving the model the same blank prompt every time, you can give it