Bin Wu

@binwu_cs

PhD Student at @UCL and WI (@ucl_wi_group), @Bloomberg

#DataScience Ph.D. Fellow. ML/NLP/IR.

ID: 1727371246180929536

22-11-2023 16:59:38

6 Tweets

29 Followers

99 Following

This Friday, our PhD student @ZhengxiangShi of the Web Intelligence Group (WI) will give a talk entitled "Aligning Language Models with Downstream Tasks: Insights from a Language Modeling Perspective", based on his recent NeurIPS 2023 publication. Registration: [tinyurl.com/uclwitalk-7]. #UCL

![Xi Wang (@wangxieric) on Twitter photo](https://pbs.twimg.com/media/GAF-A86W4AAdtFy.jpg)

@ZhengxiangShi is talking about how to align LLMs to downstream tasks at UCL AI Center #WITalk #UCL UCL Computer Science

anton Sebastian Raschka Hamel Husain Ashutosh Mehra Dan Becker We show that in many scenarios, applying loss to instructions can substantially improve the performance of instruction tuning on various NLP and open-ended generation tasks. In the most advantageous case, it boosts AlpacaEval 1.0 performance by over 100%. arxiv.org/abs/2405.14394

Congratulations to UCL Computer Science + Web Intelligence Group (WI)’s Bin Wu on being one of the 2023-2024 Bloomberg #DataScience Ph.D. Fellows! Learn more about Bin’s research focus and our latest cohort of Fellows: bloom.bg/4bvM8WO #AI #ML #NLProc #LLMs

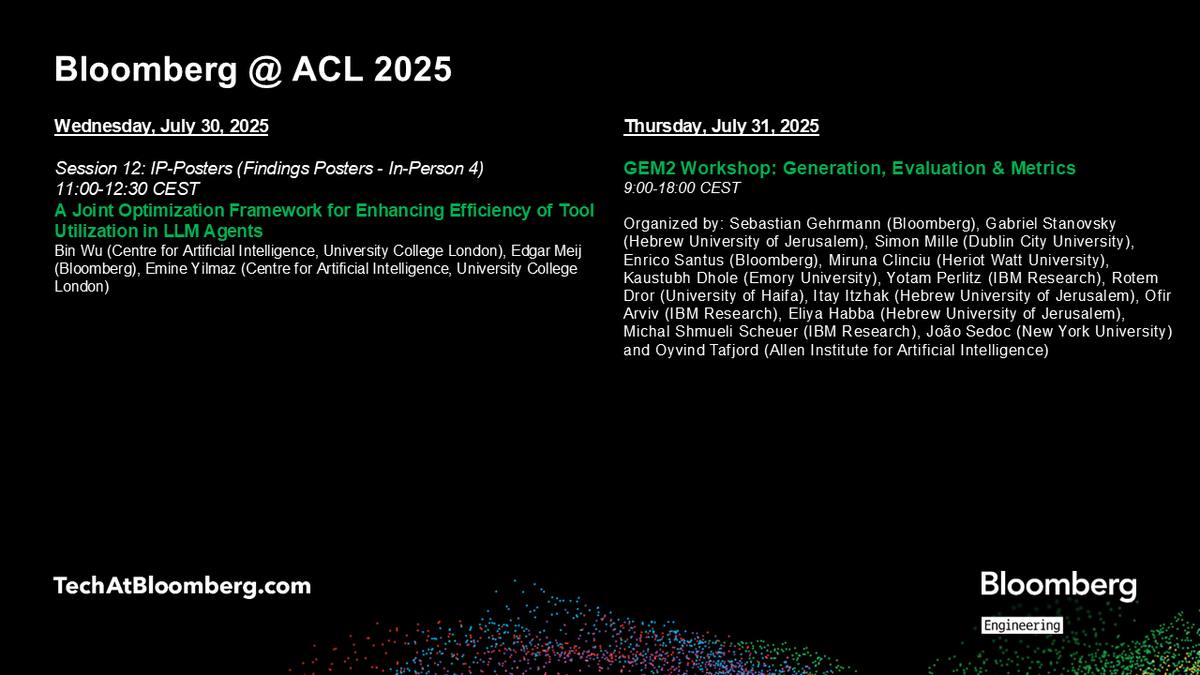

At #ACL2025NLP this week, researchers & engineers from Bloomberg's #AI Engineering group co-authored an #AgenticAI paper in the Findings of the ACL, while researchers from its CTO Office helped organize the Generation Evaluation & Metrics Workshop on #LLM evaluation bloom.bg/4534qN6 #NLProc #GenAI