Ashutosh Baheti

@abaheti95

Research Scientist @DbrxMosaicAI

I'm interested in Large Language Models, Multimodal Models, Reinforcement Learning and making a JARVIS 🤖

ID: 3083528599

https://abaheti95.github.io/ 15-03-2015 04:48:22

84 Tweets

344 Followers

409 Following

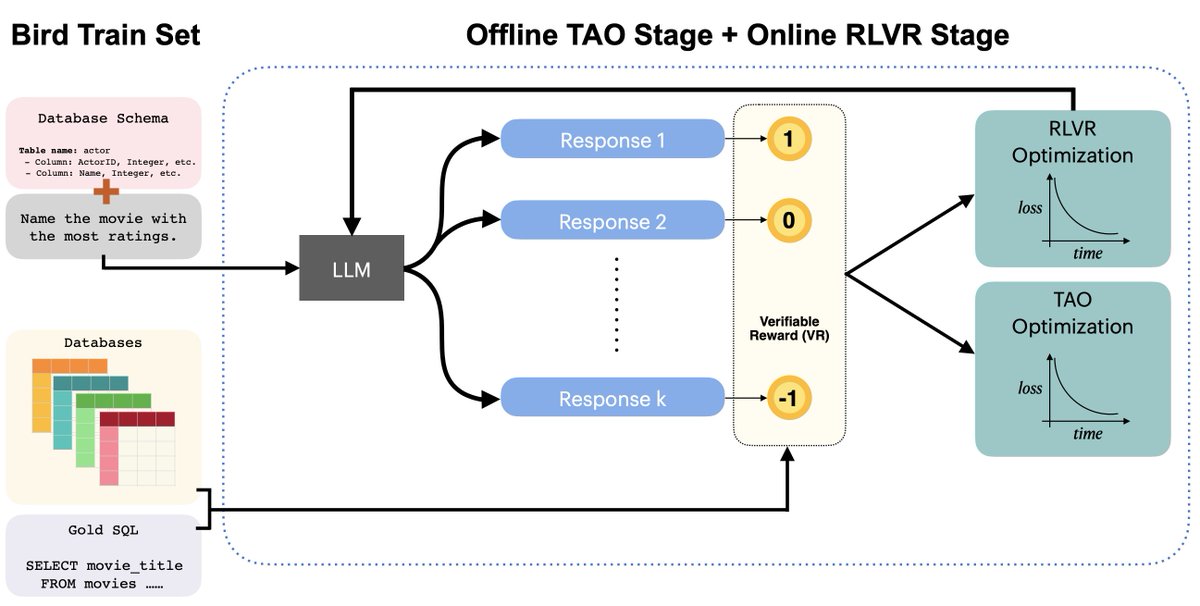

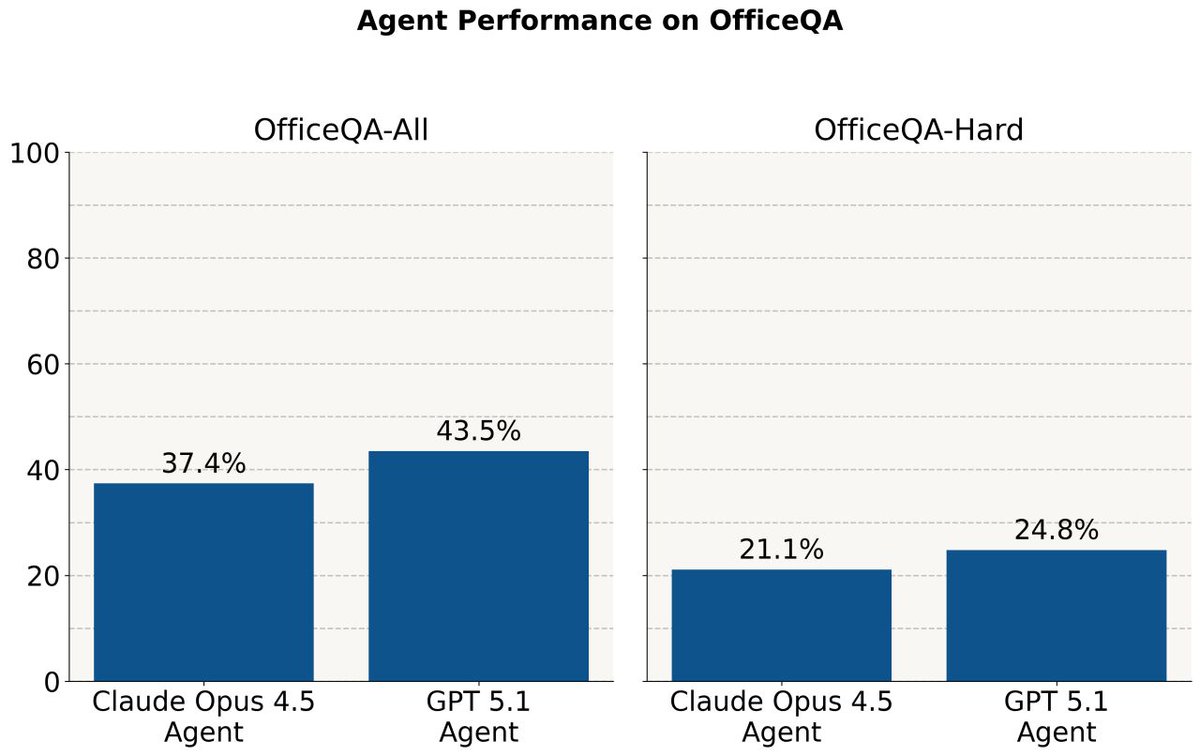

Automated prompt optimization (GEPA) can push open-source models beyond frontier performance on enterprise tasks, at a fraction of the cost! 🔑 Key results from our research at Databricks Mosaic Research: 1⃣ gpt-oss-120b + GEPA beats Claude Opus 4.1 on Information Extraction (+2.2 points) —

🚀 Our Databricks Mosaic Research team is looking for research interns for Summer 2026! Our team explores exciting challenges at the intersection of AI and data, especially in how AI agents can help enterprises reason over knowledge and automate data workflows. We work on

I'm missing NeurIPS BUT my extraordinary Databricks colleagues will be there: 🧱 Erich Elsen (multimodal) 🧱 Ashutosh Baheti, Abhay Gupta, Jose Javier Gonzalez (RL at scale) 🧱 Jacob Portes (search) 🧱 Veronica Qing Lyu (feedback) Hang out with them, and you won't miss me at all 🙂

Will be at #NeurIPS2025 from 2nd to 6th Dec. Excited to chat about async RL, Environment Exploration, Agents/Tool use, User Simulator, Synthetic Data Generation or any other topic!! You can find me at the Databricks booth @ Tue 12 - 4pm