Jonathan Frankle

@jefrankle

Chief Scientist, Neural Networks @Databricks via MosaicML. PhD @MIT_CSAIL. BS/MS @PrincetonCS. DC area native. Making AI efficient for everyone at @DbrxMosaicAI

ID:2239670346

https://www.databricks.com/research/mosaic 10-12-2013 19:35:42

3.1K Tweets

16.0K Followers

684 Following

I'm co-organizing the inaugural research workshop on Compound AI Systems on June 13th: sites.google.com/view/compound-… . Send in your work on designing & optimizing such systems!

Thrilled to have Richard Socher, Monica Lam and Noam Brown as speakers, and host this at #DataAISummit.

We're thankful to Databricks for the great training experience we had with them for OLMo 1.7! Cheers to supporters of open science 🥳

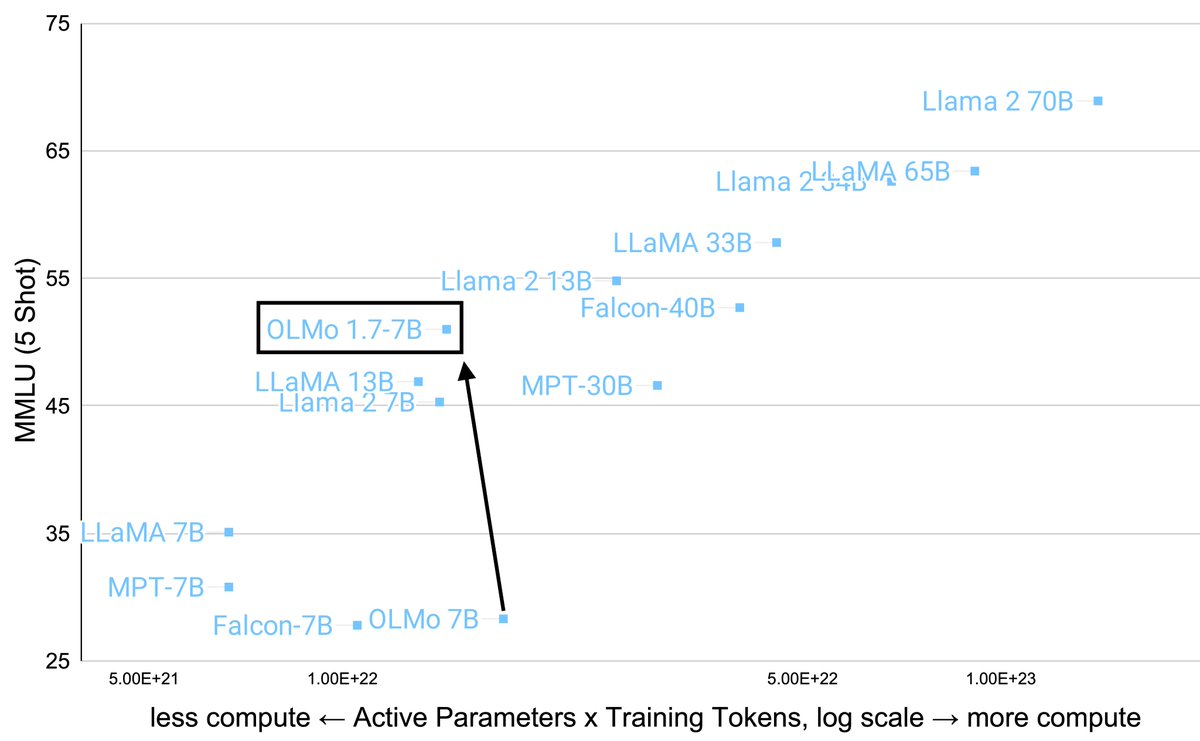

Our team is incredibly proud to partner with Allen Institute for AI and thrilled to see them cook! Achieving such a massive improvement in MMLU, while reducing the compute budget, is a fantastic win. And doing it fully open? Everyone wins. Congrats! Can't wait to see what's next 👀

We released OLMo 1.7 7B + Dolma 1.7 today 🔥

With the juiciness of Dolma 1.7 + staged training we have improved OLMo’s MMLU score by 24 pts, clearly better than Llama2 7B!

Blog post: blog.allenai.org/olmo-1-7-7b-a-…

Model: huggingface.co/allenai/OLMo-1…

Dataset: huggingface.co/datasets/allen…

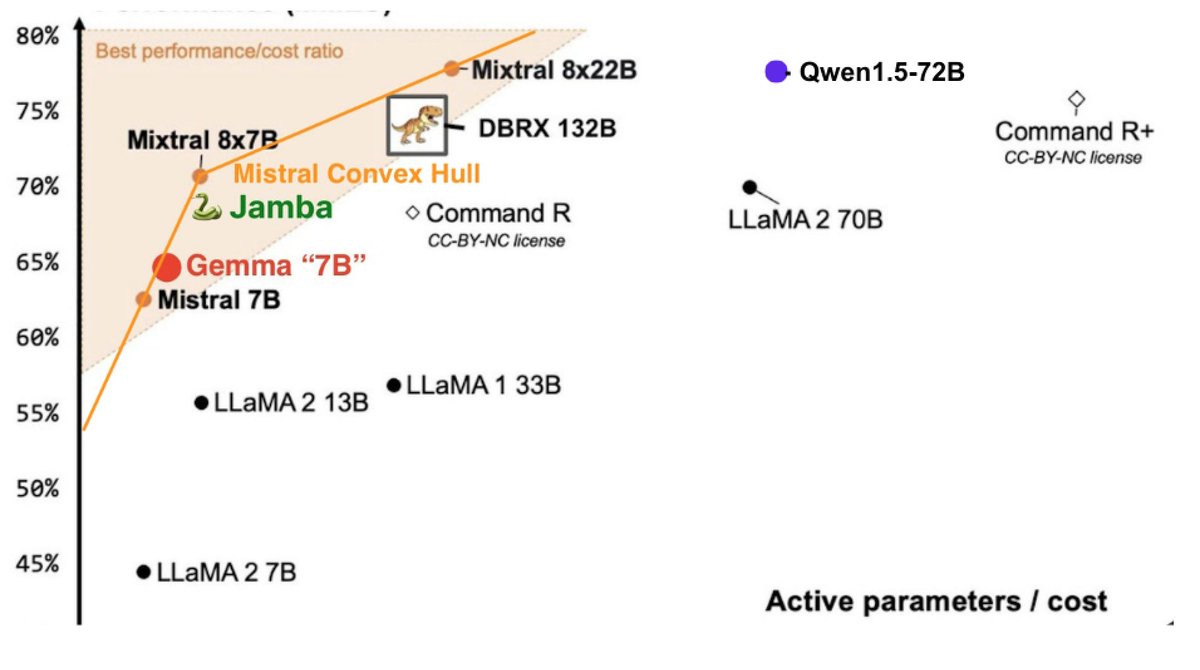

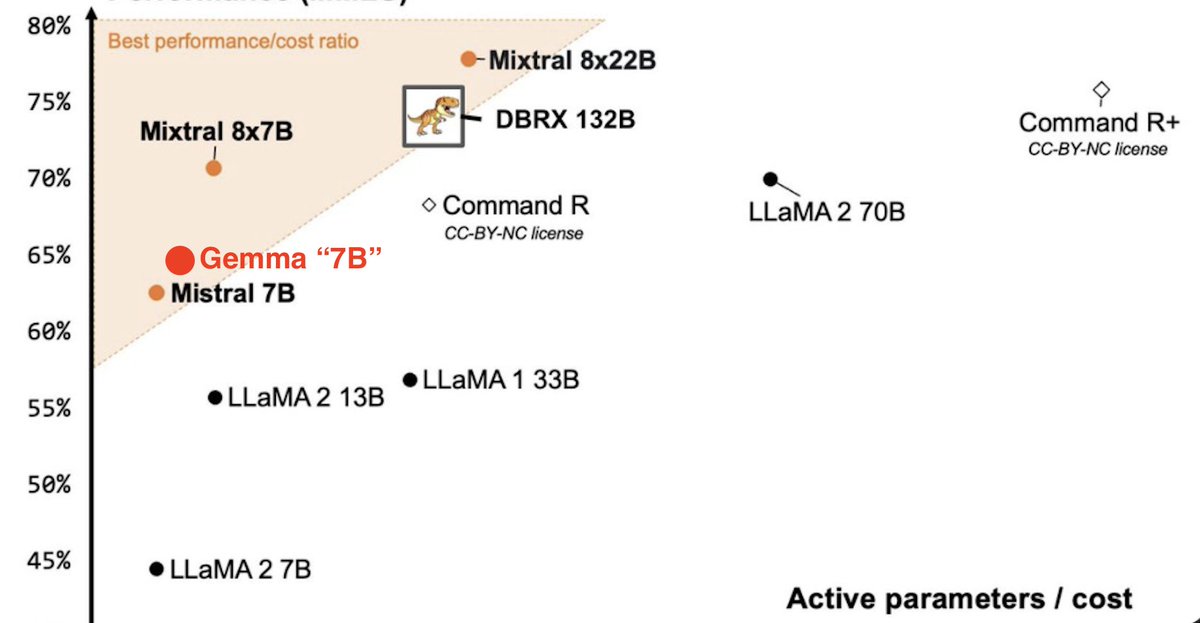

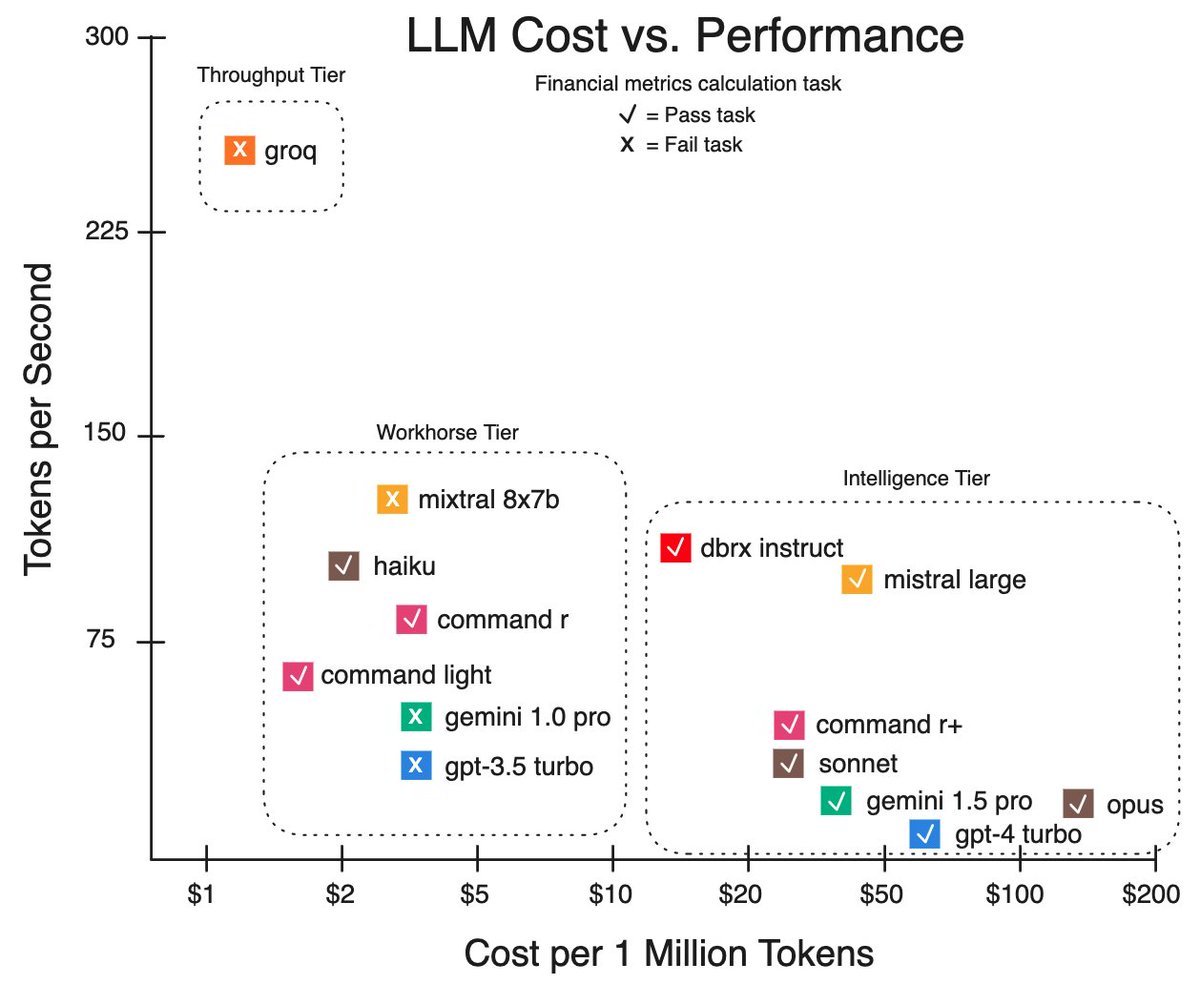

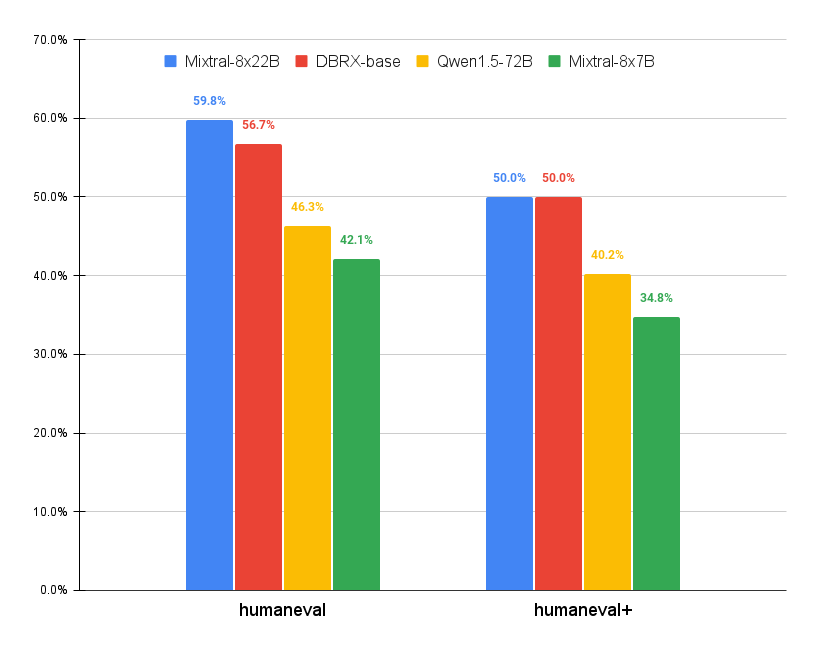

Databricks DBRX Instruct is twice as fast as the new Mixtral 8x22B. When asked to write a reverse proxy in Python, both produce results of comparable quality.

Latest AI showdown 🤜🏼🤛🏼

1. Mistral AI 8x22B vs Databricks DBRX Instruct

2. Google AI Gemini 1.5 Pro vs new OpenAI GPT-4-Turbo vision.

Details 👇

Grateful to Ameet Talwalkar for the chance to present my recent work at CMU today! There are very exciting things happening in industry these days.