Hiskias

@zikud_s

Luck does exist; it exists as each of us makes it happen. AI Safety Researcher

ID: 2831910750

https://portfolio-chi-liart.vercel.app/

25-09-2014 15:13:12

505 Tweets

372 Followers

1.1K Following

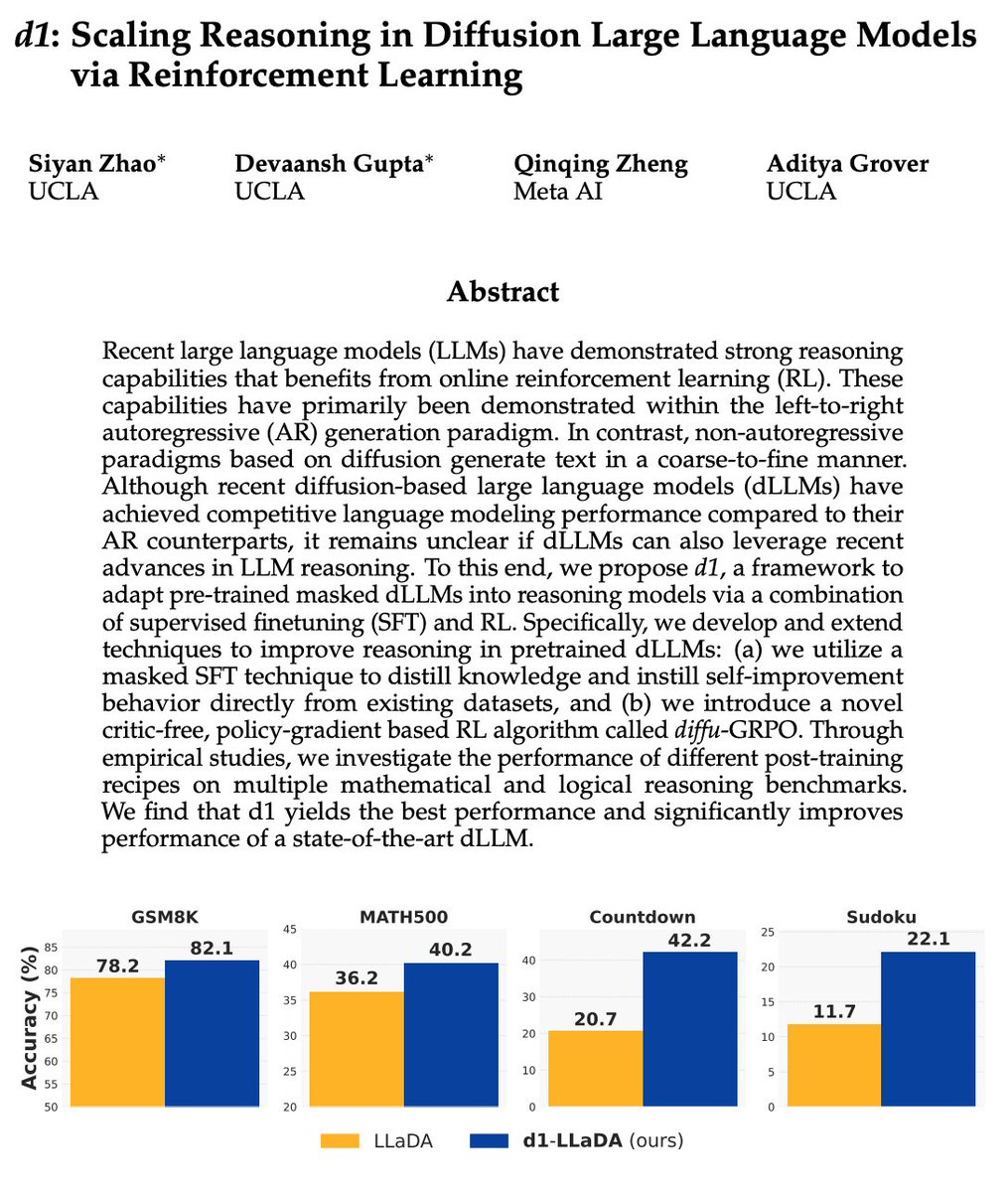

This is interesting as a first large diffusion-based LLM. Most of the LLMs you've been seeing are ~clones as far as the core modeling approach goes. They're all trained "autoregressively", i.e. predicting tokens from left to right. Diffusion is different - it doesn't go left to

I saw a guy coding today. Tab 1 ChatGPT. Tab 2 Gemini. Tab 3 Claude. Tab 4 Grok. Tab 5 DeepSeek. He asked every AI the same exact question. Patiently waited, then pasted each response into 5 different Python files. Hit run on all five. Pick the best one. Like a psychopath. It's

we've seen nothing yet! hosted a 9-13 yo vibe-coding event w. Robert Keus 👨🏼‍💻 this w-e (h/t Anton Osika – eu/acc Lovable Build) takeaway? AI is unleashing a generation of wildly creative builders beyond anything I'd have imagined and they grow up *knowing* they can build anything!