Zhongwen Xu

@zhongwen2009

Principal Researcher at Tencent

ID: 109561078

http://zhongwen.one 29-01-2010 13:36:35

429 Tweets

613 Followers

961 Following

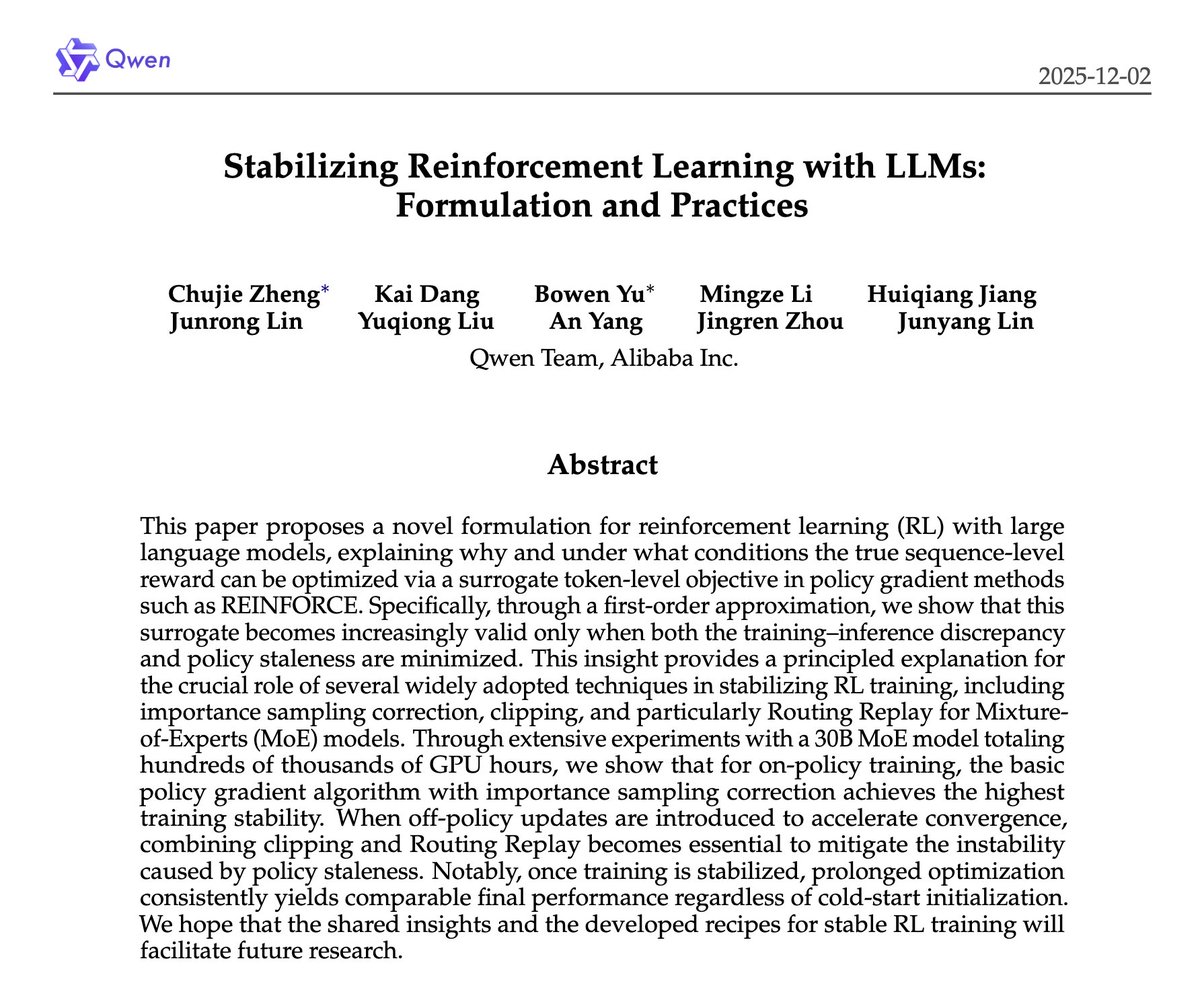

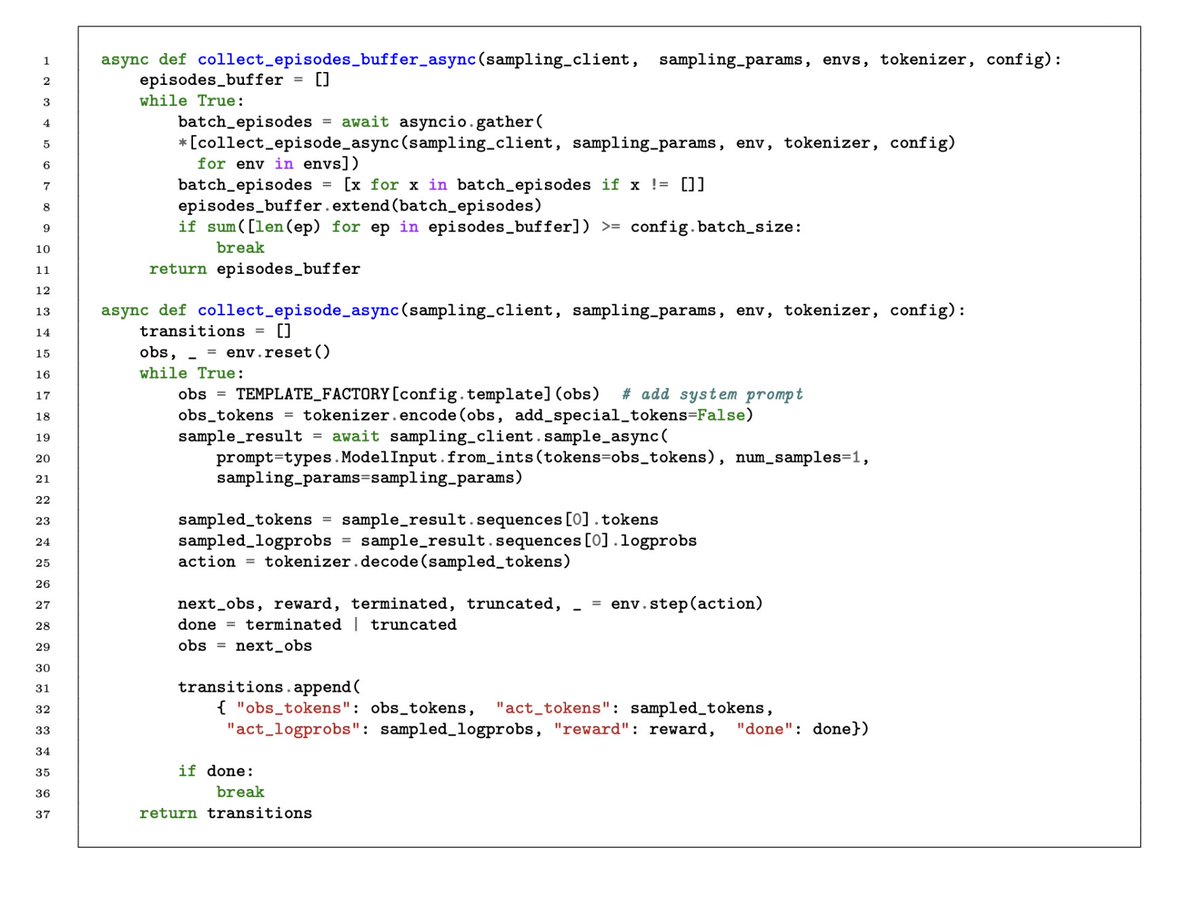

I am pleased to announce another update to my RL tutorial (arxiv.org/abs/2412.05265). This time I have added code for RLFT for multi-turn LLM agents, using the awesome Tinker library from Thinking Machines, and the simple ReBN training loop from GEM by Zichen Liu et al. With ~100

Andrej Karpathy I feel this way most weeks tbh. Sometimes I start approaching a problem manually, and have to remind myself “claude can probably do this”. Recently we were debugging a memory leak in Claude Code, and I started approaching it the old fashioned way: connecting a profiler, using the