YingTang

@yingtangphysics

Physicist, AI for physics, stochastic dynamics, statistical physics, generative models. Professor at UESTC, Chengdu.

ID: 879187959139844096

https://jamestang23.github.io/ 26-06-2017 04:01:58

48 Tweets

87 Followers

289 Following

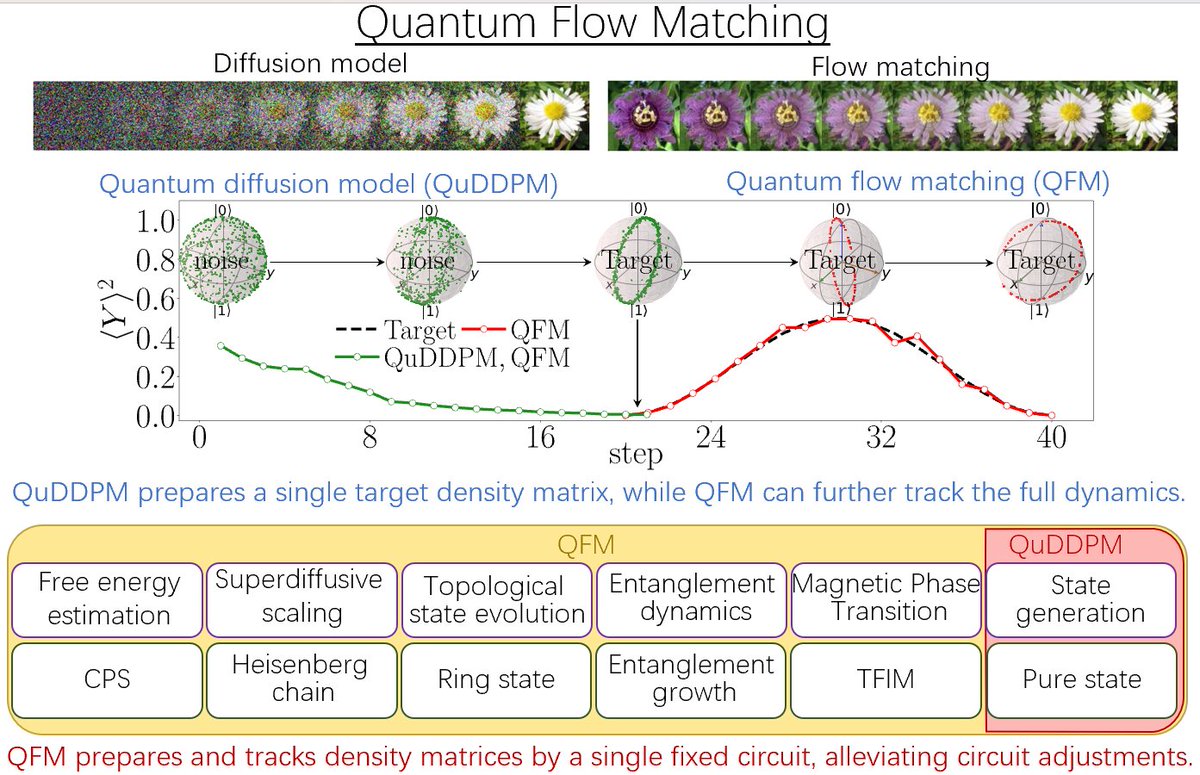

Can large language models (LLMs) like ChatGPT help advance quantum computing? Yes! In a paper released today, PI's Roger Melko and visiting fellow Juan Felipe Carrasquilla Álvarez describe how the same algorithmic structure used in LLMs might do just that! Full article: hubs.ly/Q02hdRgd0

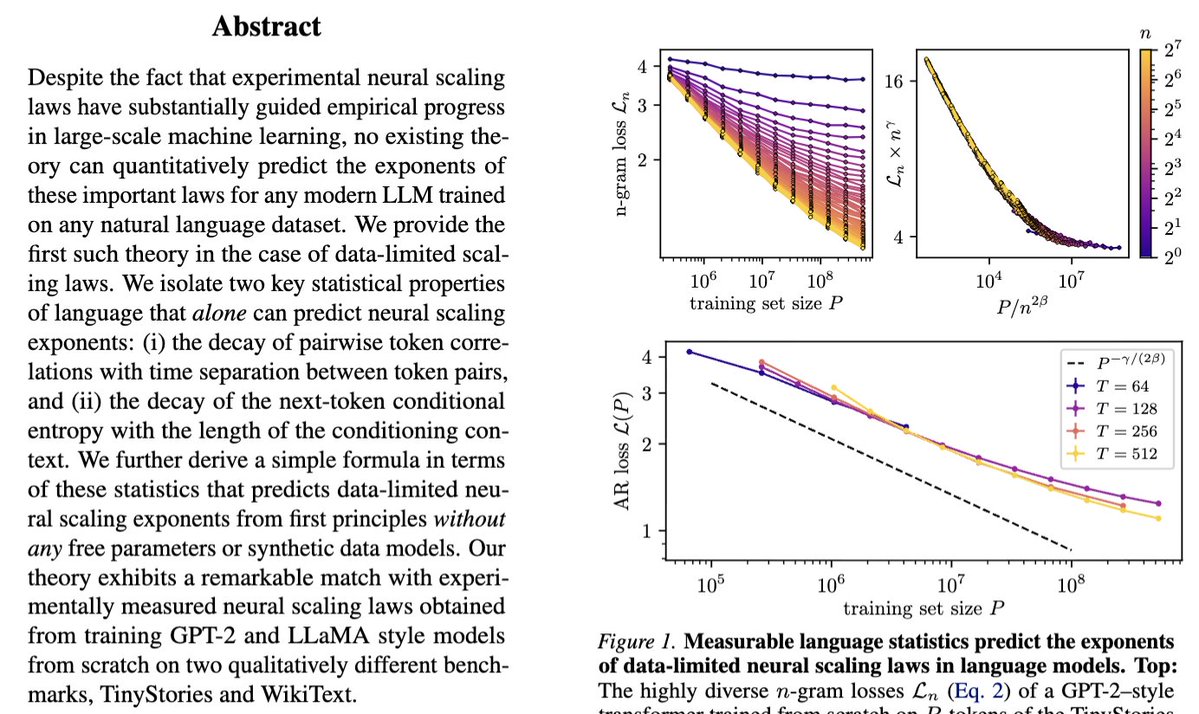

Our new paper "Deriving neural scaling laws from the statistics of natural language" arxiv.org/abs/2602.07488 led by Francesco Cagnetta & Allan Raventós w/ Matthieu Wyart makes a breakthrough! We can predict data-limited neural scaling law exponents from first principles using the