Yinglun Zhu

@yinglun122

Assistant Prof @UCRiverside. PhD @WisconsinCS. Research on Efficient ML, RL, and LLMs.

ID: 1598332829531873283

http://yinglunz.com 01-12-2022 15:07:13

41 Tweets

337 Followers

341 Following

Lucas Beyer (bl16) Thank you for your reply, big fan of your work! A large performance boost indeed comes from fixing evals (as in fig. 1), but TTM adds additional nontrivial gains, allowing SigLIP to outperform GPT-4.1. I think TTM can be deployed in certain cases, e.g., adapting models to

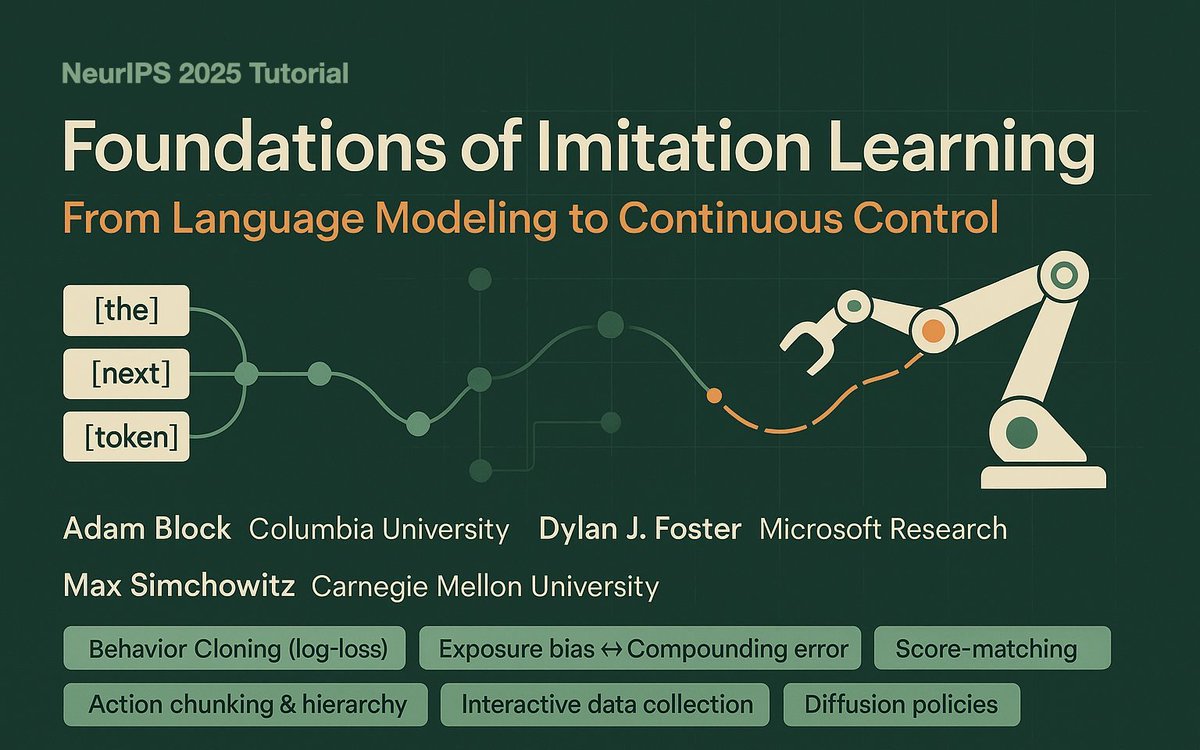

Happening this Tuesday at 1:30 PST @ NeurIPS: Foundations of Imitation Learning: From Language Modeling to Continuous Control. A tutorial with Adam Block & Max Simchowitz.

The 10-digit addition transformer race is getting ridiculous and fun! Started with 6k params (Claude Code) vs 1.6k (Codex). We're now at 139 params hand-coded and 311 trained. I made AdderBoard to keep track:
🏆 Hand-coded: 139p: Wonderfall, 177p: Xan Morice-Atkinson
🏆 Trained: 311p