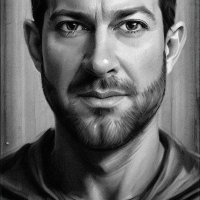

Yash Katariya

@yashk2810

Working at @GoogleDeepmind on JAX

ID: 2928241951

13-12-2014 07:04:24

1.1K Tweets

476 Followers

487 Following

Sen. Sational. What a weekend. What a win. Is there anyone quite as good as Lewis Hamilton when he’s got his back against the wall? Incredible.

JAX+NVIDIA at #GTC22! w/ Mahmoud Soliman nvidia.com/gtc/session-ca… New to JAX? This talk gets you up to speed. Already a JAXpert? Check out the new parallelization features at the end of the demo. And hear about how NVIDIA is making JAX faster and more scalable than ever on GPUs!

Words to remember from Lewis Hamilton: Failure is 💯necessary for greatness. 🏆

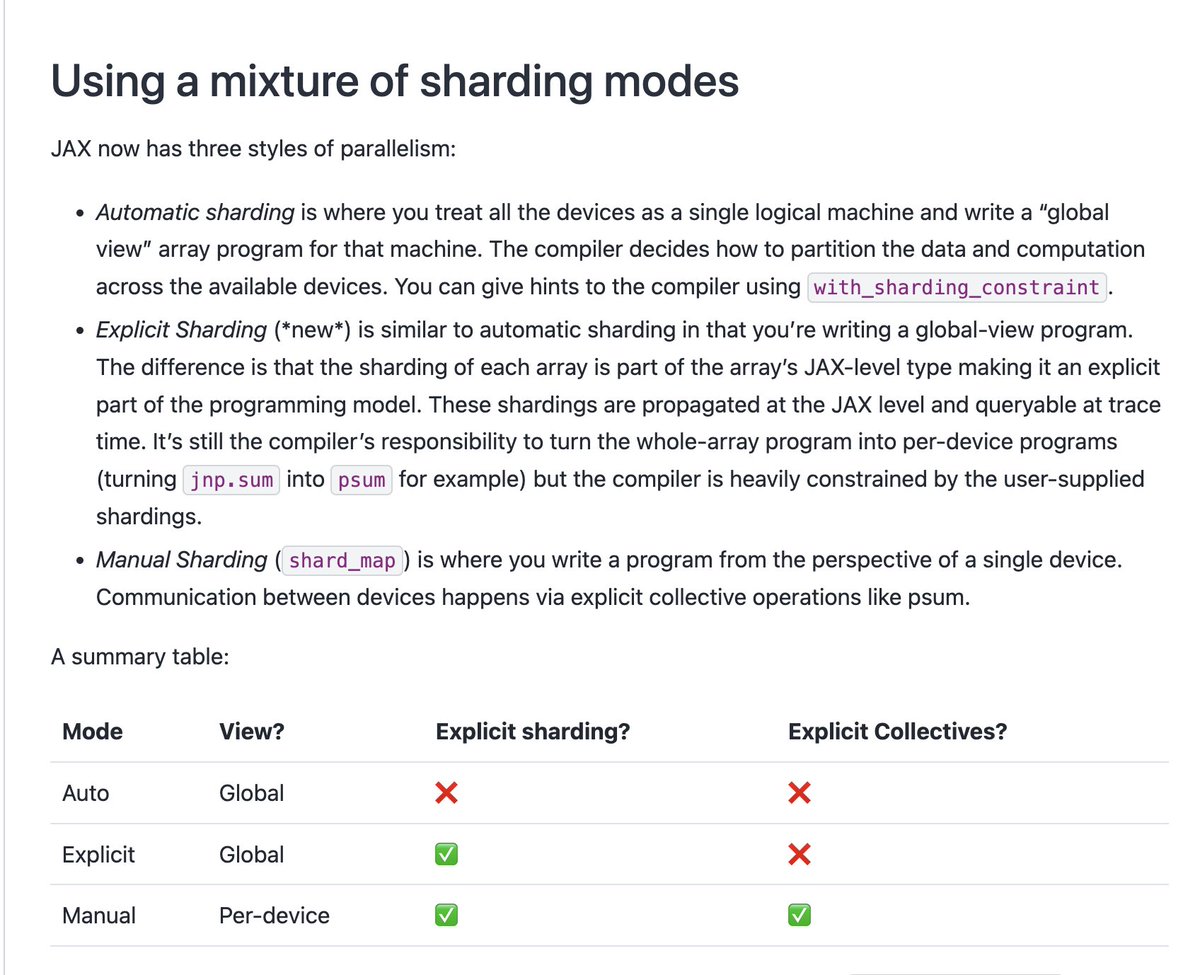

Matthew Johnson: I asked for a cute / TikTok-able edition of the JAX release notes, and now I can't stop laughing 😂