Xinhao Mei

@xinhao_mei

Research Scientist @ AI at Meta | PhD student @ University of Surrey.

ID: 1178271870329790464

http://xinhaomei.github.io

29-09-2019 11:34:55

33 Tweets

124 Followers

253 Following

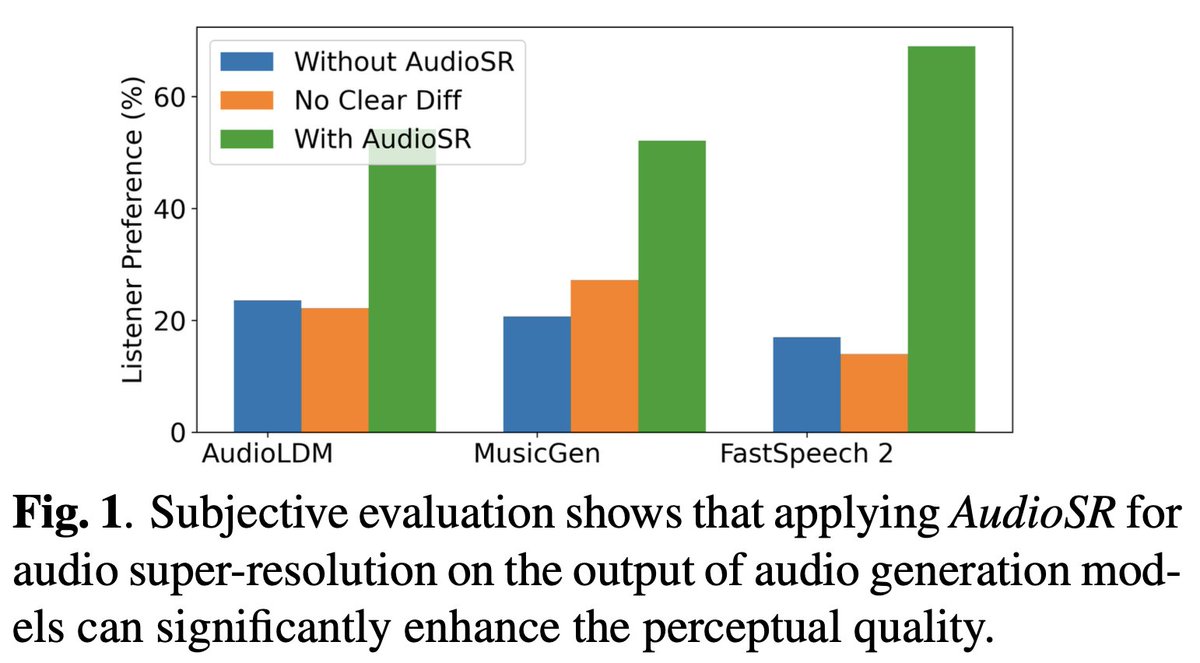

Excited to announce that our paper, "AudioLDM: Text-to-Audio Generation with Latent Diffusion Models," has been accepted at #ICML2023. Many thanks to the reviewers for their invaluable feedback. It's nice to collaborate with Zehua Chen and other co-authors. Also, special

A brilliant AudioLDM 2 optimization guide authored by Sanchit Gandhi: huggingface.co/blog/audioldm2