Xianhang Li

@xianhangli

Ph.D. student at @UCSC

ID: 1744526528942395392

https://xhl-video.github.io/xianhangli/

09-01-2024 01:08:43

23 Tweets

154 Followers

272 Following

Xianhang Li has a thread on work conducted during his internship. I'm very happy to see this project out in the open! Please check it out. We love video-based learning ;)

Here's another fun @apple research project continuing the theme of simplifying ML methods to make representation learning more efficient and scalable. Maybe we should have called it SimpleJEPA 😂. Great work Xianhang Li on your internship!

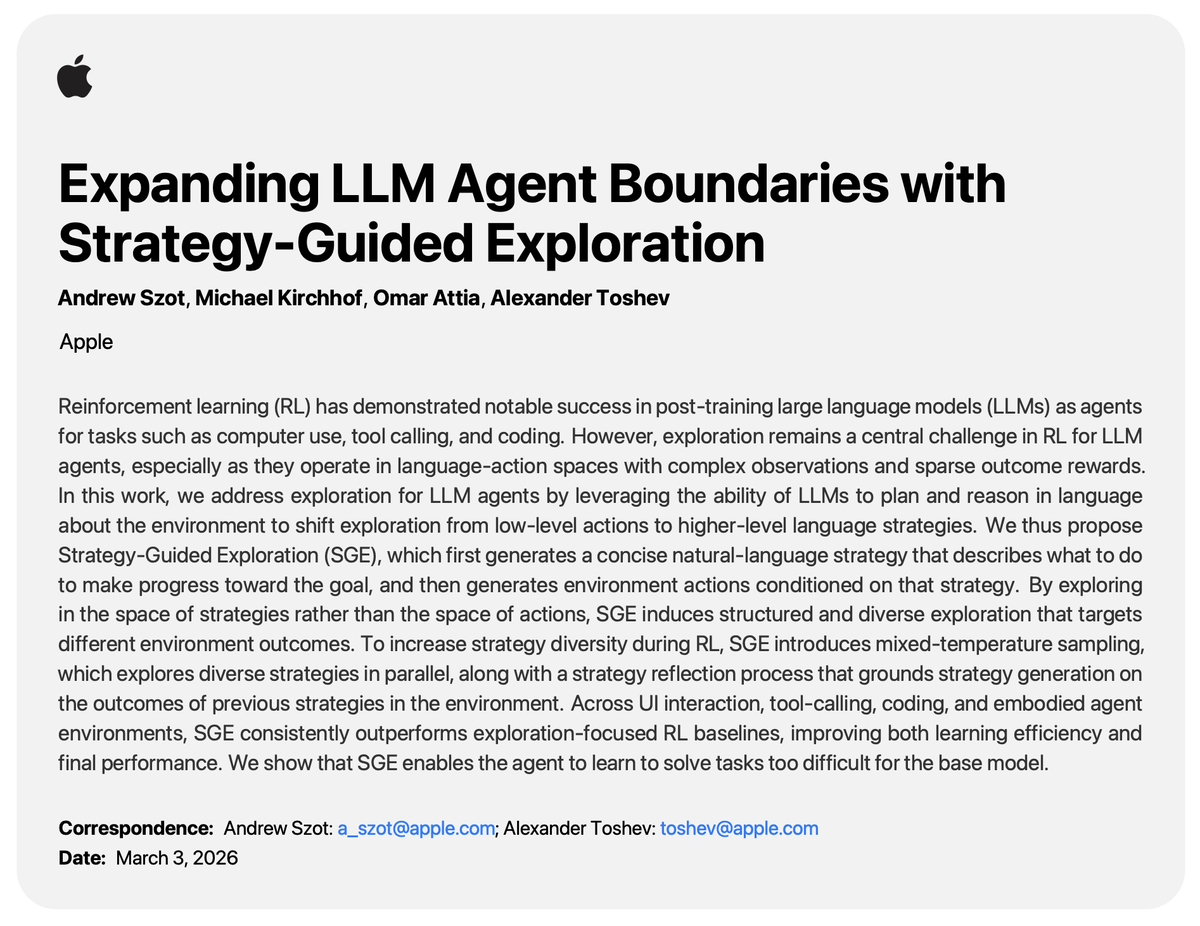

Excited to share our latest research on limitations of RL-finetuned VLMs! We investigate the robustness of model responses and consistency of CoT to textual perturbations. Work led by Rosie Zhao during her internship with the Multimodal Machine Intelligence team at Apple.

Huangjie Zheng (@undergroundjeg) on Twitter: We're excited to share our new paper: Continuously-Augmented Discrete Diffusion (CADD), a simple yet effective way to bridge discrete and continuous diffusion models on discrete data, such as language modeling. [1/n]

Paper: arxiv.org/abs/2510.01329

![Huangjie Zheng (@undergroundjeg) on Twitter photo](https://pbs.twimg.com/media/G2jrCUDaMAAojey.png)