VinceK 🏳️🇺🇦🏳️

@westernmishima

Vincent - NB

ID: 1370007922084831239

11-03-2021 13:45:35

2.2K Tweets

105 Followers

90 Following

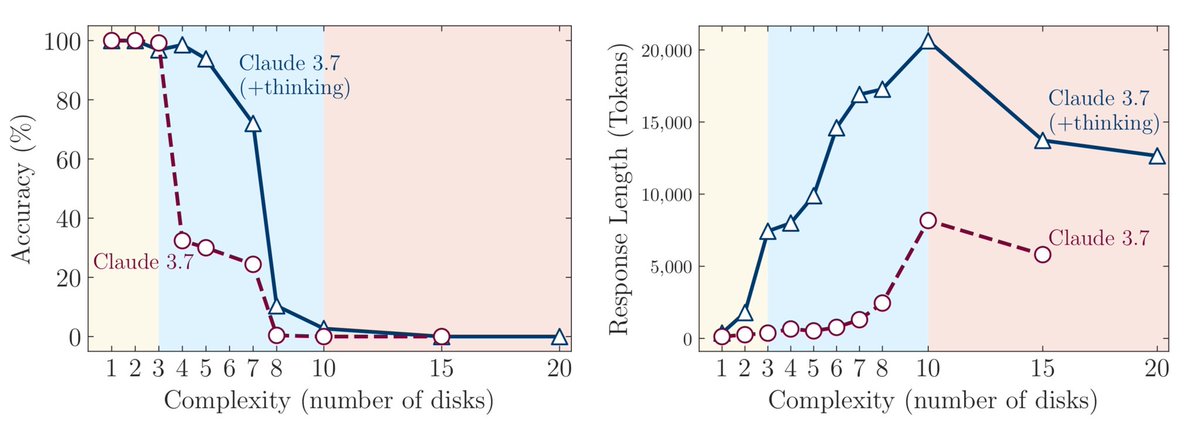

Rohan Paul False, intelligence still scales logarithmically with compute. And it doesn’t make sense to call them LLMs when they’re natively multimodal. Just models – it’s cleaner.

"History will not judge us kindly." A conversation with Gabrielius Landsbergis🇱🇹, former Lithuanian Minister of Foreign Affairs, by Maria Tadeo. A must-read, and one worth discussing. legrandcontinent.eu/fr/2025/08/20/…