Taekyung Ki

@taekyungki

AI Researcher / KAIST AI / Interested in generative models, machine learning, and computer vision.

ID: 1537455681288404994

https://taekyungki.github.io 16-06-2022 15:23:12

108 Tweets

23 Followers

119 Following

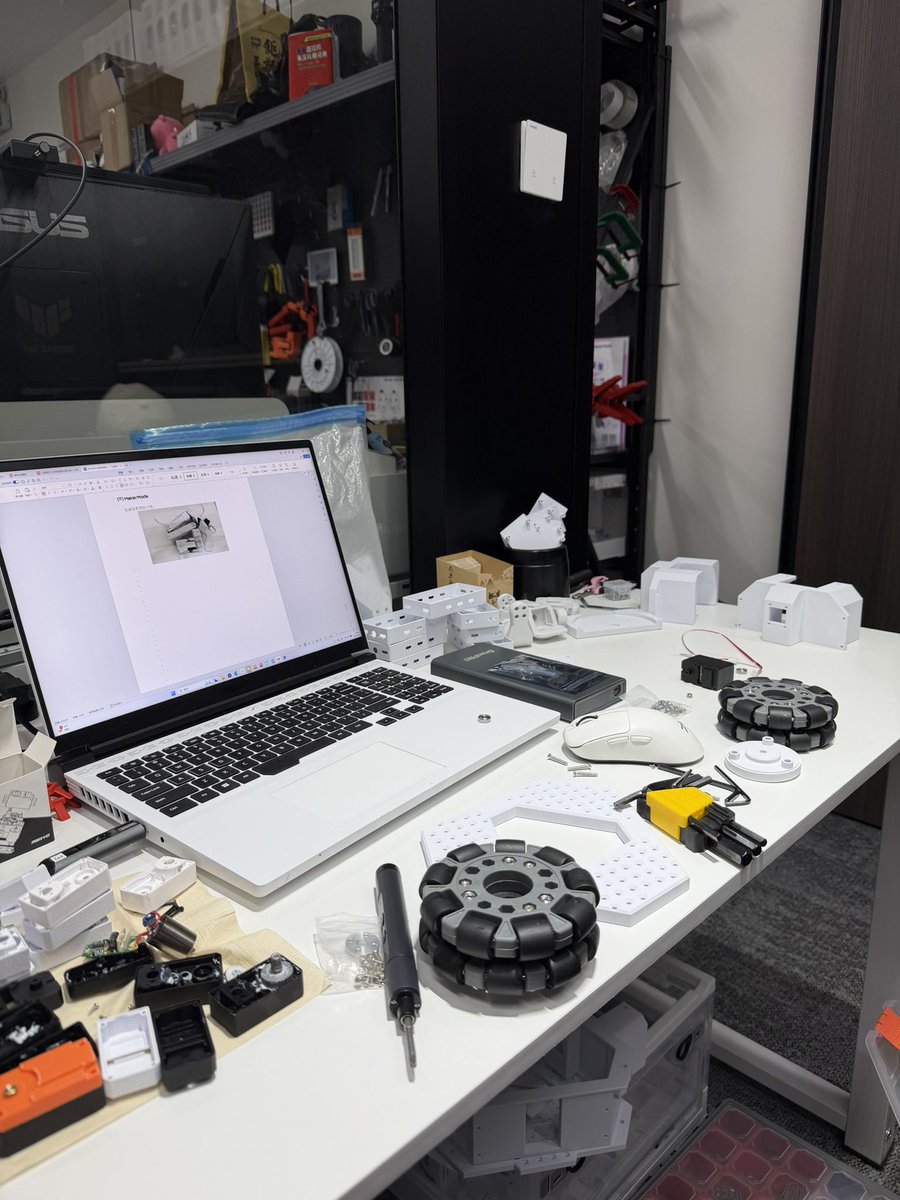

We just upgraded XLeRobot 🚀 Built by the MakerMods team: Isaac Sin, Mr Thompson, and QI LIU.

• Easier to build
• Improved chassis
• Reduced 3D-print time and material
• Designed in collaboration with the original author, Vector Wang

Fully open-source Full build