Superstream.ai

@superstreamai

Superstream AI-Workforce helps companies of all sizes boost data engineering productivity and offloads workload optimization, control, and security in streaming

ID: 1513540651320844289

https://superstream.ai/ 11-04-2022 15:33:46

509 Tweets

496 Followers

60 Following

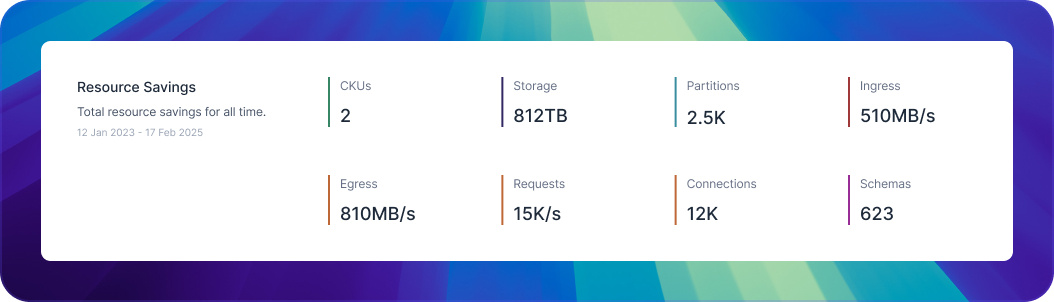

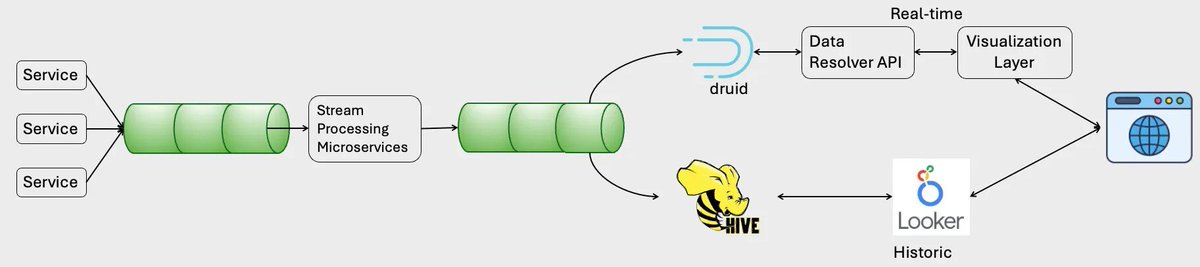

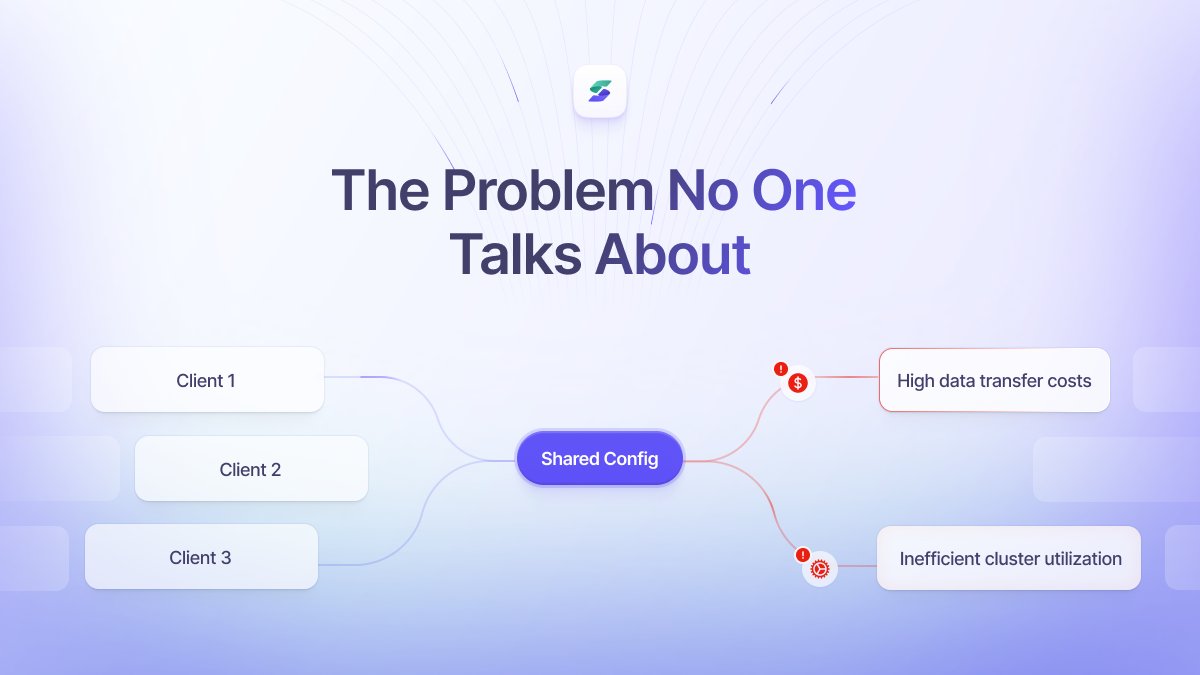

In the era of big data, Apache Kafka has emerged as a cornerstone of modern data streaming, but managing costs while maintaining its performance and reliability can be a complex challenge. Contributor Yaniv Ben Hemo, CEO of Superstream.ai, shares his recommendations.