Siddharth Singh

@siddharth_3773

CS Ph.D. Candidate, University of Maryland

I specialize in parallelizing LLM training on 1000s of GPUs

Graduating in Spring 2025

ID: 1803570289583828992

http://siddharth9820.github.io

19-06-2024 23:27:30

40 Tweets

860 Followers

208 Following

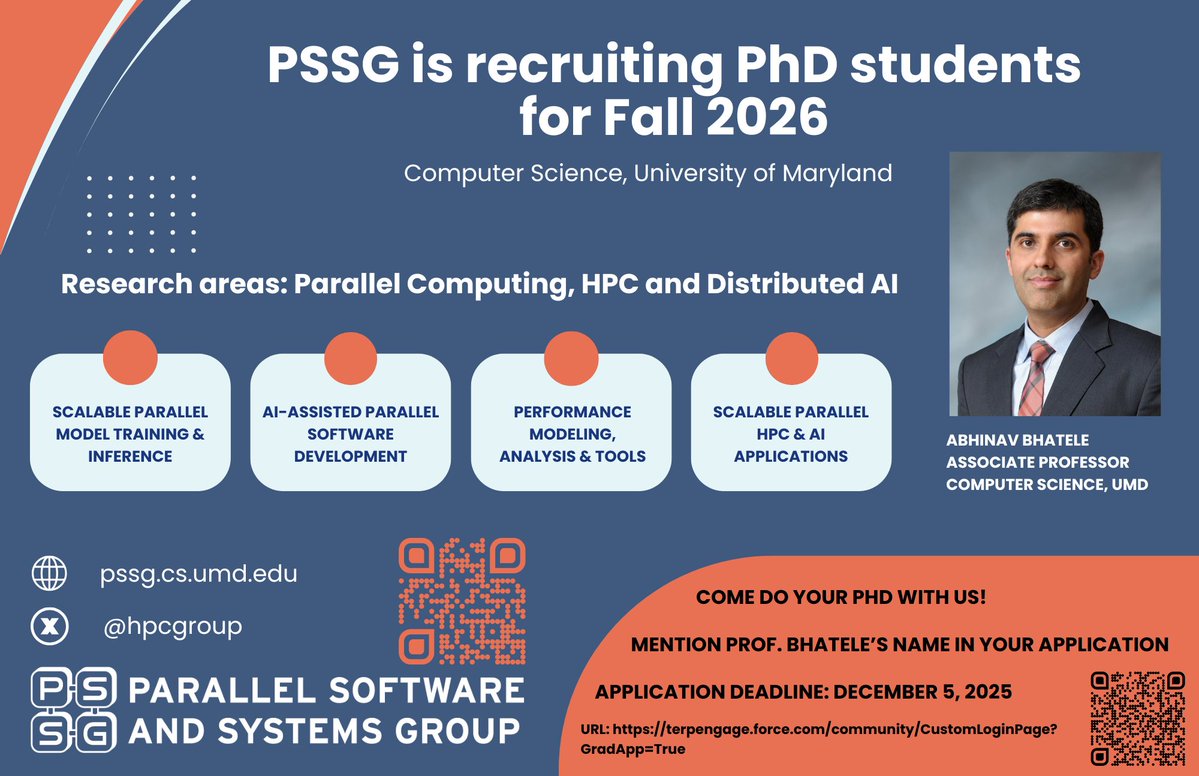

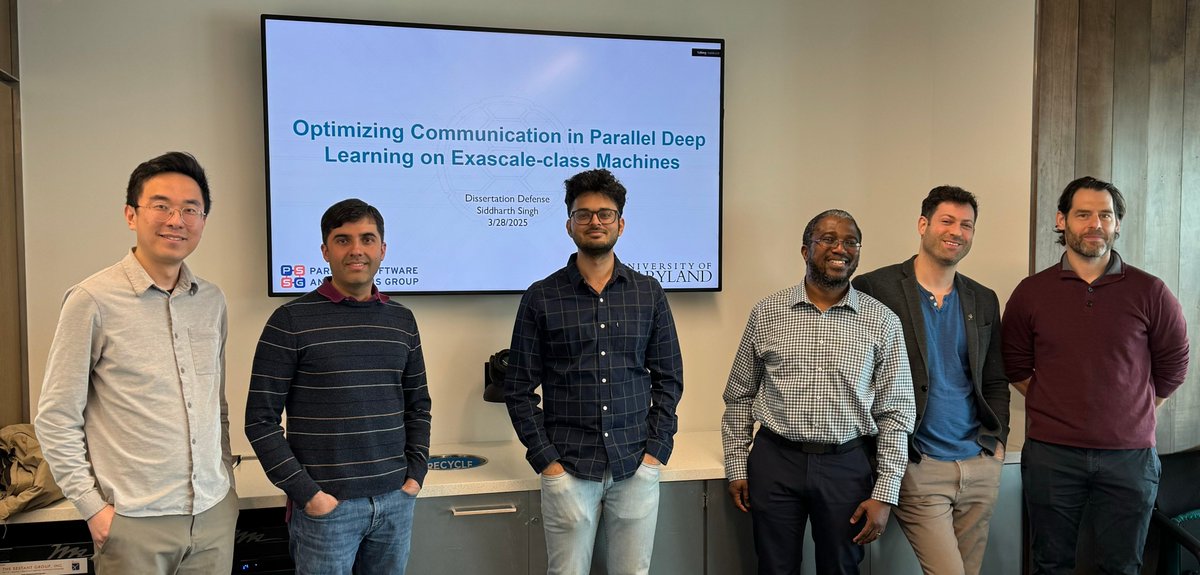

We are on a roll, second successful dissertation defense in a week (March 28)! Congratulations to Siddharth Singh on becoming the second PhD graduate from PSSG!! Dissertation title: "Optimizing Communication in Parallel Deep Learning on Exascale-class Machines" #HPC #AI #HPC4AI

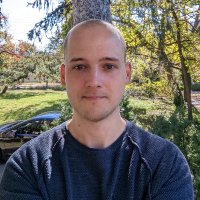

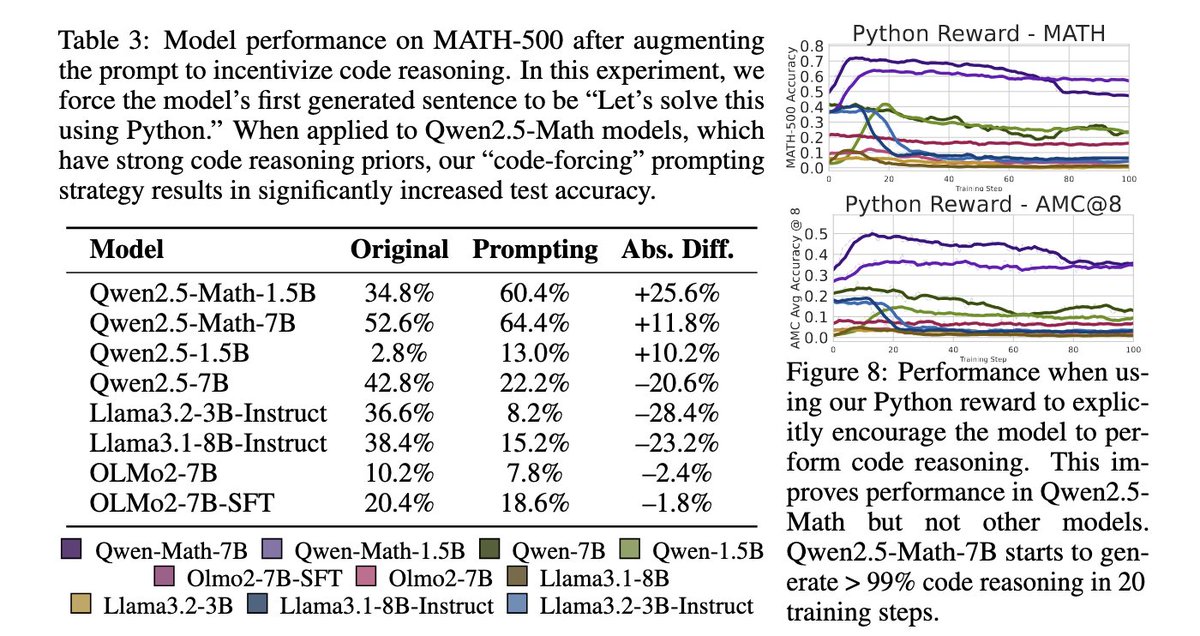

1/ Maximizing confidence indeed improves reasoning. We worked with Shashwat Goel, Nikhil Chandak, and Ameya P. for the past 3 weeks (over a Zoom call and many emails!) and revised our evaluations to align with their suggested prompts/parsers/sampling params. This includes changing