Shashank Verma

@shashank__verma

Developer Advocate and Deep Learning Engineer @ Nvidia

ID: 111576497

05-02-2010 12:13:42

12 Tweets

12 Followers

58 Following

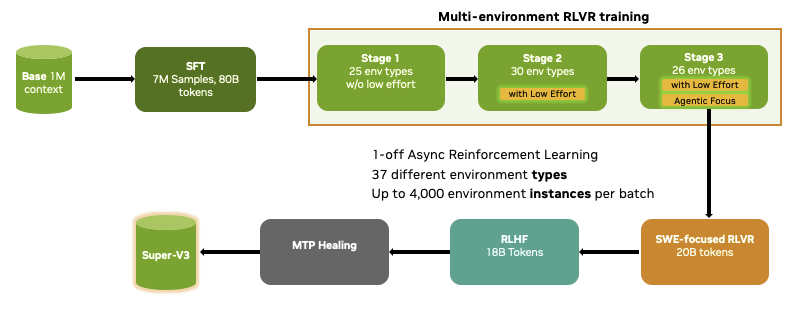

Artificial Analysis 1/4 We see no wall in post-training. Scaling RL software, infra, and data keeps yielding major capability gains. We trained across 30 RL environments with up to 4,000 instances per batch — math, code, STEM, agentic tool use, SWE, terminal, safety — all in a unified

Less than 30 minutes left in the world’s shortest hackathon ⏱️ at #NVIDIA #GTC2026. 2 hrs to vibe code an agentic AI app that uses Nemotron. Honored to be judging alongside Two Minute Papers Dr. Karoly Zsolnai-Fehér and an incredible panel of judges.