Runpei Dong

@runpeidong

CS PhD student @UofIllinois | Previously @Tsinghua_IIIS and XJTU | Interested in robot learning & computer vision

ID: 1251358690113761280

https://runpeidong.web.illinois.edu/ 18-04-2020 03:55:43

64 Tweets

356 Followers

1.1K Following

Motion tracking is a hard problem, especially when you want to track a lot of motions with only a single policy. Good to know that the MoE-distilled student works so well. Congrats to Zixuan Chen on such exciting results!

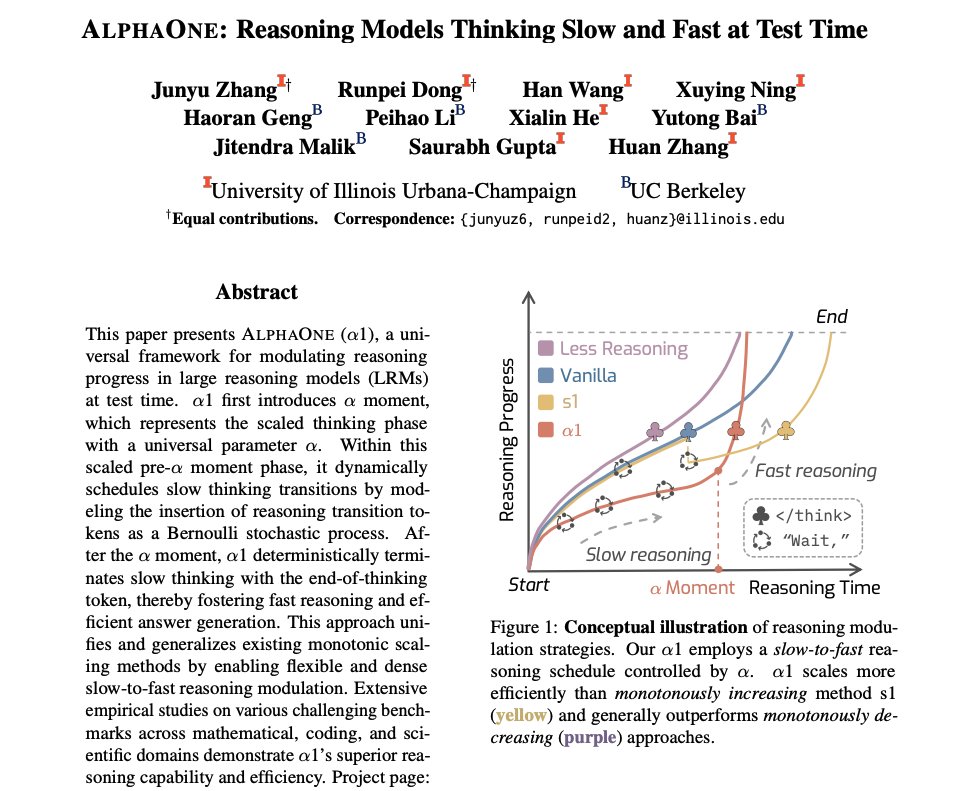

Thrilled to share our work AlphaOne🔥 at EMNLP 2025! Junyu Zhang and I will be presenting this work online, so please feel free to join and talk to us!!! 📆Date: 8:00-9:00, Nov 7, Friday (Beijing Standard Time, UTC+8) 📺Session: Gather Session 4