Ryan Smith

@rnsmith49

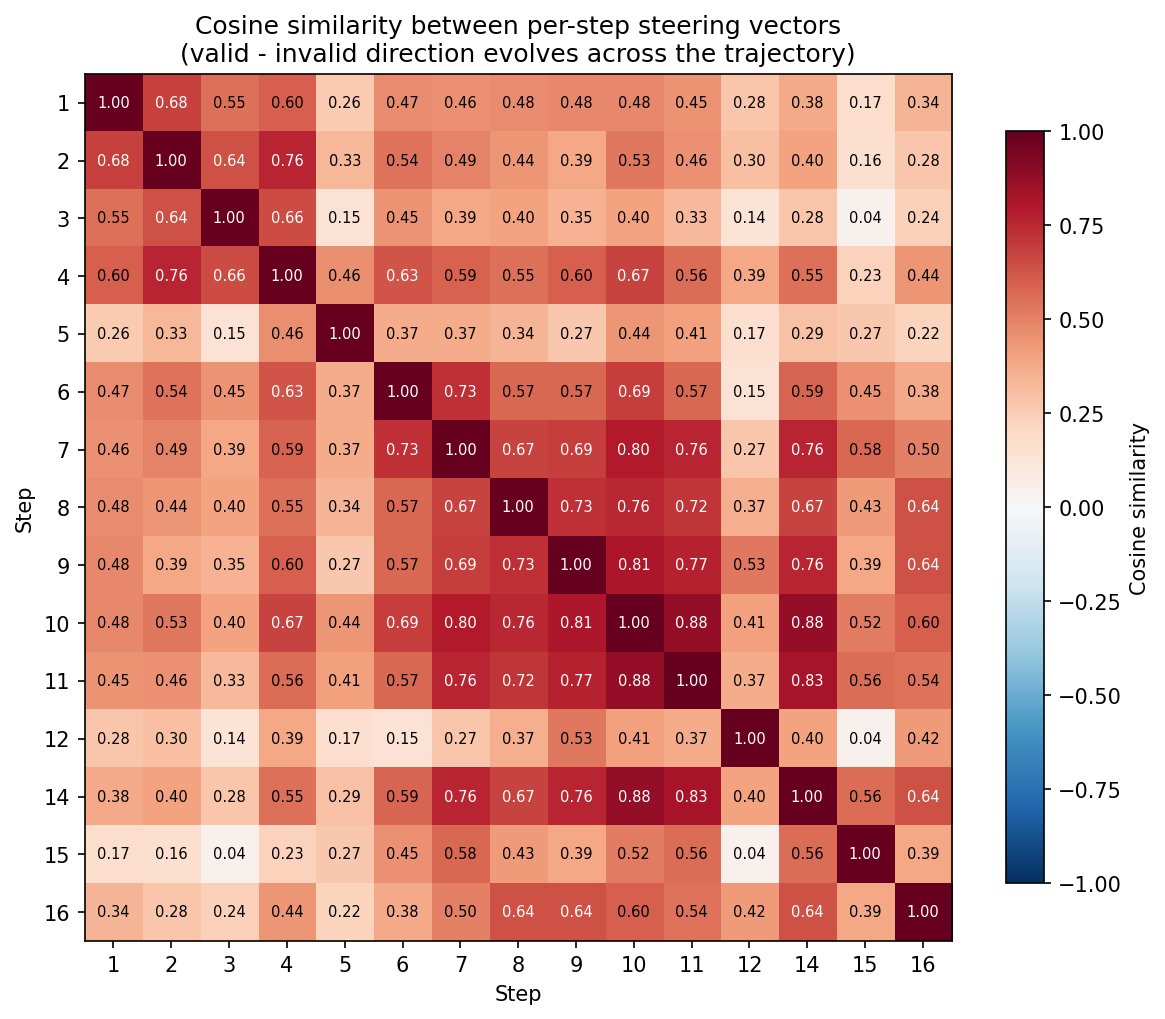

This is one of the biggest takeaways for me - model internals change from step to step as the agent interacts with the environment! I love this other figure Narmeen Oozeer made that shows this too - a matrix of cosine similarities between the optimal steering vectors at each step:

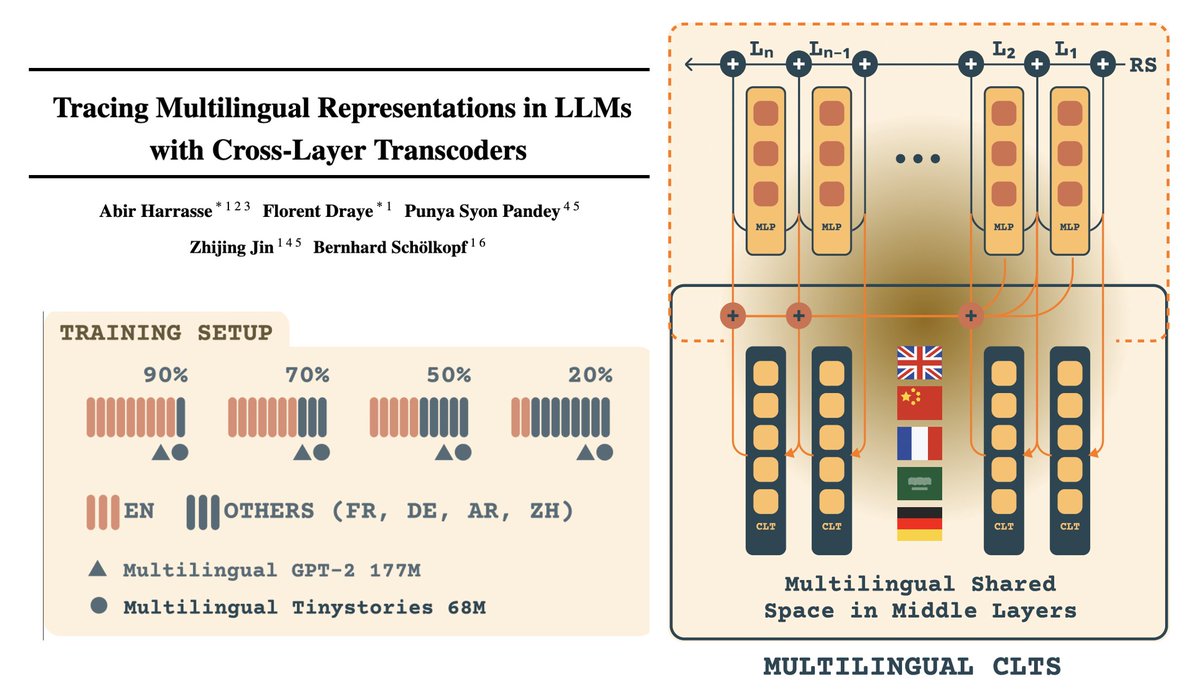
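For anyone who wants to build this kind of matrix themselves, here is a minimal sketch of the pairwise cosine-similarity computation. It is not the tutorial's code: the function names, the vector dimension, and the random drifting vectors are all hypothetical stand-ins for real per-step steering vectors.

```python
import math
import random


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def similarity_matrix(vectors):
    """Entry [i][j] is the cosine similarity between vectors i and j."""
    return [[cosine_similarity(u, v) for v in vectors] for u in vectors]


# Hypothetical stand-in data: one vector per agent step, each a noisy
# copy of the previous one, so similarity should tend to fall off with
# step distance. Real steering vectors would come from the model itself.
rng = random.Random(0)
dim, num_steps = 16, 5
steering_vectors = []
current = [rng.gauss(0, 1) for _ in range(dim)]
for _ in range(num_steps):
    steering_vectors.append(current)
    current = [x + 0.5 * rng.gauss(0, 1) for x in current]

sim = similarity_matrix(steering_vectors)
for row in sim:
    print(" ".join(f"{value:5.2f}" for value in row))
```

The diagonal is 1 by construction, and the matrix is symmetric. Off-diagonal values well below 1 would mean the best steering direction at one step differs from the best direction at another.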

A new ARES tutorial from Narmeen Oozeer: Getting started in long-horizon interp. When do agents fail to accurately model their environment? How do we fix them? And how can you run these experiments on your own machine?