pierre orhan

@pierreorhan

Exploring with giants’ treasure maps. PhD student with Yves Boubenec and Jean-Rémi King

ID: 1229240448532852736

17-02-2020 03:05:47

95 Tweets

176 Followers

291 Following

Our article on the perturbative approach is out in Nature Communications. Check out how perturbations can be used to study the way neurons perceive the world. Work in collaboration with Matías Goldin, Olivier Marre and Alexander Ecker. Thread below: nature.com/articles/s4146…

A few people have asked me how our recent superposition paper (transformer-circuits.pub/2022/toy_model…) relates to classical ideas in neural coding / connectionism / distributed representations. I wanted to share a few thoughts, although I'll caveat that I'm very much not an expert on these topics!

We don't yet know what learning rules are implemented in the brain. Can we understand the representation and function dynamics induced by candidate learning rules in deep networks? New preprint with Cengiz Pehlevan arxiv.org/abs/2210.02157

1/ Our new preprint biorxiv.org/content/10.110… on when grid cells appear in trained path integrators, w/ Sorscher, Gabriel Mel, Aran Nayebi, Lisa Giocomo and Daniel Yamins, critically assesses claims made in a #NeurIPS2022 paper described below. Several corrections in our thread ->

Riffusion: real-time music generation with Stable Diffusion. Hugging Face model: huggingface.co/riffusion/riff… Project page: riffusion.com/about

Major update on our "dual" feature encoding by excitatory and inhibitory cortical cells, now including simulations and super cool theoretical work by pierre orhan. And again, kudos to Adrian Duszkiewicz for all the hard work! biorxiv.org/content/10.110…

Our paper is out in Nature Human Behaviour🔥🔥 ‘Evidence of a predictive coding hierarchy in the human brain listening to speech’ 📄nature.com/articles/s4156… 💡Unlike language models, our brain makes distant & hierarchical predictions with Alexandre Gramfort and Jean-Rémi King Thread👇

The season finale of the mixed 🍝 vs. modular 🧱 selectivity debate will take place this year at the #cosyne2023 workshops! Join us to hear what our stellar lineup thinks about single-neuron interpretability and disentangled representations! Co-organized with Jeff Johnston and James Whittington.

🔥New paper accepted to ACL 2023! “Language acquisition: do children and language models follow similar learning stages?” With Yair Lakretz and Jean-Rémi King arxiv.org/abs/2306.03586 Very happy to share this work from my internship at @MetaAI ! Three key results below 👇 1/8

Feeling very inspired about ✨Using ANNs for Studying Human Language Learning and Processing (ANN-humlang.github.io)✨ after the workshop that Tamar Johnson and I organized this week at @ILLC_amsterdam! Many thanks to all our speakers and participants for such a great event,

We grieve the passing of Dr. Yves Frégnac. Yves has shaped the study of cortical processing and plasticity. He heralded interdisciplinarity and was one of the founding fathers of our institute. Our thoughts go to his family and to his friends in the community and beyond. pic.x.com/BGE4QGcg8g

Jérémy Rapin and I are very happy to present exca: a Python decorator that eliminates the overhead of caching and cluster-based execution for complex pipelines. - install: `pip install exca` - doc: github.com/facebookresear… #python #DataScience #OpenSource 🙏 AI at Meta
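As a rough illustration of what "eliminating the overhead of caching" with a decorator means, here is a minimal sketch using only the Python standard library. It is not exca's API: `disk_cache` and `slow_square` are hypothetical names, it assumes the function's arguments are picklable and pickle deterministically, and it does not attempt any cluster submission.

```python
import functools
import hashlib
import pickle
import tempfile
from pathlib import Path


def disk_cache(cache_dir):
    """Hypothetical decorator (not exca's API): store results on disk, keyed by the call's arguments."""
    cache_dir = Path(cache_dir)
    cache_dir.mkdir(parents=True, exist_ok=True)

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Build a key from the function name and its pickled arguments.
            payload = pickle.dumps((func.__qualname__, args, sorted(kwargs.items())))
            key = hashlib.sha256(payload).hexdigest()
            path = cache_dir / f"{key}.pkl"
            if path.exists():
                # Cache hit: load the stored result instead of recomputing it.
                with path.open("rb") as f:
                    return pickle.load(f)
            # Cache miss: compute, then persist the result for later calls.
            result = func(*args, **kwargs)
            with path.open("wb") as f:
                pickle.dump(result, f)
            return result

        return wrapper

    return decorator


calls = []  # records each real (non-cached) computation


@disk_cache(tempfile.mkdtemp())
def slow_square(x):
    calls.append(x)
    return x * x


print(slow_square(4))  # computed: 16
print(slow_square(4))  # loaded from the disk cache: 16
print(len(calls))      # 1
```

The point of the decorator form is that the pipeline function itself stays unchanged; the caching logic lives in one reusable wrapper.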

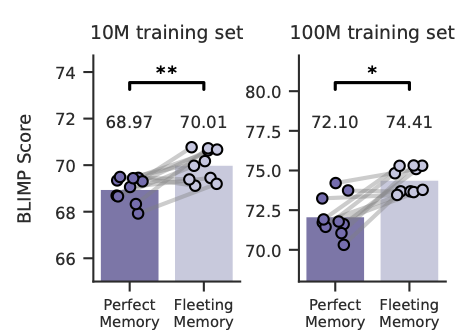

New preprint with Abishek Thamma! Adding human-like fleeting memory to transformers improves language learning but impairs reading-time prediction. This supports ideas from cognitive science but complicates the link between model architecture and behavioural prediction.