Pierre Manceron

@phylliade

@raidium_med

ID: 324584605

26-06-2011 21:57:39

9 Tweets

68 Followers

976 Following

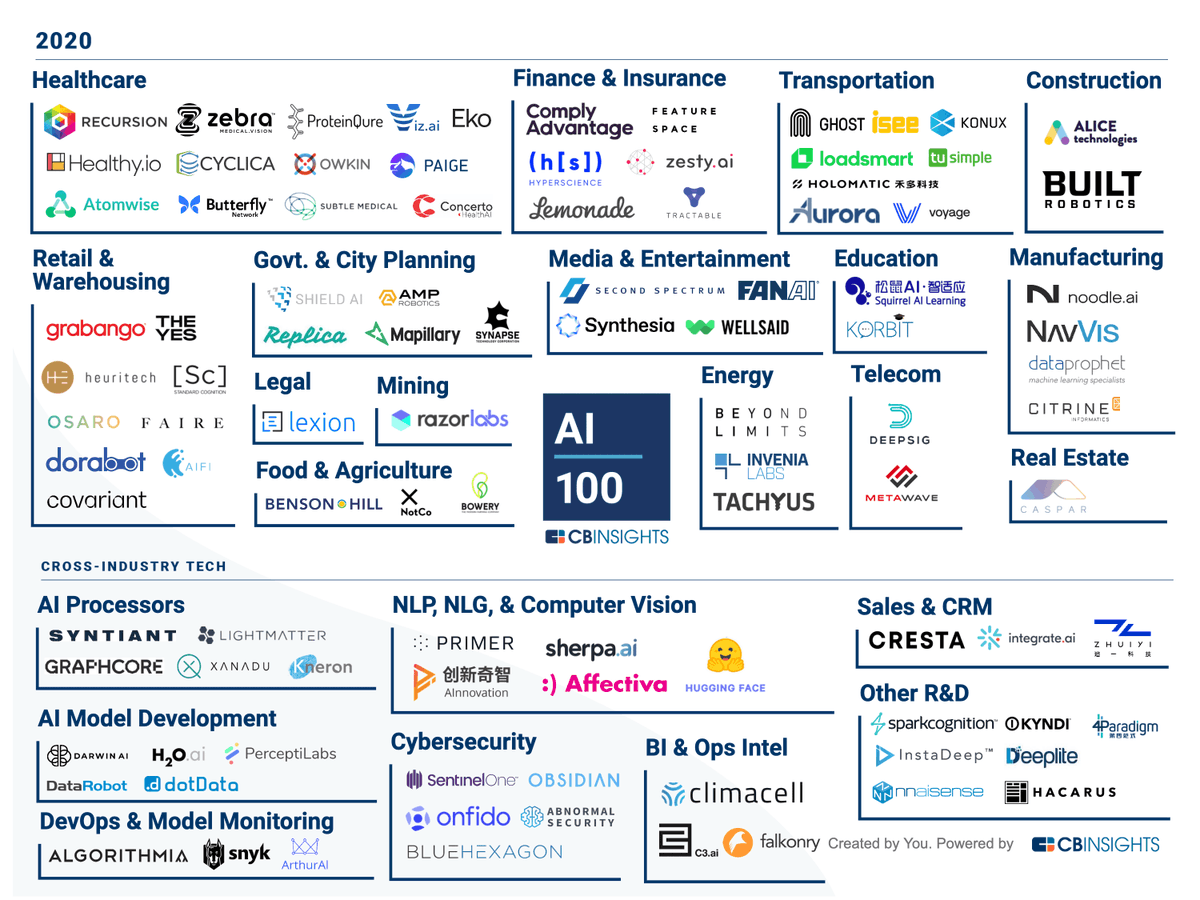

Owkin listed for the second year in a row in CB Insights #AI100 list of #AI startups redefining industries! 🎉 It's awesome to be included among so many global key players. #medicalresearch #AIforhealthcare #GoOwkin cbinsights.com/research/artif…