NoAI

@onlyyouuu8

Smashing every FemTwit account, no exceptions.

ID: 1337021952884543498

10-12-2020 13:10:57

3.3K Tweets

41 Followers

161 Following

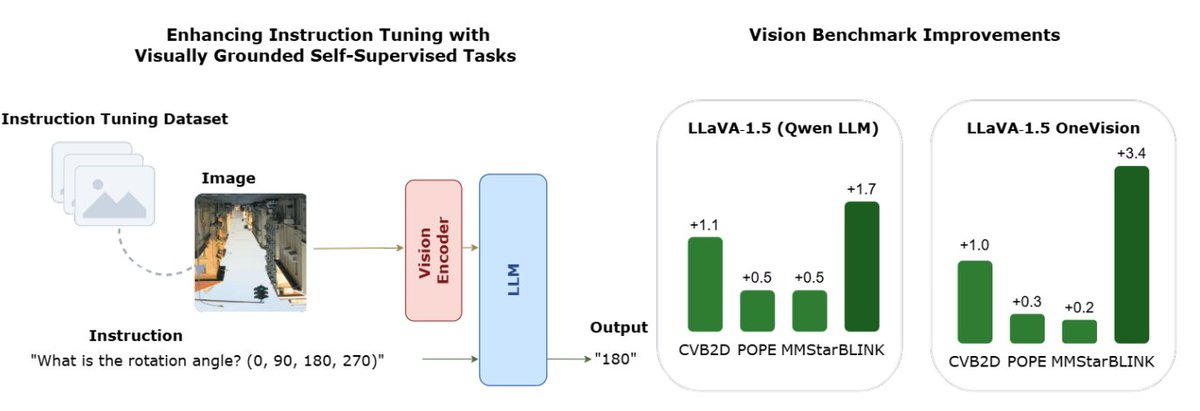

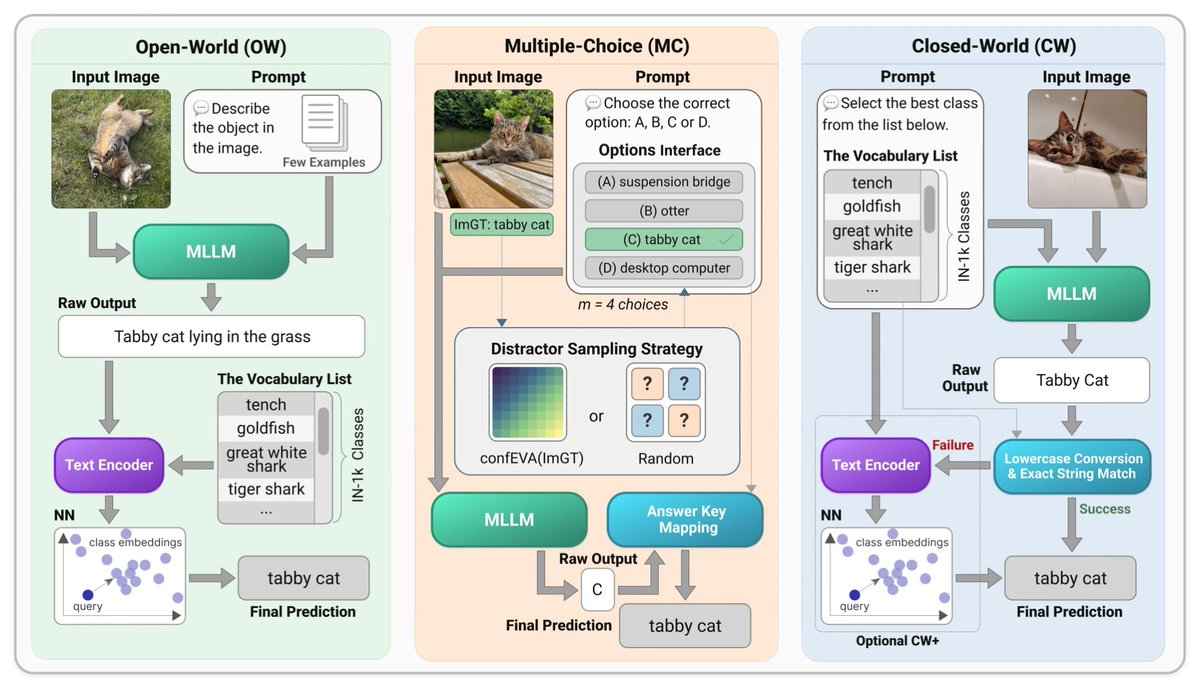

📝 Multimodal Large Language Models as Image Classifiers: MLLMs are increasingly used for visual tasks, but evaluating their image classification ability has produced conflicting conclusions. With N. Kisel, Illia Volkov 🇺🇦, J. Matas. #CVPR26 findings

Excited to share our latest research on the limitations of RL-finetuned VLMs! We investigate how robust the model responses are to textual perturbations, and how consistent their CoT remains under them. Work led by Rosie Zhao during her internship with the Multimodal Machine Intelligence team at Apple.

My essay on Vision Language Models just went live! Massive thanks to the Towards Data Science team for featuring it on their Deep Dives section. Read it here: towardsdatascience.com/how-vision-lan… Conceptual deep dive, git repo, video tutorial - it's all in there!

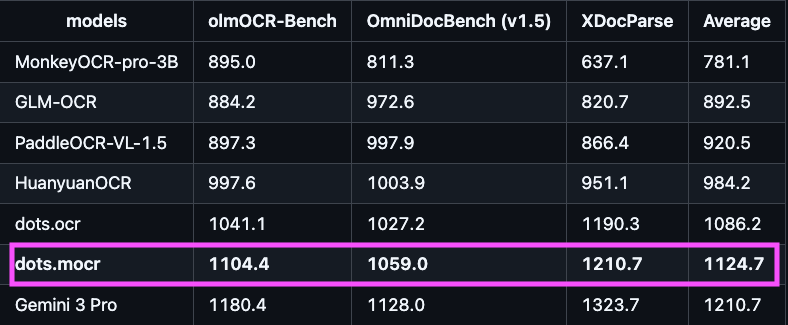

A bunch of new open OCR models have been released recently, all available as uv scripts on Hugging Face: 19 models from 0.9B to 8B params. Some standouts:

- Qianfan-OCR: 192 languages
- dots.mocr: charts/figures → editable SVG
- GLM-OCR: 94.6% accuracy, only 0.9B params