NLPurr

@nlpurr

SciComm of Academic NLP Papers | Research Scientist | Explainability, Prompting, Benchmarking, Metrics, Red-Teaming & Eval of LLMs

ID: 1543673611671638016

https://nlpurr.github.io/ 03-07-2022 19:13:15

936 Tweets

1.1K Followers

744 Following

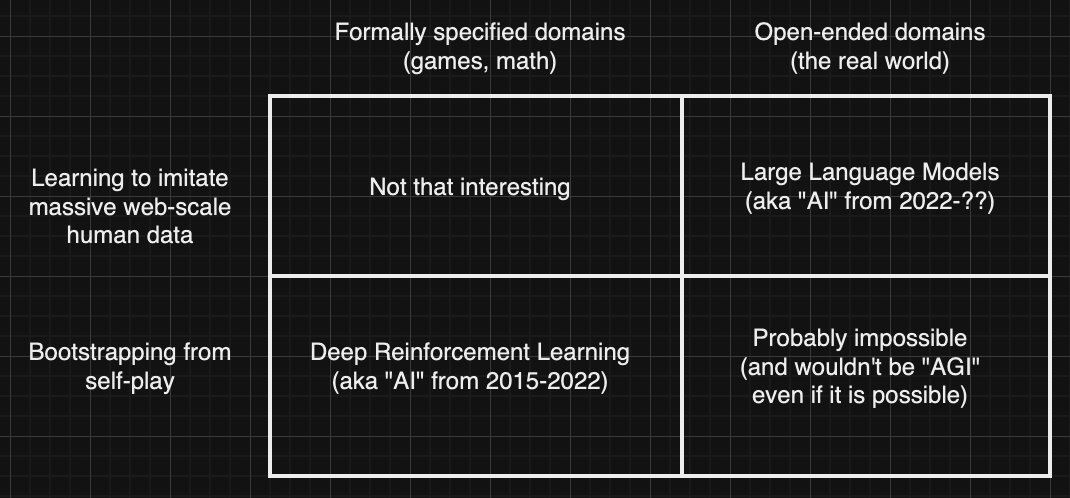

Jim Fan Richard Sutton Animals and humans get very smart very quickly with vastly smaller amounts of training data. My money is on new architectures that would learn as efficiently as animals and humans. Using more data (synthetic or not) is a temporary stopgap made necessary by the limitations of our

The next 10x in deep learning efficiency gains are going to come from intelligent intervention on training data. But tools for automated data curation at scale didn’t exist—until now. I’m so excited to announce that I’ve co-founded @DatologyAI, with Ari Morcos and Bogdan Gaza

lilian weng's blog is really nice!! many good overviews of various LLM/AI topics. lilianweng.github.io