Nikhil Barhate

@nikhilbarhate99

ML @scale_AI | prev @AMD @mila_quebec

ID: 3245294515

https://nikhilbarhate99.github.io

14-06-2015 15:04:57

1.1K Tweets

204 Followers

810 Following

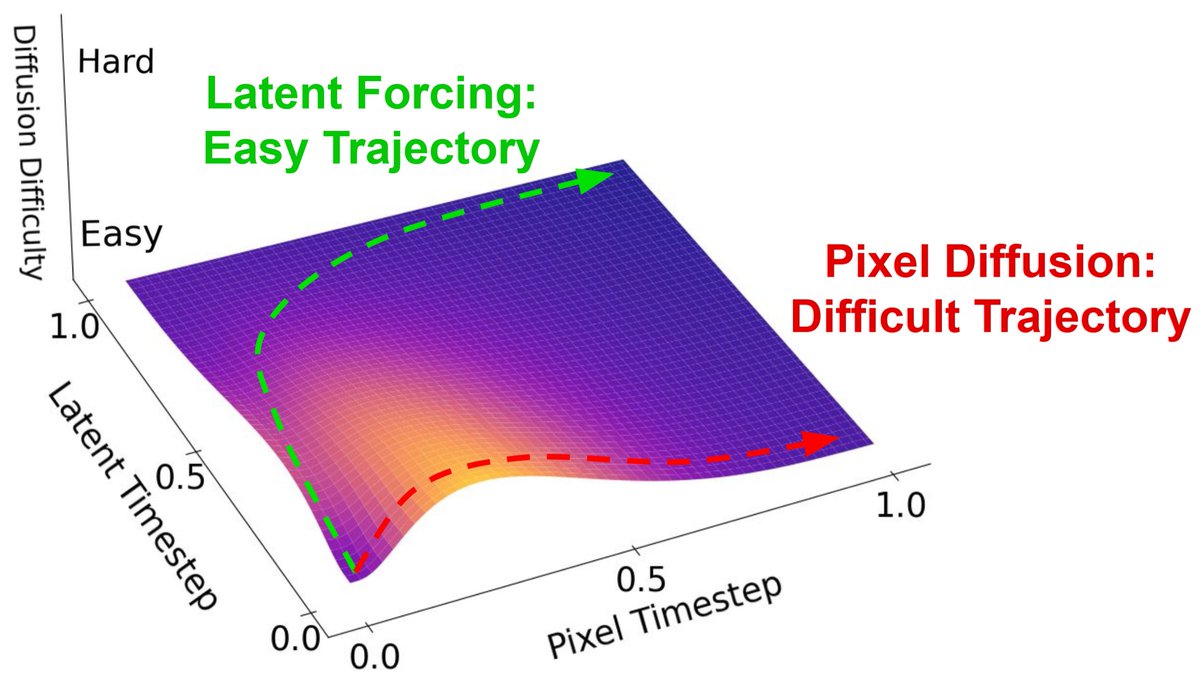

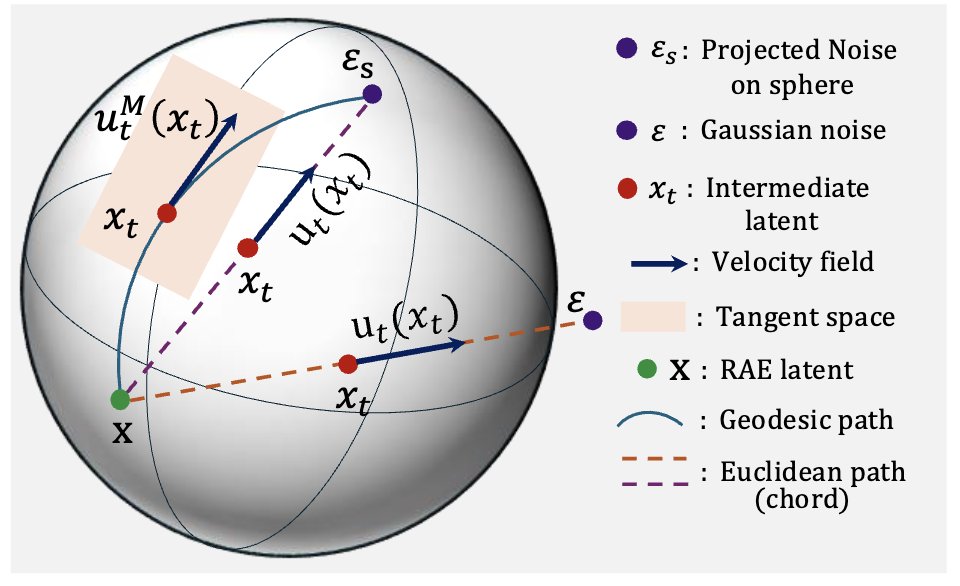

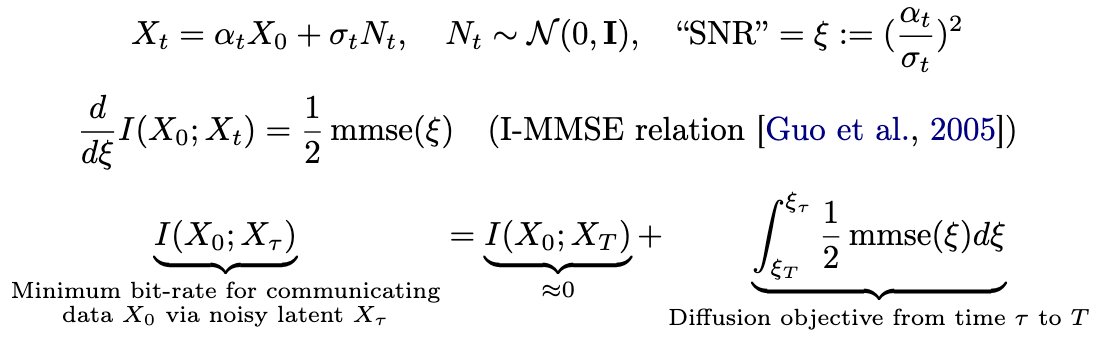

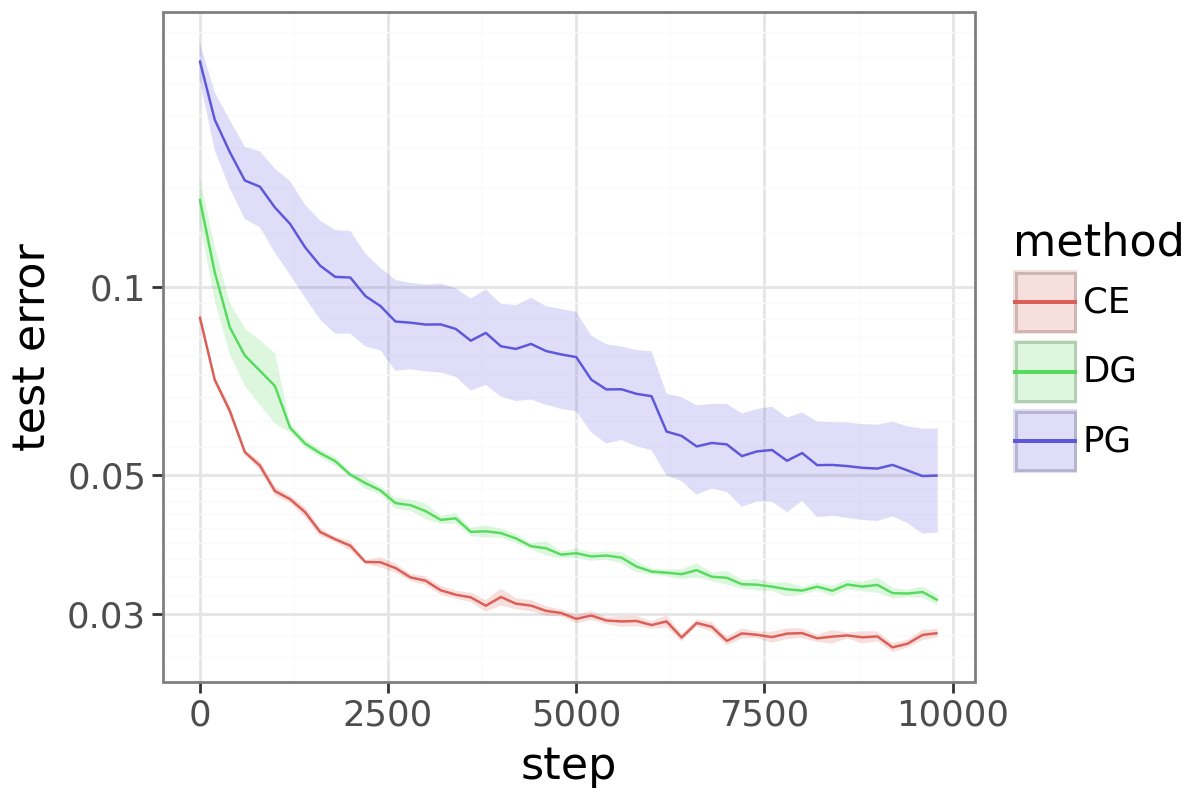

What's the right space to diffuse in: Raw Data or Latents? Why not both! In Latent Forcing, we order a joint diffusion trajectory to reveal Latents before Pixels, leading to improved convergence while being lossless at encoding and end-to-end at inference. w/ Fei-Fei Li+... 1/n

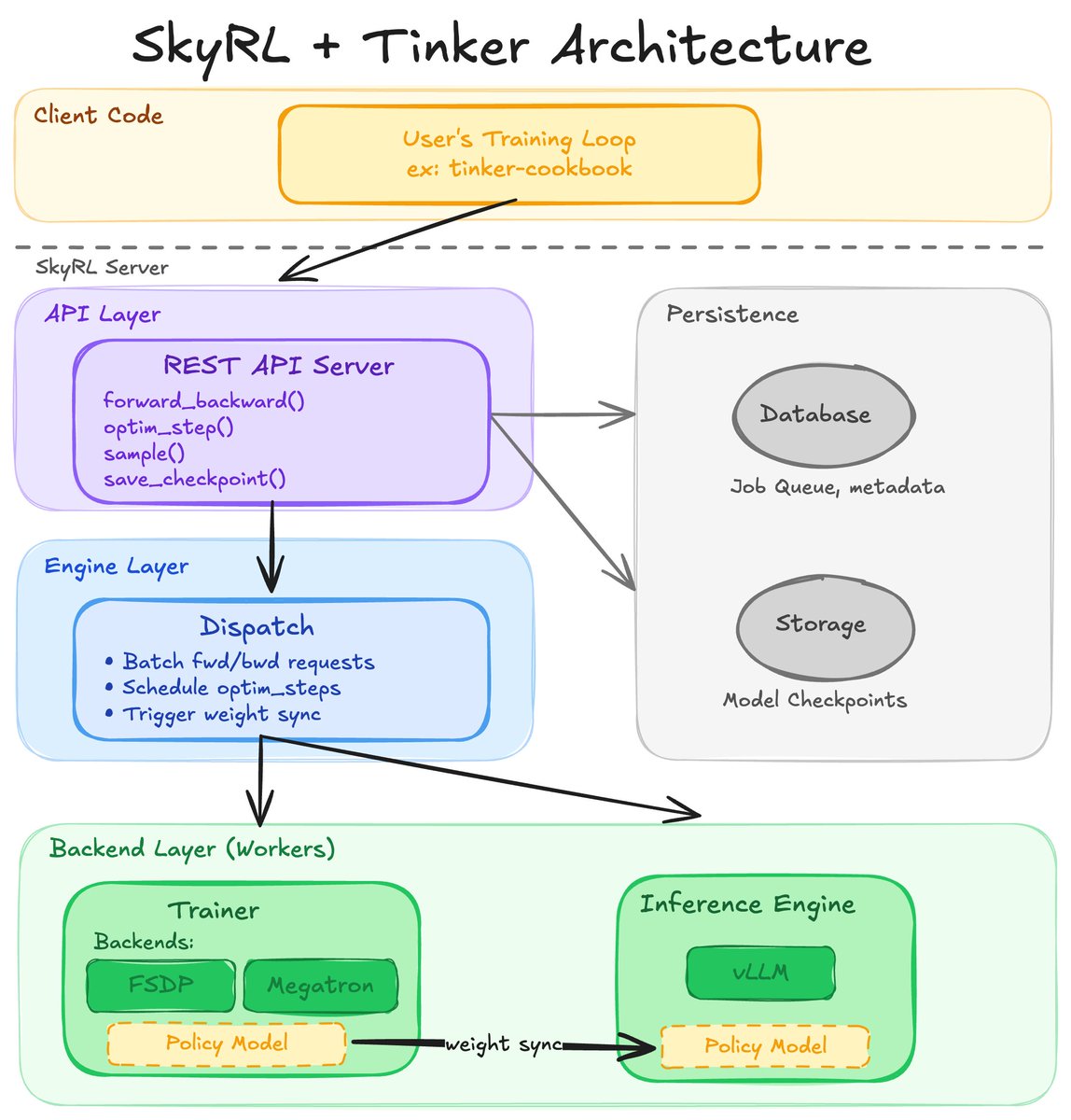

We scaled off-policy RL to sim-to-real. To our knowledge, FlashSAC is the fastest and most performant RL algorithm across IsaacLab, MuJoCo Playground, and many more, all with a single set of hyperparameters. Project page: holiday-robot.github.io/FlashSAC Paper: arxiv.org/pdf/2604.04539

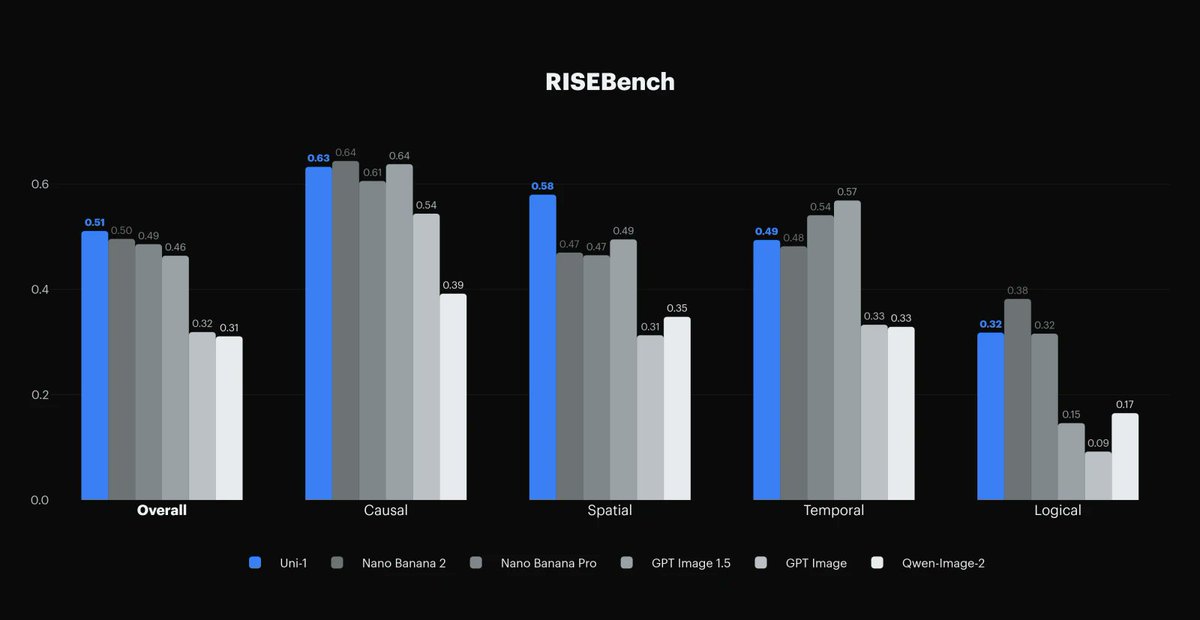

🤖 Co-training is everywhere (sim↔real [e.g. GR00T, LBM], human↔robot [e.g. PI, EgoScale], even non-robot data [e.g. PI, LBM]).

But why does it work? How can we improve it further?

Taking sim-and-real imitation learning in diffusion/flow-based models as the test bed, we performed

![Yu Lei (@_outofmemory_) on Twitter photo](https://pbs.twimg.com/media/HGXusk-bwAAQywq.jpg)