Khue Le

@netw0rkf10w

Head of R&D at getvocal.ai.

Building conversational AI by day, doing optimization research by night.

ID: 195372160

https://khue.fr 26-09-2010 14:52:22

521 Tweets

292 Followers

131 Following

Congratulations to Dr. Mathilde Caron, who successfully defended her PhD **in person** after a brilliant presentation. The committee was prestigious: Cordelia Schmid, Andrew Zisserman, Alyosha Efros, Diane Larlus, and Alexey Dosovitskiy.

In our NeurIPS 2021 paper (with Karteek Alahari), we showed that CCCP (the concave-convex procedure) is Frank-Wolfe in disguise. Happy to see other people recently rediscovering this fact and presenting it as a striking result. Want to know another equally striking fact? Mean field is also Frank-Wolfe!👇
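A minimal sketch of the mean-field claim, under our own assumptions and not taken from the paper's code. It uses a dense-CRF-style energy minimized over a product of probability simplices, and it adds a negative-entropy term to Frank-Wolfe's linear subproblem (our reading of a "regularized" Frank-Wolfe step). That subproblem has the closed-form solution softmax(−∇E) row by row, so a Frank-Wolfe step with step size 1 reproduces the parallel mean-field update. The function names, the `kernel`/`compat` parametrization, and the Potts compatibility are hypothetical choices for illustration; the paper's exact formulation may differ.

```python
import numpy as np


def softmax_rows(z):
    """Numerically stable softmax applied independently to each row."""
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def energy_grad(q, unary, kernel, compat):
    """Gradient of a hypothetical dense-CRF energy
    E(q) = <unary, q> + 0.5 * sum_ij kernel[i, j] * q_i^T compat q_j,
    assuming kernel and compat are symmetric and kernel has a zero diagonal."""
    return unary + kernel @ q @ compat


def mean_field_step(q, unary, kernel, compat):
    """Parallel mean-field update: q_i <- softmax(-dE/dq_i)."""
    return softmax_rows(-energy_grad(q, unary, kernel, compat))


def regularized_fw_step(q, unary, kernel, compat, alpha):
    """One Frank-Wolfe step whose linear subproblem is regularized by
    negative entropy: s = argmin_{x in simplices} <grad, x> + sum x log x.
    That minimizer is the row-wise softmax(-grad)."""
    s = softmax_rows(-energy_grad(q, unary, kernel, compat))
    return q + alpha * (s - q)


def entropic_objective(x, g):
    """Value of <g, x> + sum x log x, for strictly positive x."""
    return np.sum(g * x) + np.sum(x * np.log(x))


rng = np.random.default_rng(0)
n, labels = 5, 3
unary = rng.normal(size=(n, labels))
kernel = rng.random((n, n))
kernel = 0.5 * (kernel + kernel.T)   # symmetric pairwise weights
np.fill_diagonal(kernel, 0.0)        # no self-interaction
compat = 1.0 - np.eye(labels)        # Potts label compatibility
q = softmax_rows(rng.normal(size=(n, labels)))

# With step size alpha = 1, the regularized Frank-Wolfe step is the
# mean-field update.
fw = regularized_fw_step(q, unary, kernel, compat, alpha=1.0)
mf = mean_field_step(q, unary, kernel, compat)
print(np.allclose(fw, mf))

# Sanity check: softmax(-g) does at least as well as random points of the
# product of simplices on the entropy-regularized linear subproblem.
g = energy_grad(q, unary, kernel, compat)
best = entropic_objective(softmax_rows(-g), g)
others = [entropic_objective(rng.dirichlet(np.ones(labels), size=n), g)
          for _ in range(100)]
print(best <= min(others))
```

With a step size below 1, the same function gives a damped update that moves only part of the way toward the mean-field solution, which is one way to read "mean field is also Frank-Wolfe".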

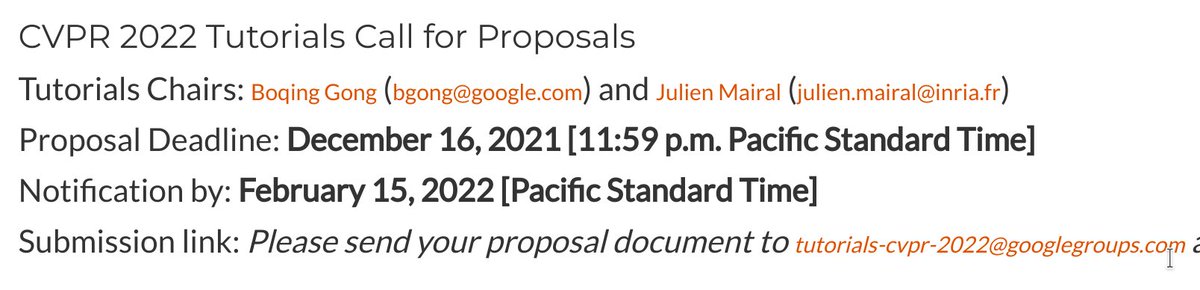

[DISTINCTION 🏆] Congratulations to Julien Mairal of the Thoth project-team at the @inria centre of Université Grenoble Alpes, recipient of a European Research Council (ERC) Consolidator Grant 👏 Find out more ➕ here: inria.fr/fr/julien-mair… #MachineLearning #Algorithm

![Centre Inria de l'Université Grenoble Alpes (@inria_grenoble) on Twitter photo](https://pbs.twimg.com/media/Fny1LVaWQAALR2U.jpg)

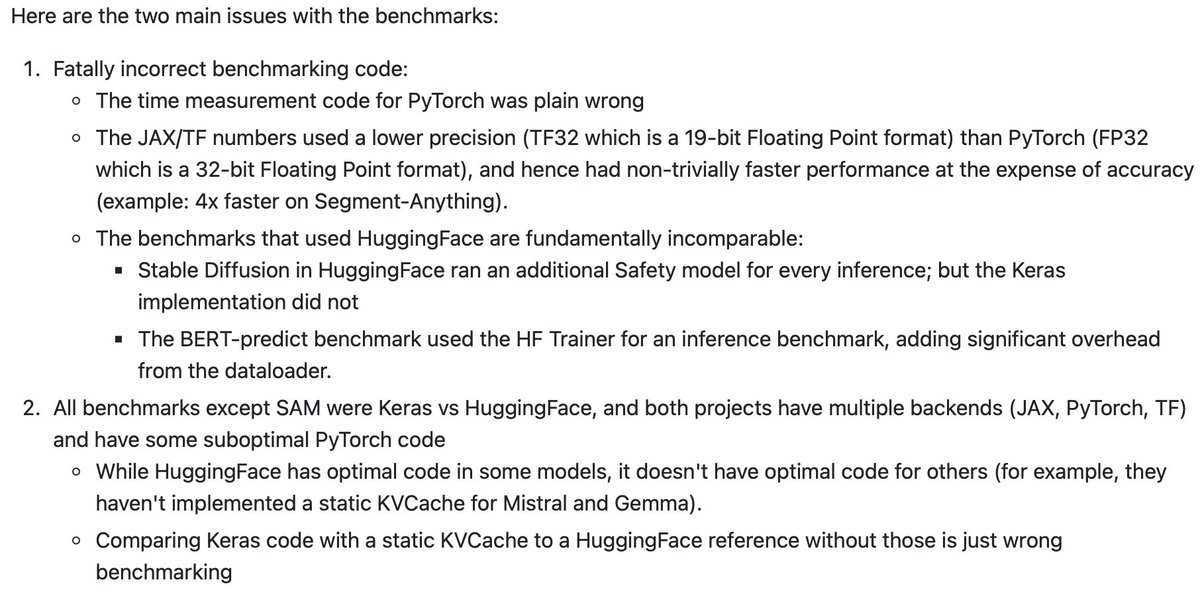

New blog post: "Yet Another ICML Award Fiasco". The story of the ICML 2023 Outstanding Paper Award given to the D-Adaptation paper, whose results are worse than the ones from 9 years ago. Please share it to start a much-needed conversation about mistakenly granted awards: parameterfree.com/2023/08/30/yet…

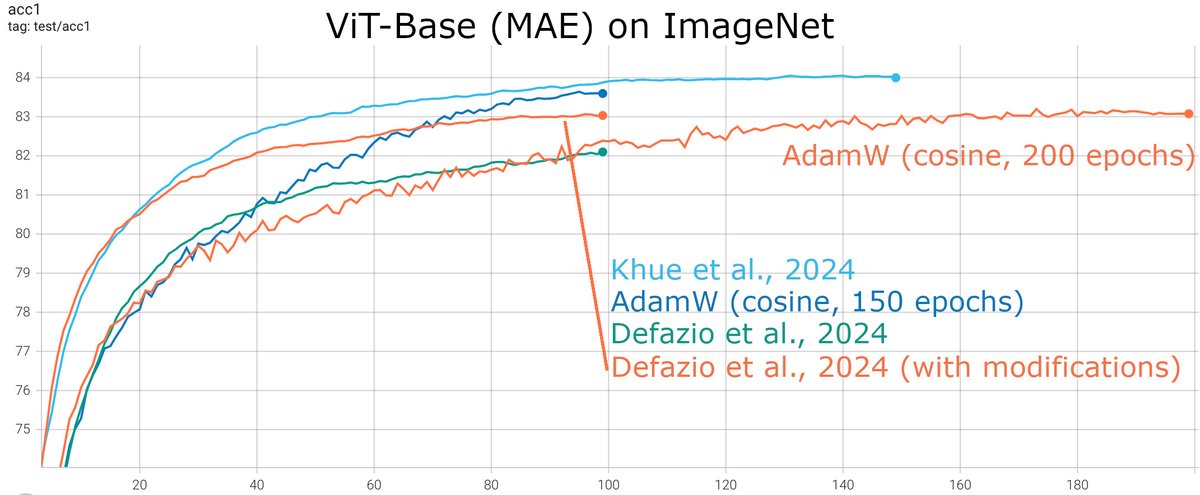

Hi Aaron Defazio. Here's the result of my optimizer, compared to yours (still running). Can you beat my blue curve with hyper-parameter tuning? ;) Please give it a try using this code: github.com/facebookresear…

While waiting for Aaron Defazio's tuning result, here's my full run of his method (green curve). Interestingly, some modifications inspired by my optimizer seem to boost its performance. Note: MAE's default hyperparameters are used for all experiments.