Nanbeige

@nanbeige

Nanbeige LLM Lab

ID: 1740367494102351872

https://huggingface.co/Nanbeige

28-12-2023 13:42:07

45 Tweets

1.1K Followers

202 Following

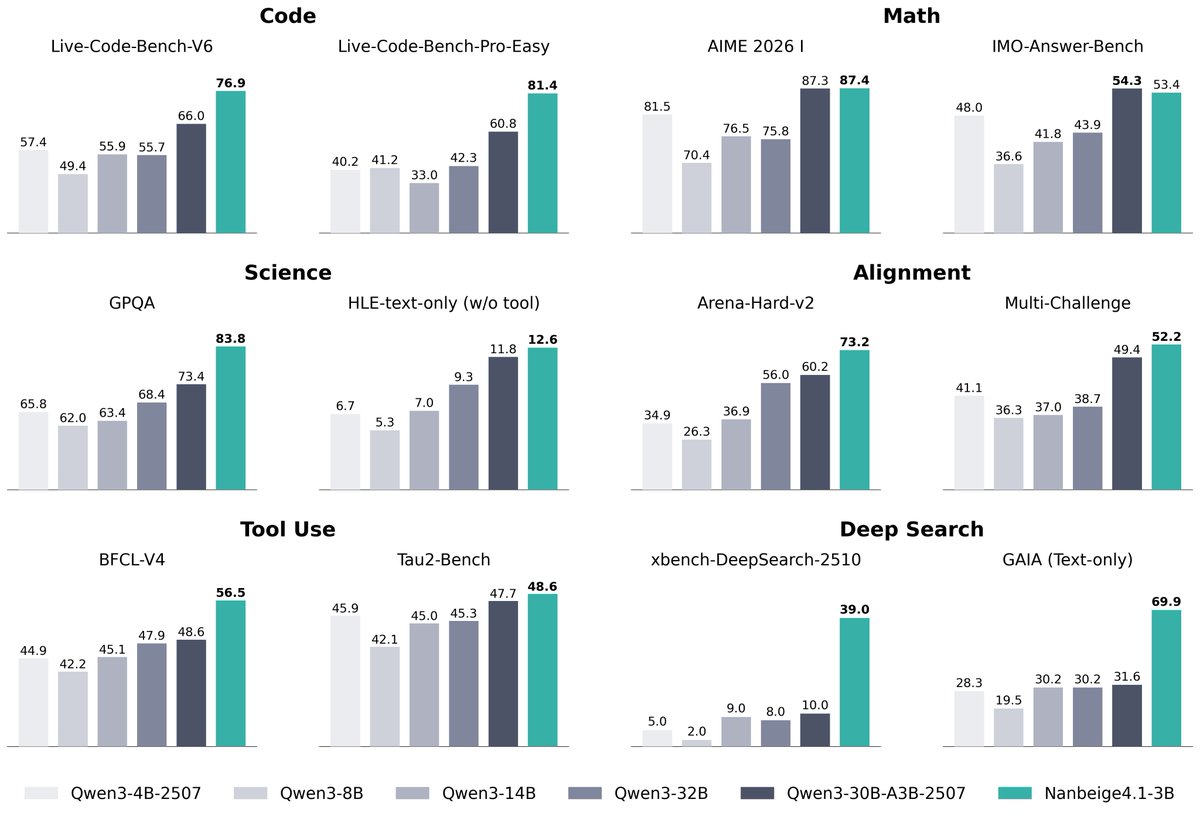

Adina Yakup Nanbeige Tested Nanbeige4-3B-Thinking (Q3_K_S) locally in Privacy AI with on-device tool calling (search_web). Performance on iOS is excellent. At 3B, it’s lightweight enough to serve as a practical daily offline assistant, yet still handles reasoning and tool use reliably. Congrats to

N8 Programs Thank you again for your interest! We hope the model will attract wider attention and be tested by the community to evaluate its performance. The technical report will be released tomorrow—stay tuned! 🌟