Moon

@mnagai_

PhD student at Koo lab @CSHL | Computational Biology | AI Alignment

ID: 1261246801878716416

15-05-2020 10:47:47

408 Tweets

69 Followers

230 Following

Mark your calendars: The AI x Bio meeting of 2026 will be held at CSHL on May 26-31! The program brings together 50+ invited leaders in genomics, transcriptomics, protein design, drug discovery, neuroAI, pathology, agentic AI, and more! Abstract: 3/26 meetings.cshl.edu/meetings.aspx?…

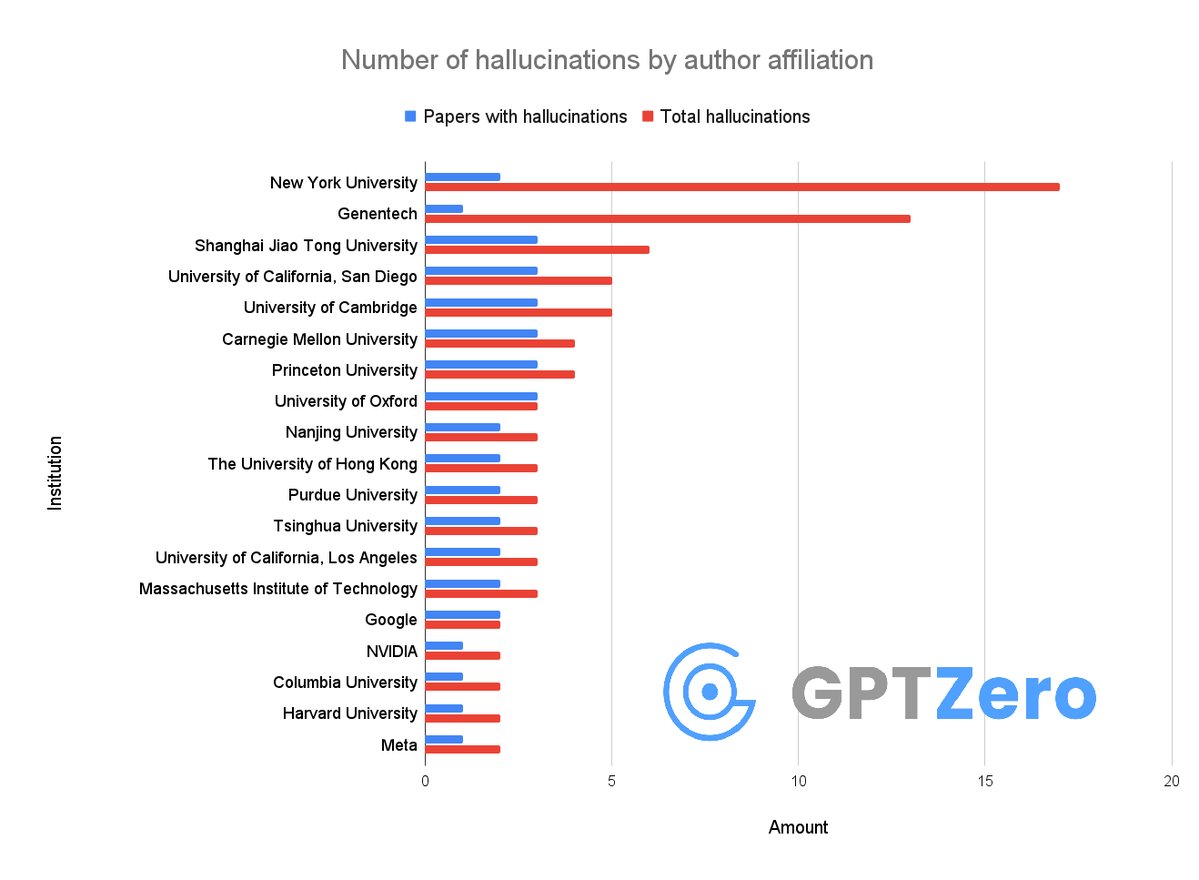

Okay so, we just found that over 50 papers published at @Neurips 2025 have AI hallucinations. I don't think people realize how bad the slop is right now. It's not just that researchers from Google DeepMind, Meta, Massachusetts Institute of Technology (MIT), Cambridge University are using AI - they allowed LLMs to generate

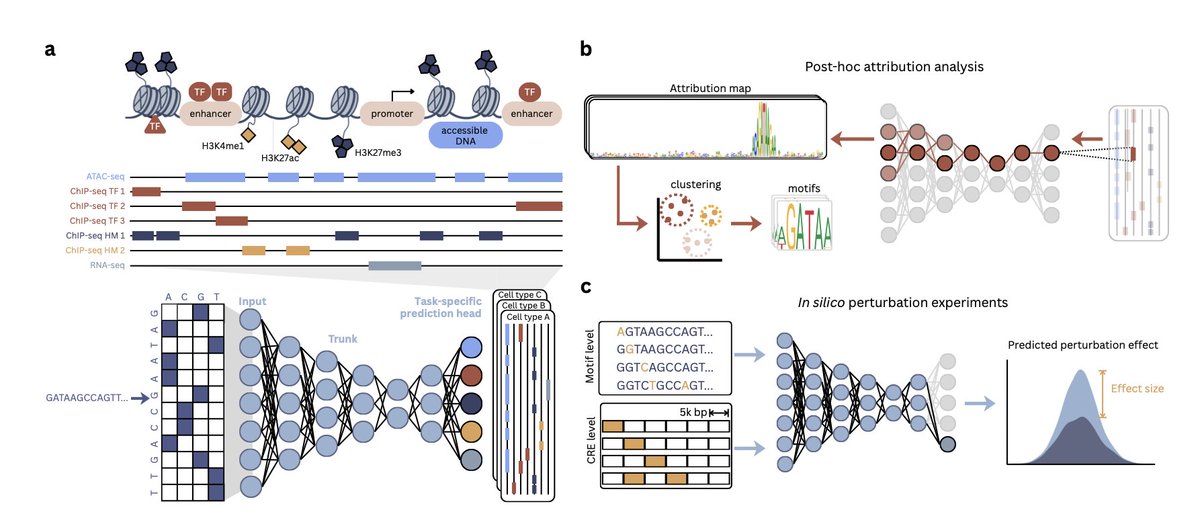

1/13 Excited to share our (Anna Spiro, Maria Chikina, Sara Mostafavi) latest preprint! 🧬💻 Personal Genome Prediction isn't just a downstream task; it’s the ultimate end-to-end benchmark for Variant Effect Prediction. We put the new SOTA AlphaGenome to the test and uncovered a

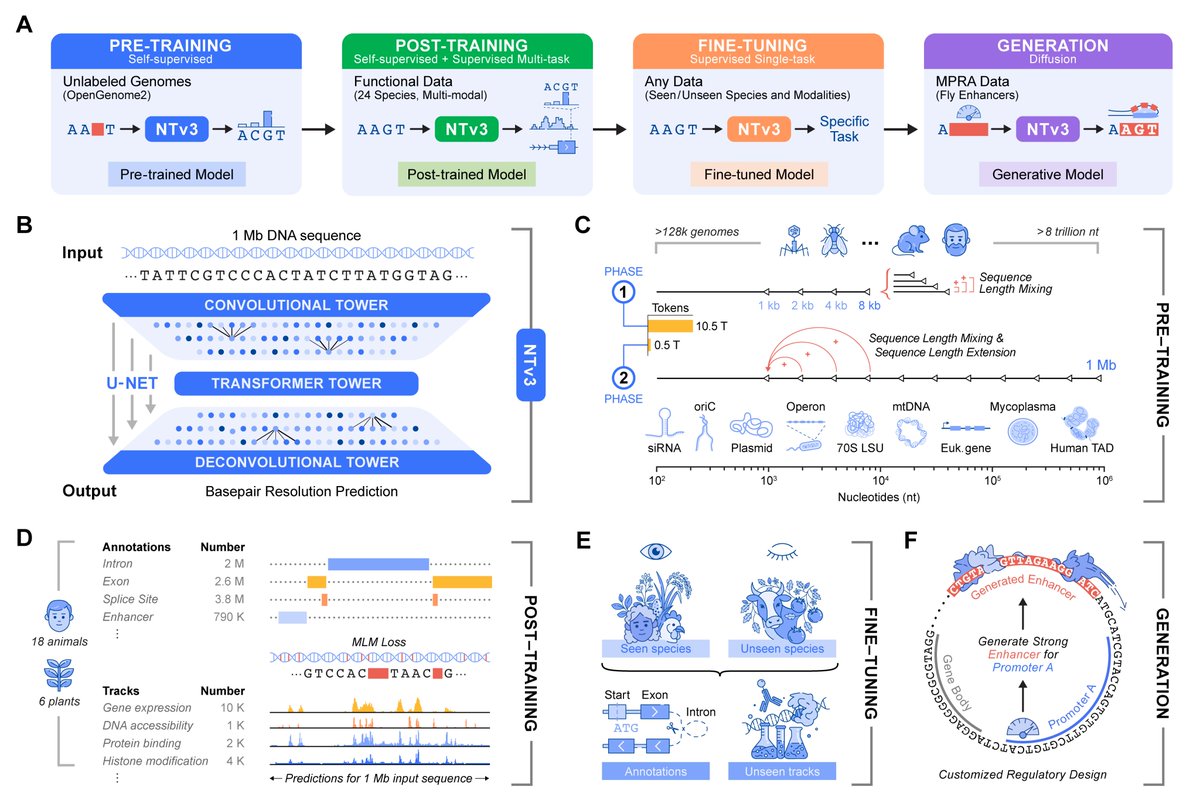

Check out this latest proof-of-concept regulatory DNA LM (called ARSENAL #GGMU) by Aman Patel that is pretty much the opposite of the current trends in DNALM literature. (Beware: Long thread) 1/