Migrating to Mastodon

@[email protected]

ID: 84802491

http://www.pluralsight.com 24-10-2009 08:54:53

3.3K Tweets

176 Followers

961 Following

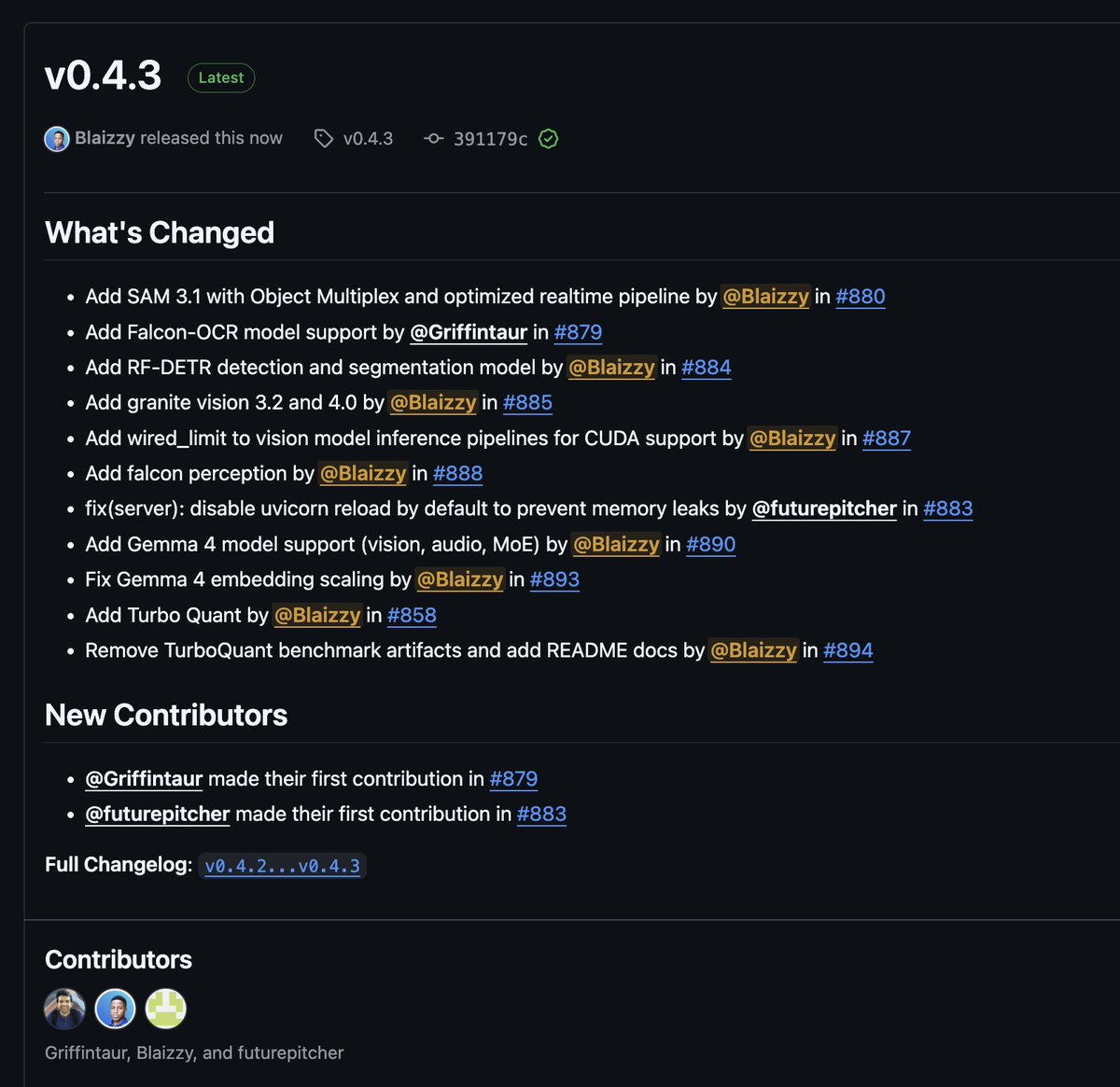

mlx-vlm v0.4.3 is here 🚀

Day-0 support:

- 🔥 Gemma 4 (vision, audio, MoE) by Google DeepMind
- 🦅 Falcon-OCR + Falcon Perception by Technology Innovation Institute
- 🪨 Granite Vision 4.0 by IBM Research

New models:

- 🎯 SAM 3.1 with Object Multiplex by Facebook
- 🔍 RF-DETR detection & segmentation by

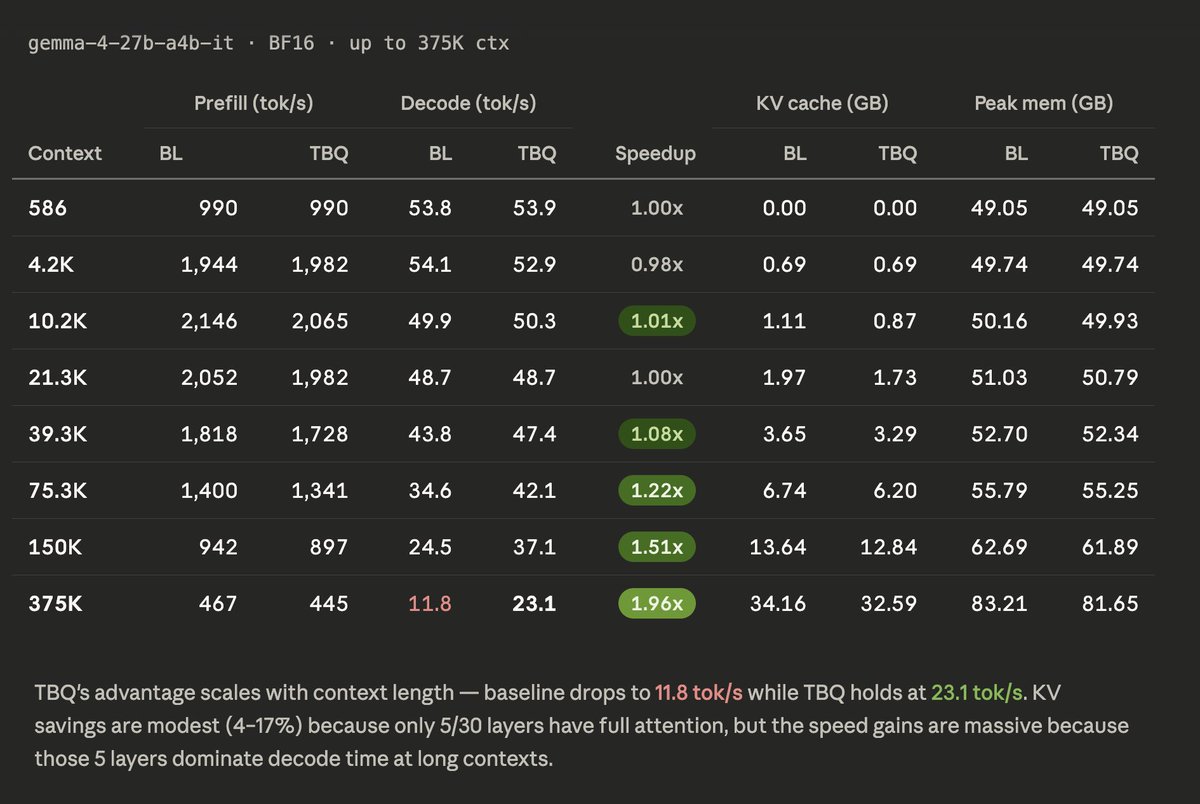

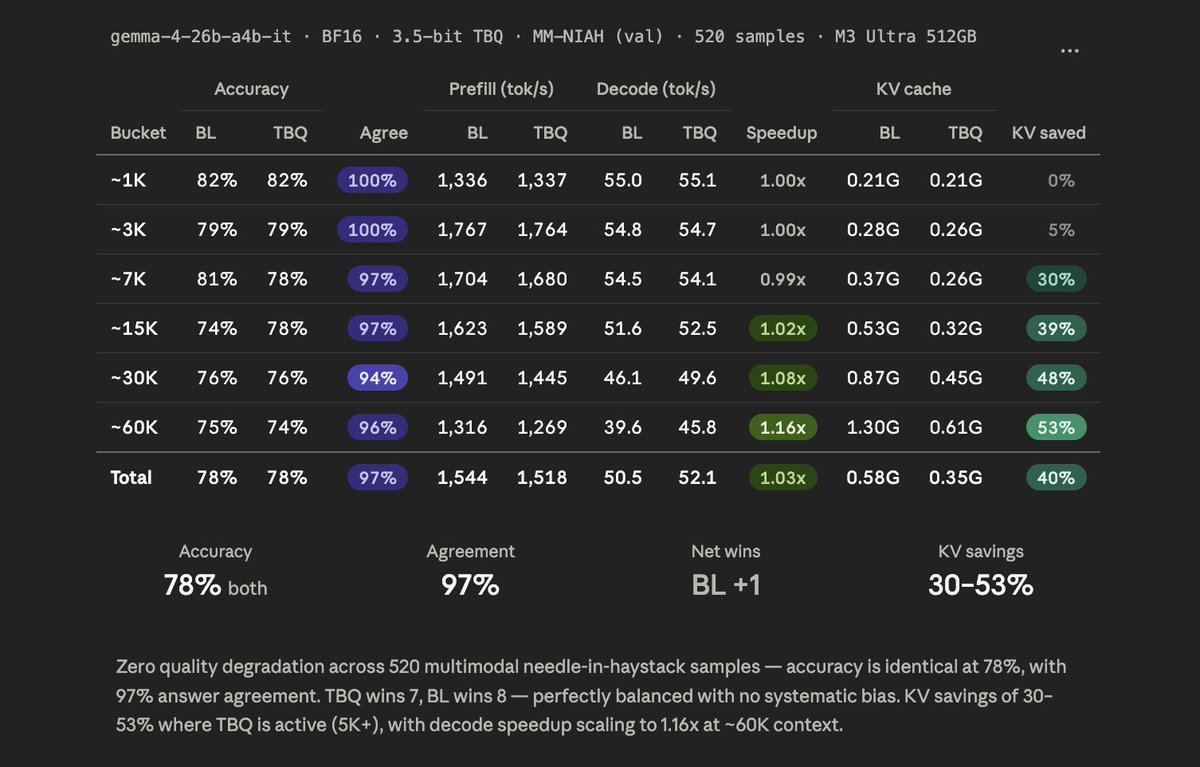

TurboQuant: Open Evals on MLX 🔥

Yesterday I launched mlx-vlm v0.4.4 with major TurboQuant performance improvements.

Today, the open benchmark results on MM-NIAH (val, 520 samples) using Gemma 4 26B IT by Google DeepMind on M3 Ultra:

→ 0 quality loss: 78% accuracy for both

Awesome work by Tom Turney 🔥