Manuel Renner

@manu_rnnr

ID: 1711690681926701056

10-10-2023 10:30:42

26 Tweets

17 Followers

51 Following

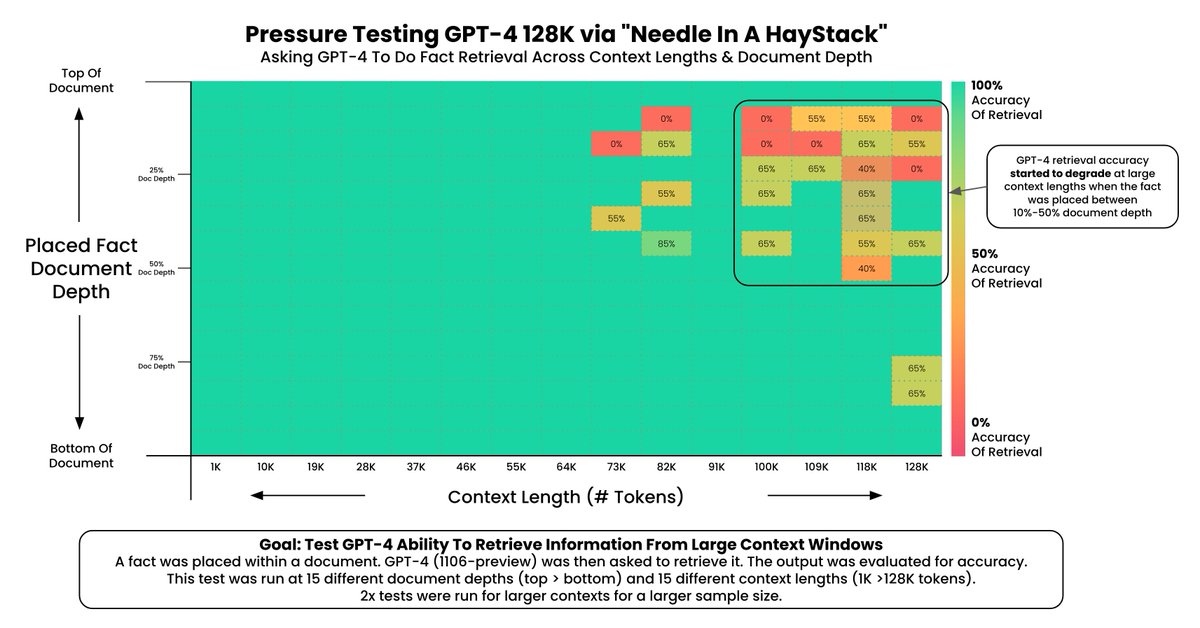

We're following #OpenAIDevDay live and listening to the announcements Sam Altman is starting to detail on stage. Highlights so far: 🎉 New model: GPT-4 Turbo. 1️⃣ Longer context length: 128k tokens 📅 Knowledge cutoff: up to April 2023.

Interesting analysis of how to measure the performance of your #RetrievalAugmentedGeneration pipeline across different #embeddings models and #rerankers using LlamaIndex's Retrieval Evaluation module 🦙 📊 🔢 buff.ly/3swok3W

🧑💻 In some cases, using asynchronous programming when developing applications with #LLMs can noticeably improve their performance. In this article, Manuel Renner takes a closer look at different techniques for building them in Python: diverger.medium.com/building-async… Opening a 🧵:
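To make the async point concrete, here is a minimal sketch (not taken from the linked article) of why concurrency can help when an application mostly waits on LLM requests. `fake_llm_call` is a hypothetical stand-in that simulates network latency with `asyncio.sleep`; no real LLM API is called, and the helper names are assumptions for illustration only.

```python
import asyncio
import time


async def fake_llm_call(prompt: str, delay: float = 0.1) -> str:
    """Hypothetical stand-in for a network-bound LLM request."""
    await asyncio.sleep(delay)  # simulates waiting on a remote API
    return f"response to: {prompt}"


async def run_sequentially(prompts: list[str]) -> list[str]:
    # Each call starts only after the previous one has finished.
    return [await fake_llm_call(p) for p in prompts]


async def run_concurrently(prompts: list[str]) -> list[str]:
    # asyncio.gather schedules all calls together and keeps input order.
    return await asyncio.gather(*(fake_llm_call(p) for p in prompts))


def timed(coro) -> tuple[list[str], float]:
    # Runs a coroutine to completion and reports wall-clock time.
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    prompts = ["a", "b", "c"]
    _, seq = timed(run_sequentially(prompts))
    _, conc = timed(run_concurrently(prompts))
    print(f"sequential: {seq:.2f}s, concurrent: {conc:.2f}s")
```

With simulated I/O like this, the concurrent version should take roughly as long as a single call rather than the sum of all calls. Against a real API, the gain depends on rate limits and on how much of the work is actually I/O-bound.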