David J Wu

@lightvector1

Researcher, game AI enthusiast, author of KataGo (katagotraining.org)

ID: 1317558974154158082

17-10-2020 20:12:11

27 Tweets

503 Followers

59 Following

Here's my conversation with Noam Brown, co-creator of AI systems that achieve superhuman-level performance in poker and in Diplomacy, a game that involves strategic negotiation with humans. This was a fascinating, technical conversation. youtube.com/watch?v=2oHH4a…

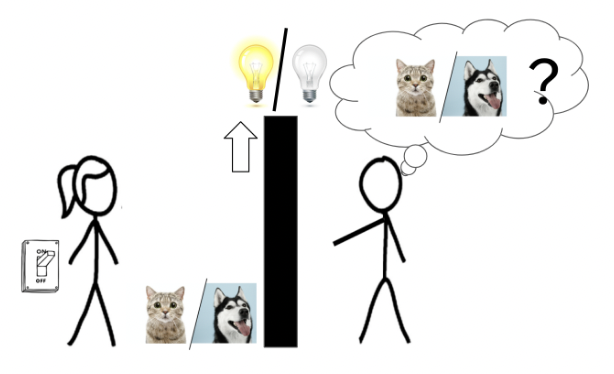

In the recent paper arxiv.org/abs/2402.04494 Google DeepMind introduced a transformer chess network, but didn't include Lc0 in their comparison. We've used transformers for a while, and our network is stronger with fewer parameters. More details soon.

Google DeepMind ...and the blog post with more details is live at lczero.org/blog/2024/02/h…