Kyungmin Lee

@lee_kyungmin21

ID: 1735497643508297730

https://kyungminn.github.io/ 15-12-2023 03:11:30

39 Tweets

56 Followers

39 Following

🚀Excited to share that our paper EgoX👀 has been accepted to #CVPR2026! Huge thanks to my co-first authors (taewoongkang, dohyeon), co-authors (Minho Park, junhahyung) and Prof. Jaegul Choo. See you in Denver!🏔️ #VideoGeneration #WorldModeling #Robotics

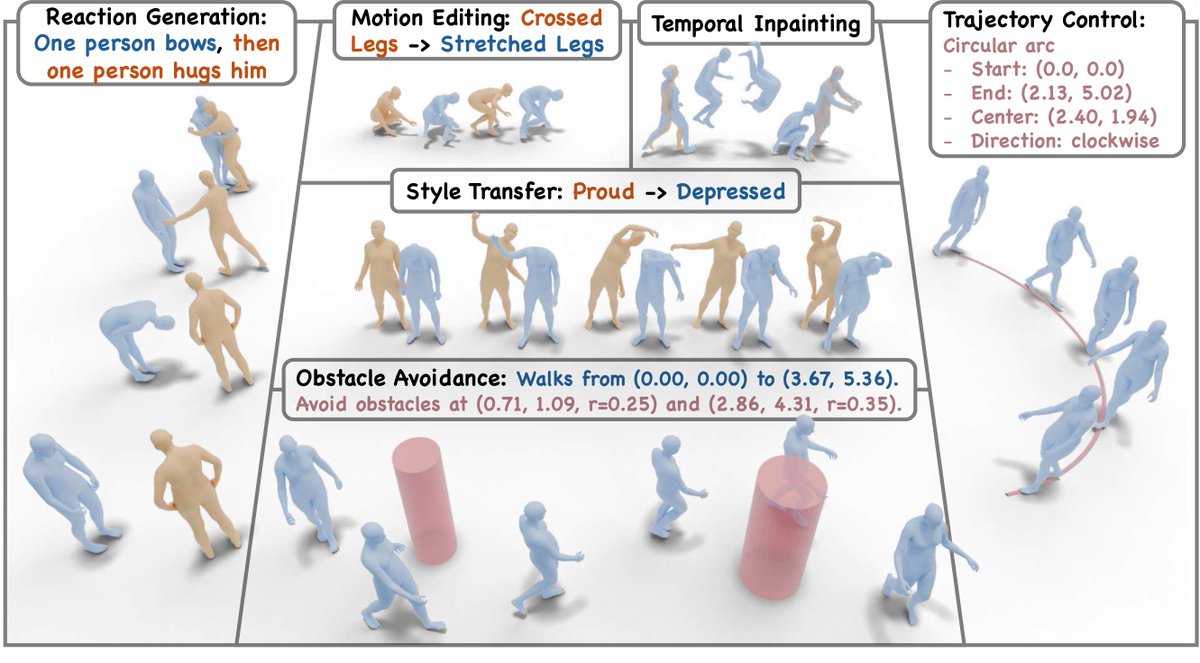

💡Introducing 𝑼𝑴𝑶 -- one unified model that unlocks motion foundation model (HY-Motion Tencent HY) priors for 𝟏𝟎+ 𝐭𝐚𝐬𝐤𝐬: 𝐞𝐝𝐢𝐭𝐢𝐧𝐠, 𝐫𝐞𝐚𝐜𝐭𝐢𝐨𝐧 𝐠𝐞𝐧𝐞𝐫𝐚𝐭𝐢𝐨𝐧, 𝐬𝐭𝐲𝐥𝐢𝐳𝐚𝐭𝐢𝐨𝐧, 𝐭𝐫𝐚𝐣𝐞𝐜𝐭𝐨𝐫𝐲 𝐜𝐨𝐧𝐭𝐫𝐨𝐥, 𝐨𝐛𝐬𝐭𝐚𝐜𝐥𝐞

We scaled off-policy RL to sim-to-real. To our knowledge, FlashSAC is the fastest and most performant RL algorithm across IsaacLab, MuJoCo Playground, and many more, all with a single set of hyperparameters. Project page: holiday-robot.github.io/FlashSAC Paper: arxiv.org/pdf/2604.04539