Kevin Xu

@kevin671xu

Ph.D. student at the University of Tokyo / Deep Learning, Neural Networks, Transformers

ID: 1496180074856730625

https://sites.google.com/g.ecc.u-tokyo.ac.jp/kevinxu

22-02-2022 17:48:56

13 Tweets

27 Followers

66 Following

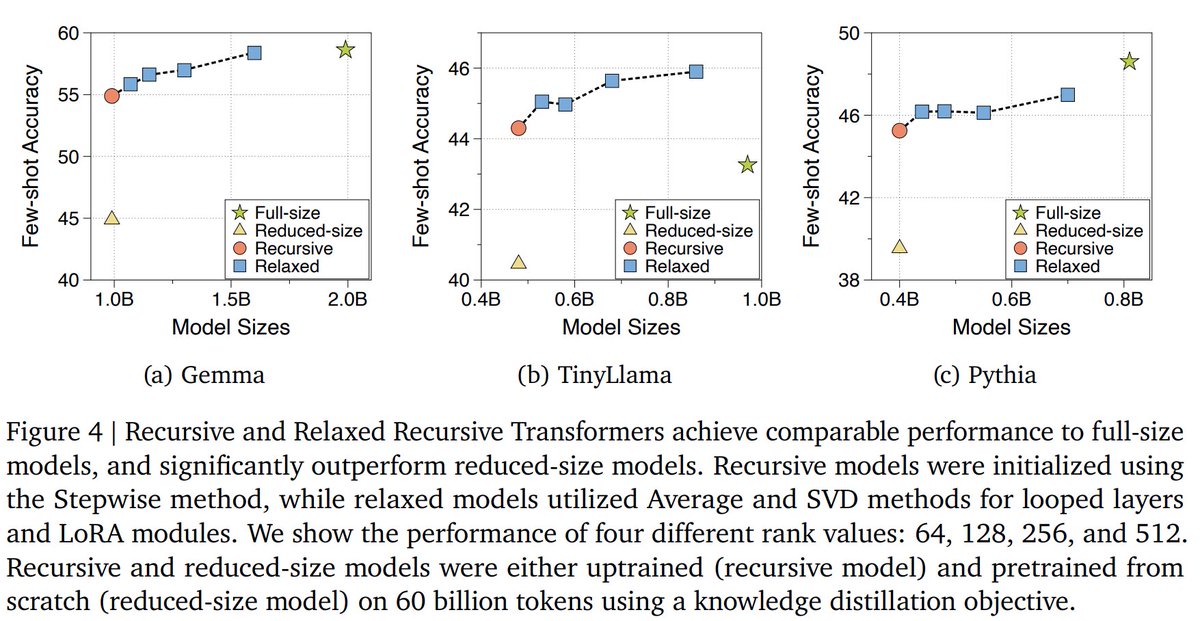

First practical Looped Transformer?! 😺 The latest research from Google DeepMind proposes Relaxed Recursive Transformers. The method works in three steps:
1. Take layers from a pretrained LM.
2. Group them into a shared, looped block and add LoRA.
3. Decode with early exit.
It recovers 90% of Gemma-2B's performance! Paper:
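The three steps can be illustrated with a toy sketch. This is a minimal sketch under stated assumptions, not the paper's implementation. Here a "layer" is a single d x d matrix with a residual tanh update. The shared weight is the average of the pretrained layers, which is only one possible initialization. Each loop gets its own zero-initialized LoRA delta, and the early-exit rule is an assumed max-softmax confidence threshold. All names (`init_shared_from_pretrained`, `RelaxedRecursiveBlock`, `forward_with_early_exit`) are hypothetical.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    # (n x k) @ (k x m) -> (n x m)
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def mat_add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def softmax(z: Vector) -> Vector:
    m = max(z)
    e = [math.exp(x - m) for x in z]
    s = sum(e)
    return [x / s for x in e]


def rand_matrix(rows: int, cols: int, scale: float, rng: random.Random) -> Matrix:
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


# Step 1 (assumed): collapse several pretrained layers into one shared weight.
# Averaging is just one simple initialization, used here for illustration.
def init_shared_from_pretrained(layers: List[Matrix]) -> Matrix:
    n, d = len(layers), len(layers[0])
    return [[sum(layer[r][c] for layer in layers) / n for c in range(d)] for r in range(d)]


class RelaxedRecursiveBlock:
    """Step 2 (toy): one shared d x d layer looped n_loops times, with a separate
    rank-r LoRA delta B_i @ A_i per loop to 'relax' the weight tying."""

    def __init__(self, shared: Matrix, n_loops: int, rank: int, rng: random.Random):
        if n_loops < 1:
            raise ValueError("n_loops must be >= 1")
        d = len(shared)
        self.shared = shared
        self.n_loops = n_loops
        # A_i is random and B_i starts at zero, so every loop initially uses exactly the shared weight.
        self.lora_a = [rand_matrix(rank, d, 0.1, rng) for _ in range(n_loops)]
        self.lora_b = [[[0.0] * rank for _ in range(d)] for _ in range(n_loops)]

    def loop_weight(self, i: int) -> Matrix:
        # W_i = W_shared + B_i @ A_i
        return mat_add(self.shared, matmul(self.lora_b[i], self.lora_a[i]))

    def step(self, h: Vector, i: int) -> Vector:
        # Residual update h <- h + tanh(W_i h), a stand-in for a full transformer layer.
        return [x + math.tanh(y) for x, y in zip(h, matvec(self.loop_weight(i), h))]


# Step 3 (assumed exit rule): stop looping once the exit head's top probability
# reaches `threshold`. Returns (predicted token id, number of loops used).
def forward_with_early_exit(block: RelaxedRecursiveBlock, exit_head: Matrix,
                            h: Vector, threshold: float) -> Tuple[int, int]:
    for i in range(block.n_loops):
        h = block.step(h, i)
        probs = softmax(matvec(exit_head, h))
        best = max(range(len(probs)), key=probs.__getitem__)
        if probs[best] >= threshold:
            return best, i + 1
    return best, block.n_loops


if __name__ == "__main__":
    rng = random.Random(0)
    pretrained = [rand_matrix(4, 4, 0.3, rng) for _ in range(6)]  # six hypothetical pretrained layers
    block = RelaxedRecursiveBlock(init_shared_from_pretrained(pretrained), n_loops=3, rank=2, rng=rng)
    exit_head = rand_matrix(5, 4, 0.5, rng)  # hypothetical 5-token vocabulary
    token, loops_used = forward_with_early_exit(block, exit_head, [1.0, -0.5, 0.25, 0.0], threshold=0.6)
    print(token, loops_used)
```

Zero-initializing each B_i means the sketch starts out as a plain, fully tied recursive model; the per-loop LoRA deltas only add depth-specific freedom once they are trained. Lowering `threshold` trades accuracy for fewer loops per token.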

Releasing a new "Agentic Reviewer" for research papers. I started coding this as a weekend project, and Yixing Jiang made it much better. I was inspired by a student who had a paper rejected 6 times over 3 years. Their feedback loop -- waiting ~6 months for feedback each time -- was