Jinuk Kim

@jusjinuk1

CS PhD student @SeoulNatlUni. Previously Research Intern @Google & @Samsung

ID: 1482603102545285120

https://jinukkim.me 16-01-2022 06:38:37

220 Tweets

39 Followers

268 Following

This matters a lot for making long-horizon RL tuning for LLMs scalable, especially if you're gpu-poor. In the long run, I agree with dr. jack morris (see x.com/jxmnop/status/…): Moving from post-training to training-aware methods is a proven recipe (e.g., GQA, QAT, Linear attention).

Rohan Pandey envs which codify domain-specific knowledge + eval criteria which isn’t readily apparent from web browsing

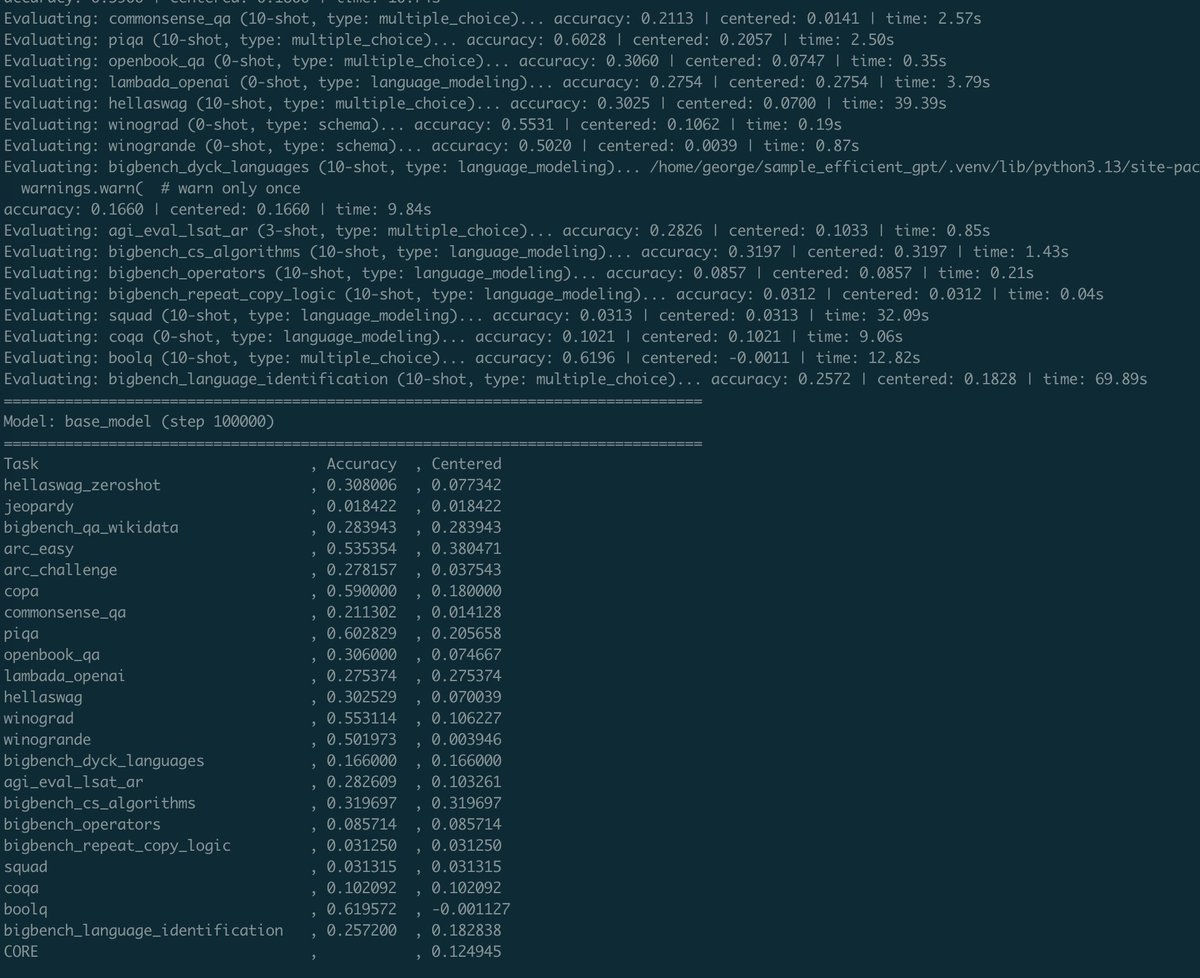

bro Andrej Karpathy literally re-implemented the entire lm-eval-harness in 2 Python files. It's been very useful for my own repo and easy to adapt for the SuperBPE case.

Peter Steinberger is joining OpenAI to drive the next generation of personal agents. He is a genius with a lot of amazing ideas about the future of very smart agents interacting with each other to do very useful things for people. We expect this will quickly become core to our