Jinyang (Patrick) Li

@jinyang34647007

CS PhD student @HKUniversity. Previously M.S. at @Columbia. Intern at @MSFTResearch, prev. at @AlibabaGroup.

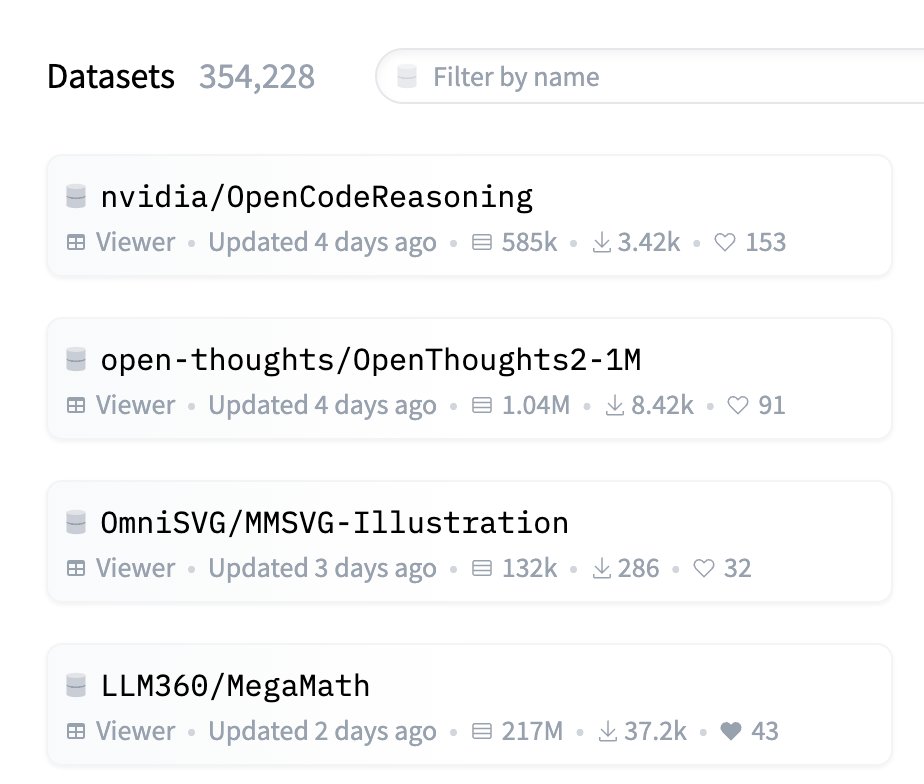

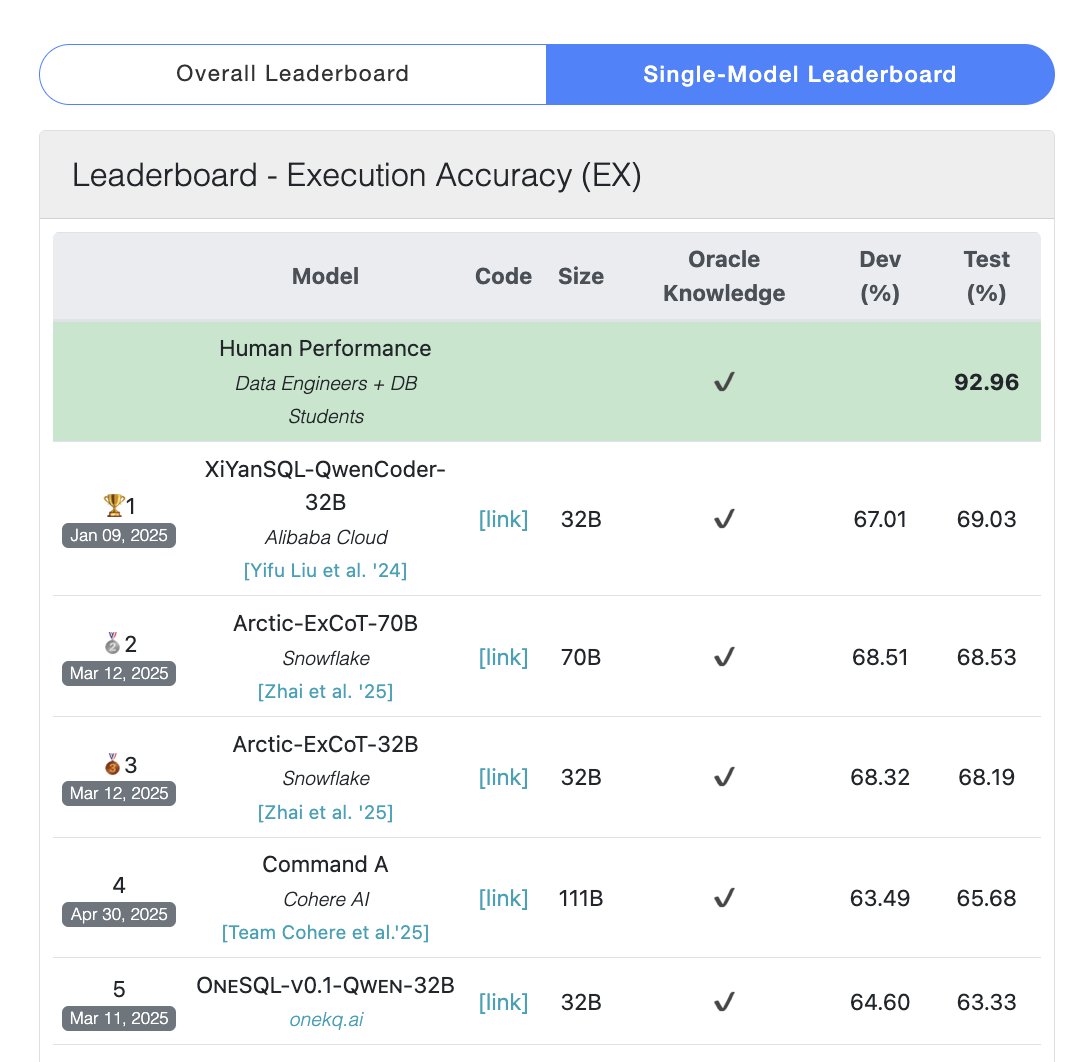

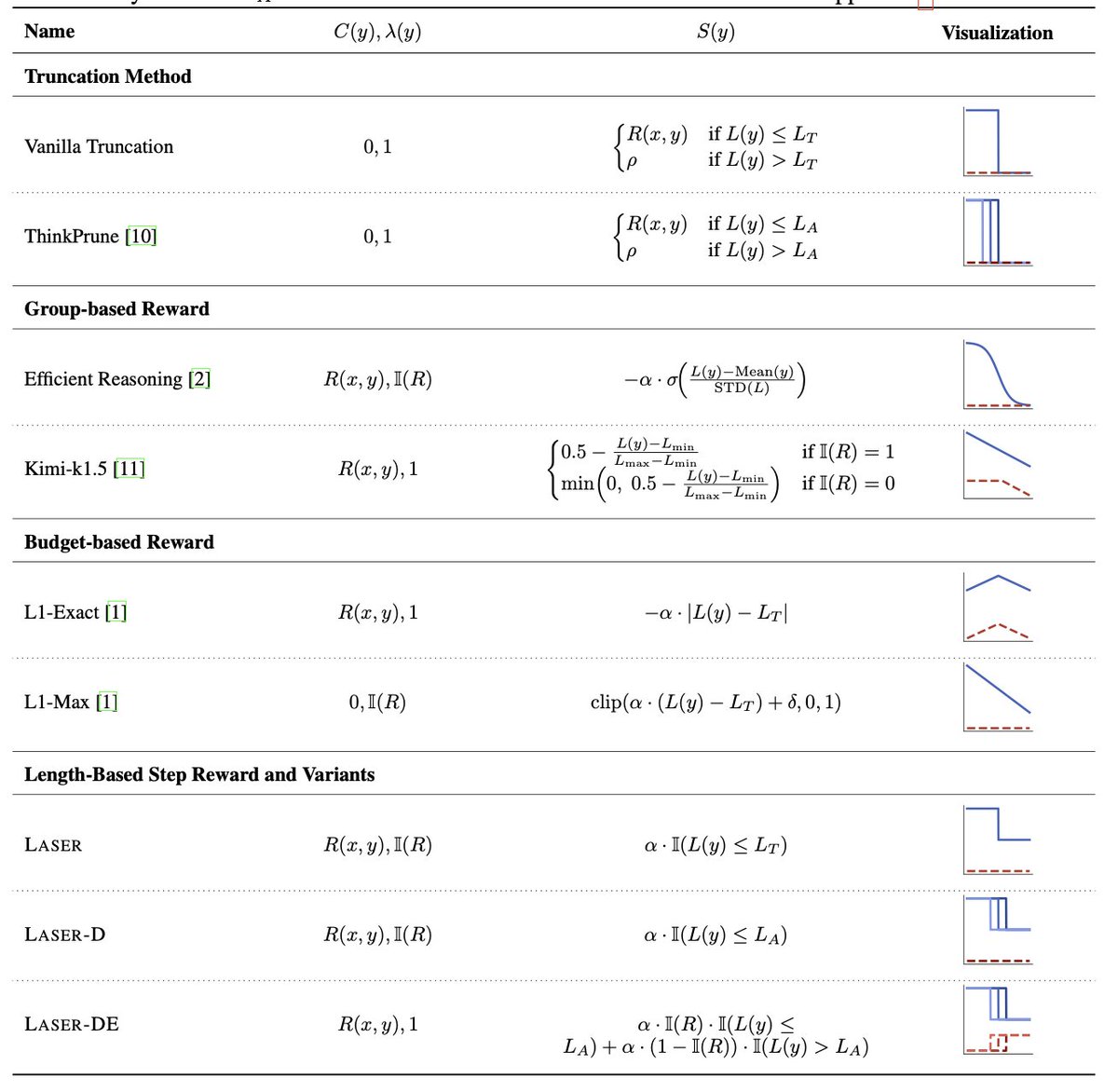

LLM, Interactive Semantic Parsing, text-to-SQL.

ID: 1463518977376673794

https://jinyang-li.me/ 24-11-2021 14:45:05

614 Tweets

1.1K Followers

1.1K Following