Jakob Heiss

@jakobheiss

PhD student in Mathematics at ETH Zürich

ID: 76379652

https://people.math.ethz.ch/~jheiss/

22-09-2009 16:40:22

37 Tweets

11 Followers

37 Following

If you are at #IJCAI2022, you can see both talks today in the Multi-Agent Systems session, from 3:30pm to 3:42pm. Joint work with Jakob Weissteiner, Jakob Heiss, Chris Wendler, Ben Lubin, Julien Siems, and Markus Püschel. Universität Zürich, ETH Zurich, ETH AI Center, European Research Council (ERC)

Jakob Heiss will present his ICML paper "NOMU - Neural Optimization-based Model Uncertainty" at the Uncertainty in AI reading group today at 5:30pm (Berlin time). uncertainty-reading-group.github.io/2021-11-28-tal… Co-authors: Jakob Weissteiner, Hanna Wutte, Sven Seuken, and Josef Teichmann.

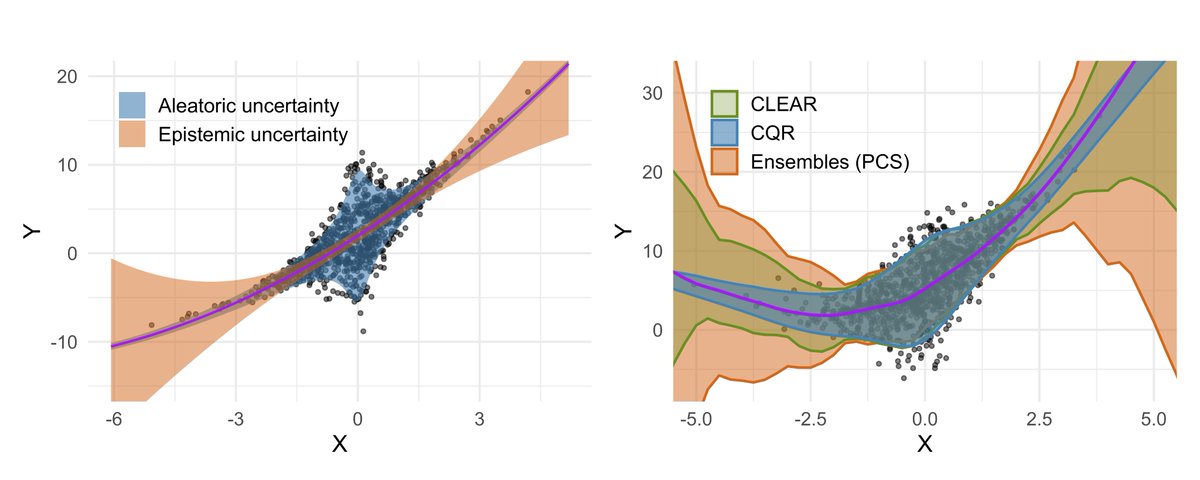

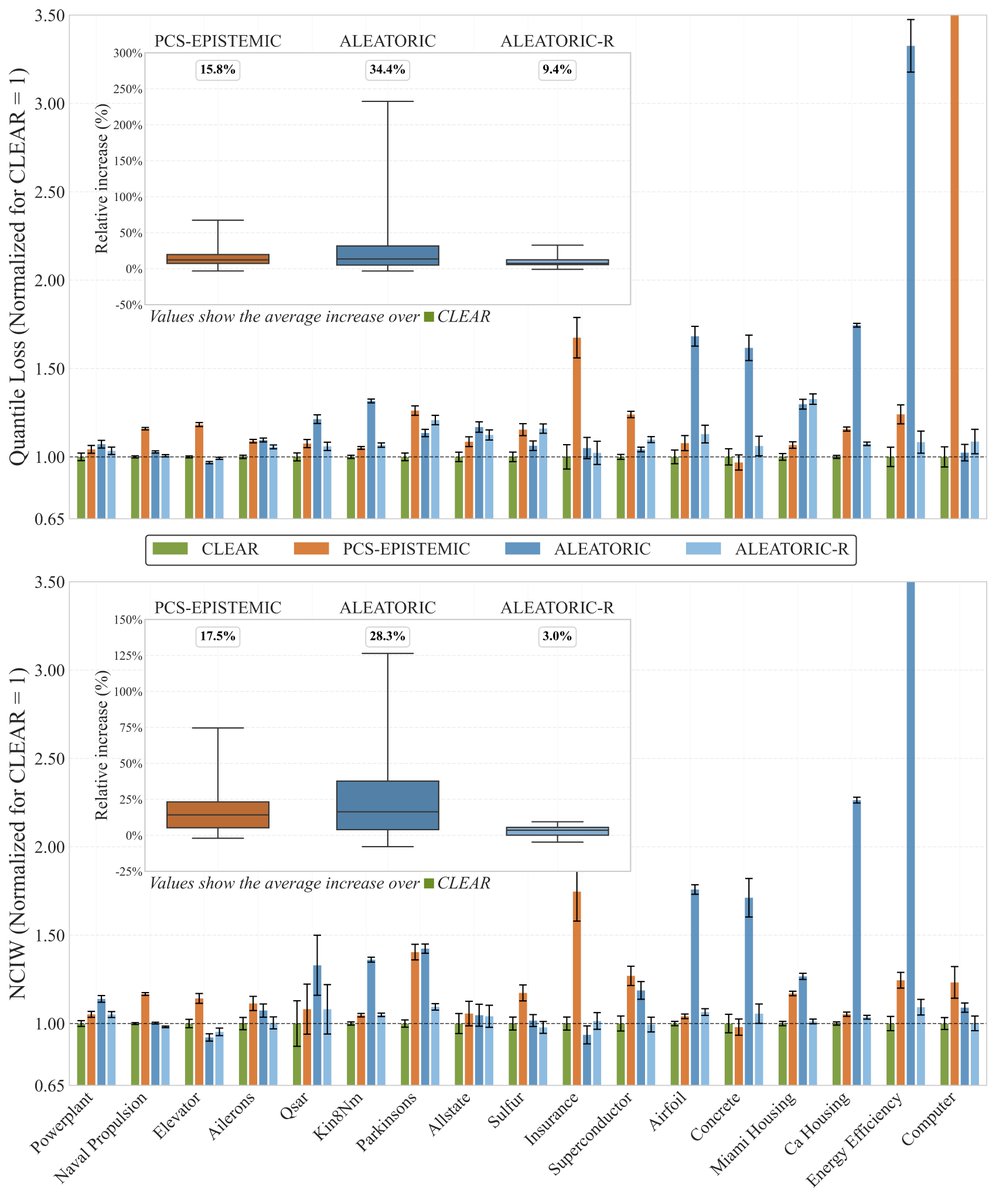

CLEAR is available as an open-source Python package, so feel free to try it: 🐍 `pip install clear-uq` 💻 unco3892.github.io/clear/ 📄 openreview.net/forum?id=RY4IH… 🔗 github.com/Unco3892/clear Joint work with my great co-authors juro bodik, Jakob Heiss, and Bin Yu. (4/4)