Ray Lillywhite

@lillywhiteray

🇹🇼

ID: 1102451411143389184

04-03-2019 06:11:13

204 Tweets

29 Followers

522 Following

Joshua Achiam I'm sorry, but this is ridiculous. The implication is that people should censor sincere assessments of our situation bc it might indirectly incite unwell individuals to violence? By that standard, nothing could ever be called an emergency, as it might cause panic, so to avoid

Every person here's reaction to the Jensen + Dwarkesh Patel podcast can be extrapolated *directly* from whether they believe in the frontier labs achieving short timelines for AGI/ASI. If you believe in the labs achieving RSI and then AGI/ASI (for some definition of all three) in

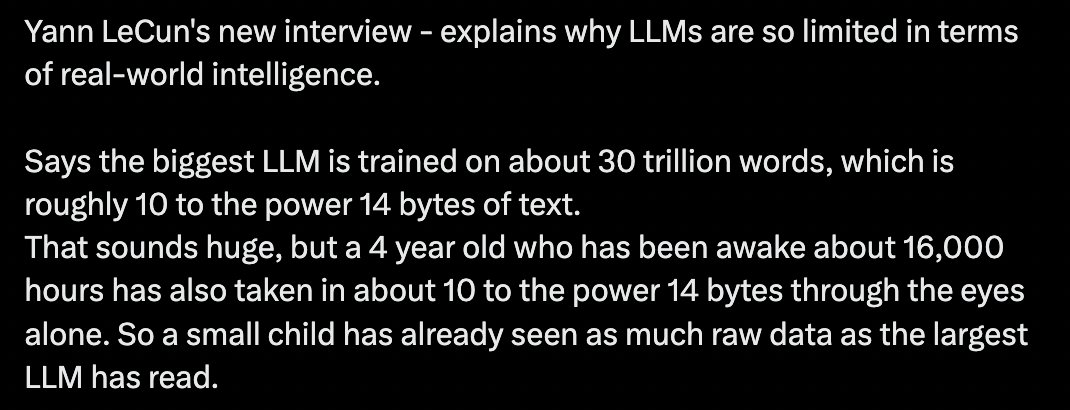

Yann LeCun Aghion Philippe Erik Brynjolfsson Economists and meteorologists... two professions built on past behavior models, constantly wrong and yet still listened to for some reason

Andrew Curran The old ones aren't even in GA. Last real model release was 11 months ago