Tapan jain

@jainnitk

Analytics Director @GroupM

ID: 75007226

17-09-2009 13:07:35

5.5K Tweets

418 Followers

4.4K Following

This is why Andrej Karpathy will go into the history books as one of the most consequential minds in AI of our time. 243 lines of ruthless compression, but a FULL training + inference loop for an autoregressive transformer. I feel this is also such a genius, quiet defiance of the “AI is

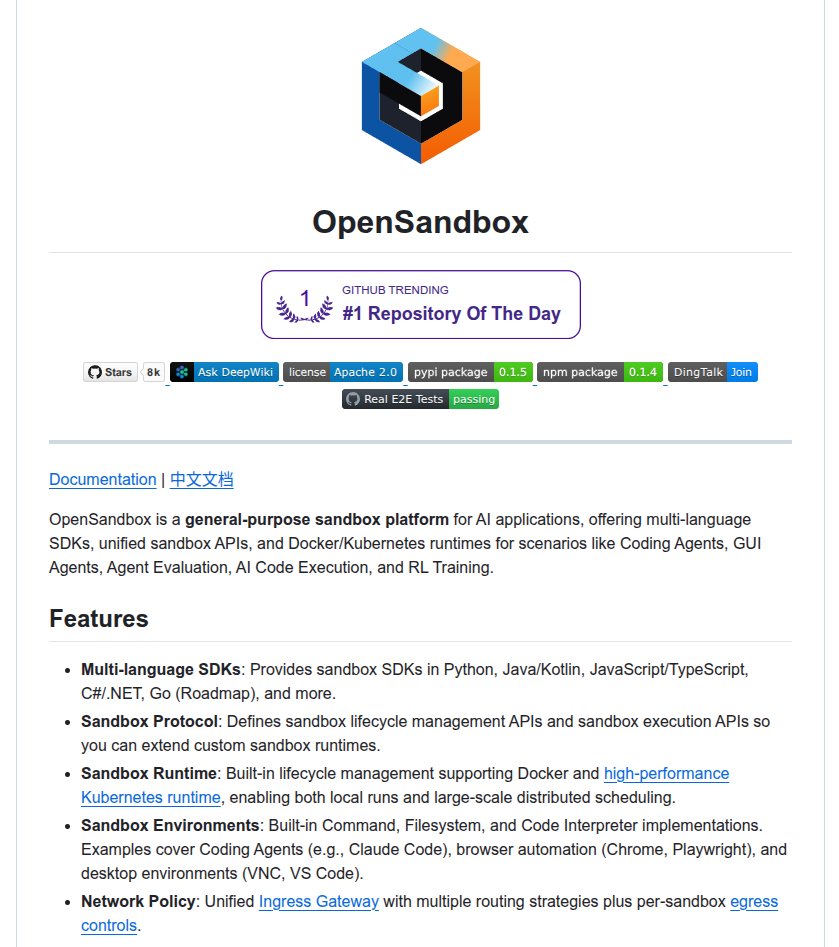

Andrej Karpathy Very inspiring as always! We are also open sourcing part of our infra on automated research for Gemini to evolve itself at github.com/google-deepmin… More complex than the nanochat setup but closer to SOTA LLM pre/post-training while staying as minimal as possible. More on the way.