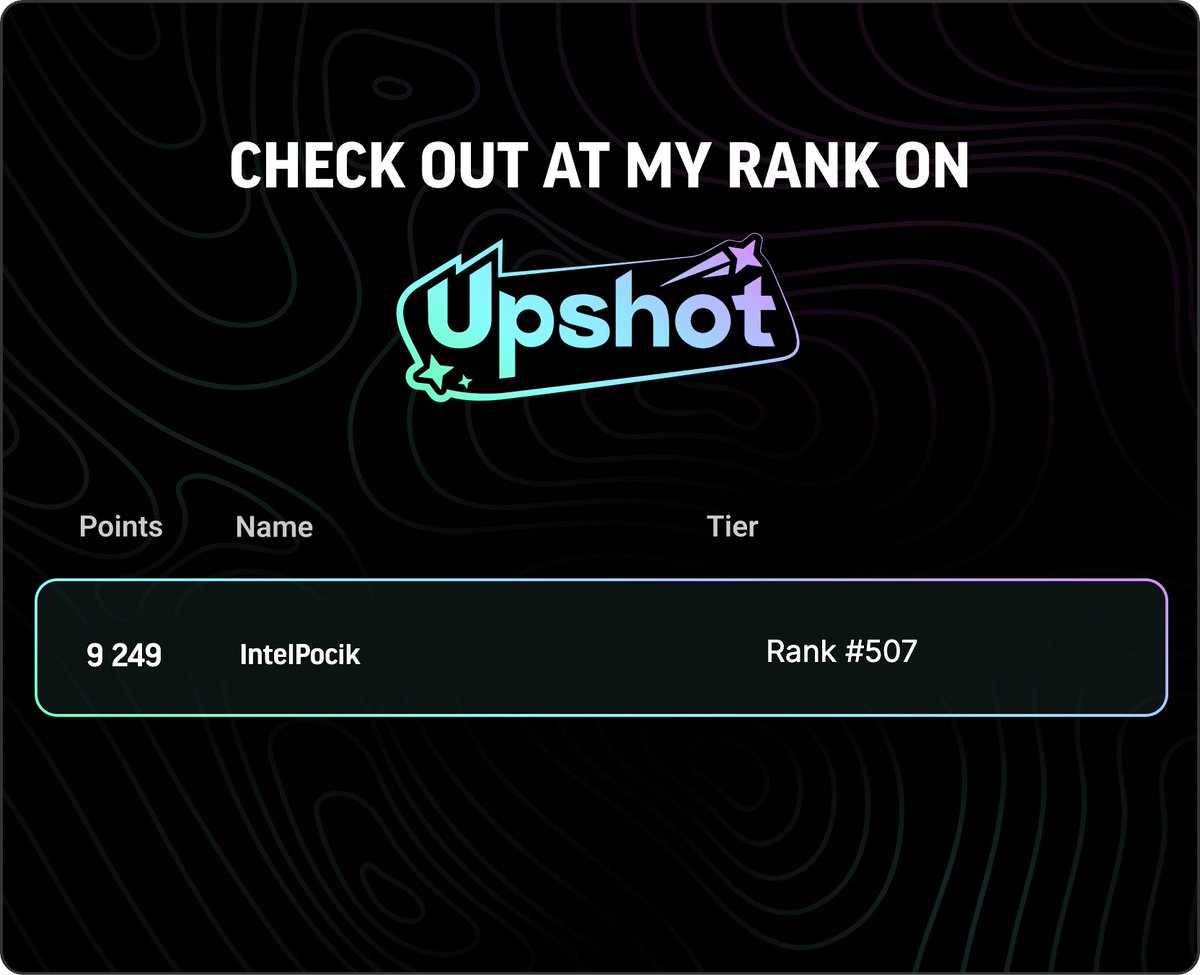

intelpocik.base.eth 👽 🍚 ⛓ (Ø,G).ink 👁️

@intelpocik

👽

#SomniaNetwork

ID: 1066785584

https://link3.to/bugattimusic

06-01-2013 21:44:51

5.5K Tweets

329 Followers

2.2K Following

If this works, I am pretty sure that the optimal formula for capital formation for early-stage startups is: 1) staking for long-term holders; 2) a day-1 TGE with a 20%+ release of tokens; 3) better to have zero investors, but if you have some, unlock them all 100% on the same day, 1 year after. x.com/oleksandrvolsk…