Ibragim

@ibragim_bad

Data for coding agents | @nebiusai

ID: 882369312517959682

04-07-2017 22:43:32

31 Tweets

56 Followers

80 Following

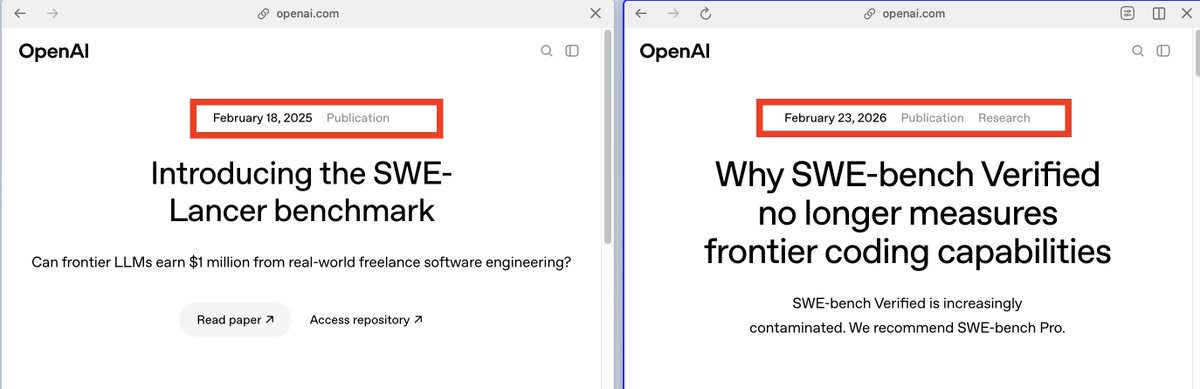

one interesting point is that OpenAI Developers didn't mention SWE-Lancer at all, despite its release just a year ago. I actually liked the idea of e2e tests for the front end and back end. but, yeah, a one-repo benchmark is not what you want

Ankith 🐋/acc, Ibragim, Simon Karasik, Alexander Golubev: A solid example of Docker isolation failing: CVE-2019-5736 in runc (Docker's default runtime). If the AI agent (running as root, common in sandboxes) drops malicious code that overwrites the host's runc binary via a /proc/self/exe symlink trick during any exec, boom, next