GX Xu

@gx_nlp

Research Engineer @ Red Hat AI Innovation

ID: 1542500294340186112

30-06-2022 13:28:40

26 Tweets

63 Followers

313 Following

Today, with Tim Dettmers, Hugging Face, & @mobius_labs, we're releasing FSDP/QLoRA, a new project that lets you efficiently train very large (70b) models on a home computer with consumer gaming GPUs. 1/🧵 answer.ai/posts/2024-03-…

A personal note: Unitxt originated within the Leshem (Legend) Choshen 🤖🤗 fusing team, aiming to streamline the sharing of academic outputs, primarily through model weights but also data. In the process of training various models on numerous datasets, we encountered significant challenges related

Congrats Google DeepMind on the new Gemma-2 27B & 9B release! Gemma-2 was tested in the Arena under the codename "*late-june-chatbots" and now out of stealth. Its early result matches the best open models (Llama-3-70B, Nemotron-340B) with only 27B parameters! Impressively,

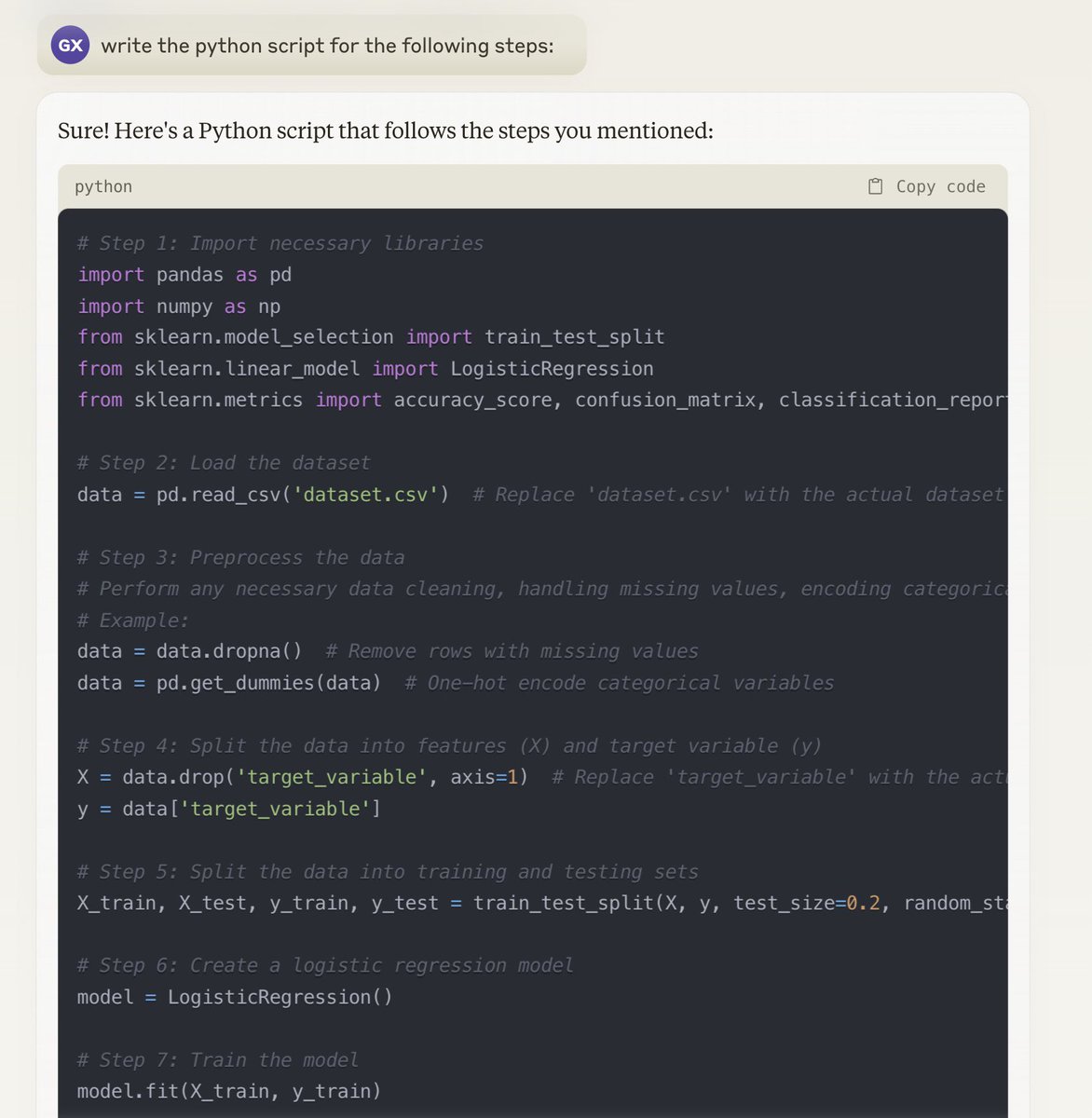

![Isha Puri (@ishapuri101) on Twitter photo [1/x] can we scale small, open LMs to o1 level? Using classical probabilistic inference methods, YES! Joint MIT CSAIL / Red Hat AI Innovation Team work introduces a particle filtering approach to scaling inference w/o any training! check out …abilistic-inference-scaling.github.io](https://pbs.twimg.com/media/GjERBNebIAE6IaO.jpg)

![Hao Wang (@hw_haowang) on Twitter photo [1/x] 🚀 We're excited to share our latest work on improving inference-time efficiency for LLMs through KV cache quantization---a key step toward making long-context reasoning more scalable and memory-efficient.](https://pbs.twimg.com/media/Gnx9W5FWIAA4vbt.jpg)