Giorgi Giglemiani

@giglema

ID: 1497280181249232901

25-02-2022 18:40:03

1 Tweet

13 Followers

156 Following

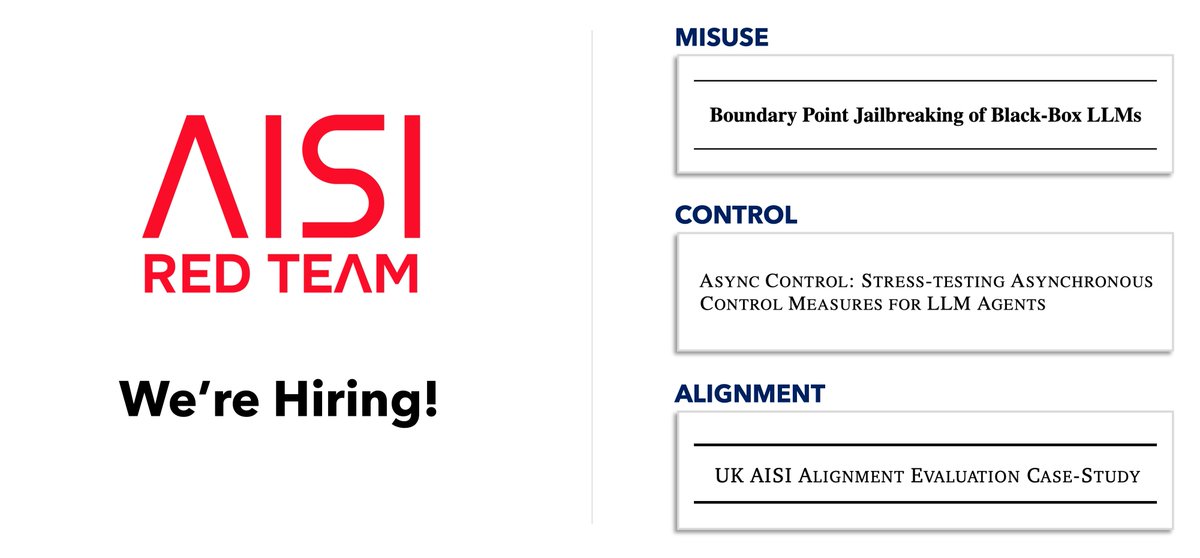

The Red Team at the AI Security Institute is hiring! We work with frontier AI companies to red team their misuse safeguards, control measures, and alignment techniques. As the stakes rise, we need much stronger red teaming and many more talented researchers working within gov 🧵

We at the AI Security Institute tested GPT-5.5's cyber safeguards, developing a universal jailbreak in 6 hours of red teaming. AISI also performed cyber capabilities testing -- more in the system card.