Zach Furman

@furmanzach

Singular learning theory and AI alignment research. Previously embedded SWE, aerospace, and physics.

ID: 1591503649729036288

http://zachfurman.com 12-11-2022 18:50:39

12 Tweets

111 Followers

169 Following

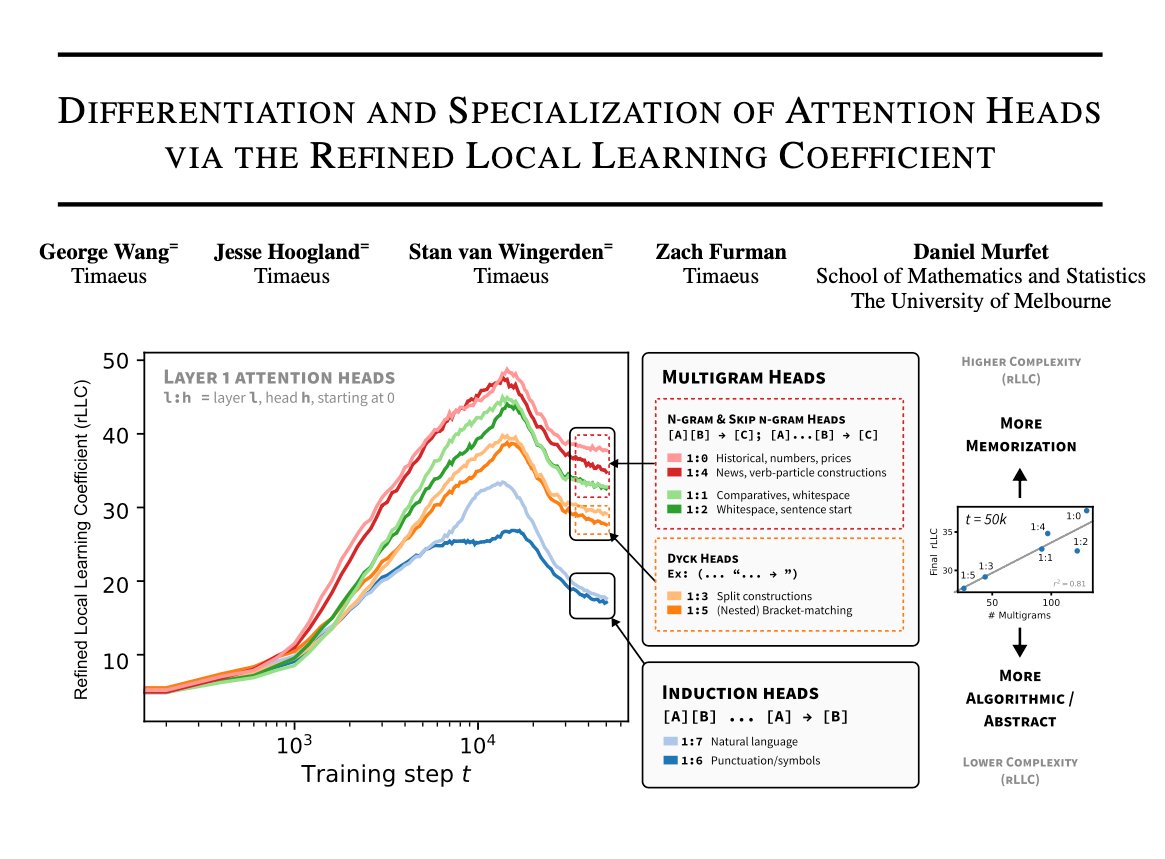

Timaeus is a new research organization, dedicated to making fundamental breakthroughs in technical AI alignment using deep ideas from mathematics and the sciences. Led by Jesse Hoogland, Consistently Candid Alex, Stan van Wingerden, and myself. lesswrong.com/posts/nN7bHuHZ… [1/n]

![Daniel Murfet (@danielmurfet) on Twitter](https://pbs.twimg.com/media/F9E24KHbYAANwCF.png)

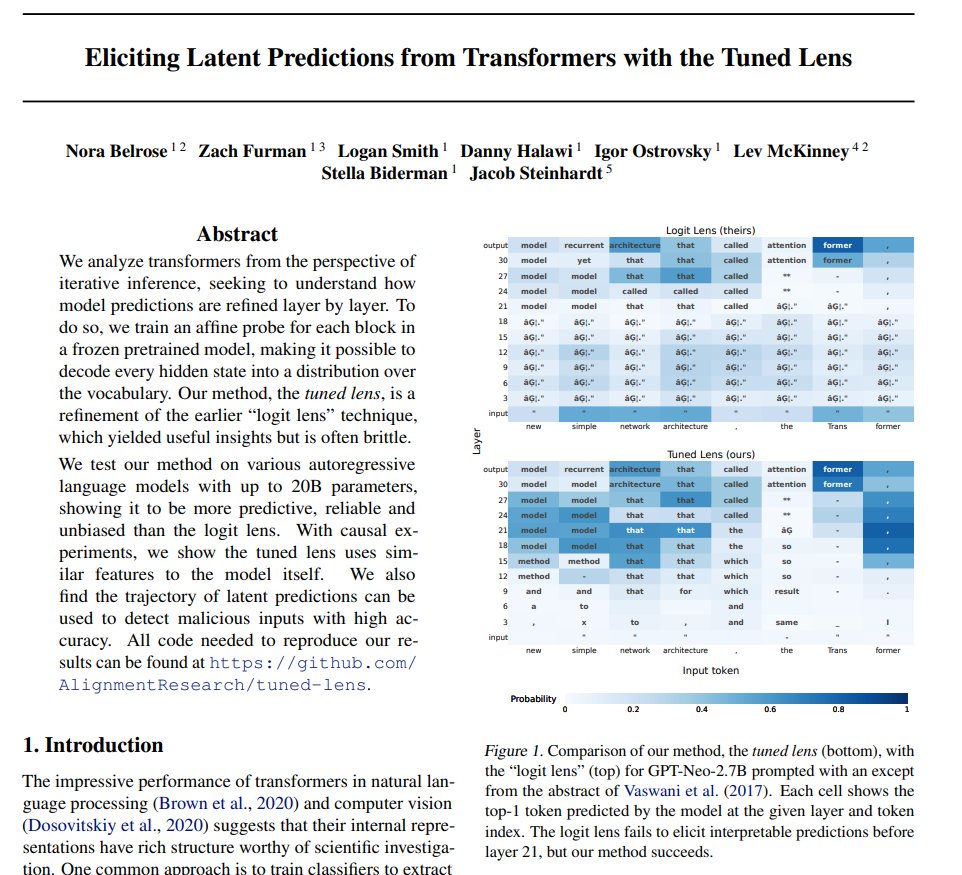

1/8 How do transformers learn? In our new work, we find that transformers develop in-context learning in discrete stages that can be automatically discovered. 🧵 arxiv.org/abs/2402.02364 Joint work w/ george, Matthew Farrugia-Roberts, Liam Carroll, Susan Wei, Daniel Murfet